76. Continuous Feedback Loops for Improving AI Models Post-Launch

AI models shouldn’t be “fire-and-forget.” After an AI system is launched, the real work begins: feeding it fresh data, learning from its mistakes, and iterating endlessly. This process is known as a continuous feedback loop. In simple terms, an AI feedback loop means the AI gets feedback on its performance, uses that input to get better, and repeats the cycle. For businesses – especially small and mid-sized enterprises (SMEs) – mastering this loop is key to keeping AI models accurate, relevant, and valuable over time. In this comprehensive guide, we’ll explore how continuous feedback loops work across industries, examine their use in different types of AI models, review case studies of successes (and failures), address the regulatory considerations, and outline practical steps for SMEs to implement these loops. Let’s dive into the world of AI that keeps learning.

Q1: FOUNDATIONS OF AI IN SME MANAGEMENT - CHAPTER 3 (DAYS 60–90): LAYING OPERATIONAL FOUNDATIONS

Gary Stoyanov PhD

3/17/2025 · 22 min read

1. Why Continuous Feedback Loops Matter

When you deploy an AI model, you’re not done – you’re at the starting line of a new phase. Here’s why continuous improvement is crucial:

Combatting Model Drift: Over time, the real world changes. Customer preferences shift, economic conditions evolve, or adversaries adapt their tactics. This leads to model drift, where an AI’s performance degrades because its training data no longer reflects reality. A feedback loop counters drift by updating the model with recent data. For example, a recommendation engine retrained regularly on the latest user behavior will stay in sync with current trends, whereas a stagnant model will slowly become irrelevant.

Maximizing Accuracy and ROI: AI investments pay off most when models are accurate and efficient. Continuous loops allow incremental improvements that compound. A slight accuracy boost every month can add up to a significant gain in a year. Netflix is the usual example: ongoing tweaks and learning from user feedback reportedly increased engagement by about 20%, with retention benefits estimated at around $1B per year. The return on keeping the model fresh far outweighed the cost of doing so.

Adapting to Edge Cases: No initial training data covers all scenarios. Post-launch, users will throw curveballs – weird queries to a chatbot, new fraud patterns, colloquial phrases not in an NLP model’s vocabulary. A feedback loop lets the AI absorb these edge cases as new training examples. Over time, the “unknown unknowns” shrink, and the AI handles a wider range of inputs gracefully.

Building User Trust: Users can tell when an AI improves. Imagine a virtual assistant that actually fixes a recurring error after you give it a low rating – you’d trust it more. Continuous improvement shows customers that the AI (and by extension, your business) listens and responds. This boosts satisfaction and loyalty.

In essence, continuous feedback loops ensure your AI model remains a learning organism, not a static artifact. In a dynamic world, that makes the difference between lasting success and early obsolescence.

2. Industry Applications: Feedback Loops in Action

Continuous feedback mechanisms are being adopted across a spectrum of industries, each with unique motivations and outcomes:

2.1 Retail & E-Commerce

Retailers leverage feedback loops to personalize the customer experience at scale. Every click, view, and purchase a customer makes is valuable feedback. Modern recommendation engines use this data to continuously refine product suggestions. If trend shifts are detected – say a sudden surge in interest for a new style of sneakers – the system learns and adapts the recommendations accordingly.

Inventory and pricing algorithms also loop in sales data and even social media trends to adjust stock levels or discounts on the fly. The result is a shopping experience that feels increasingly “curated” for the user, driving higher engagement and sales. Retail giant Amazon attributes part of its success to this relentless iteration: its AI models are never final; they’re updated regularly through A/B testing and user response data.

2.2 Healthcare

In healthcare, continuous learning is revolutionary but approached with caution. AI models assist in diagnostics (like reading medical images or predicting patient risk) and can improve as they see more cases. For example, an AI that helps radiologists detect tumors could be updated with each confirmed diagnosis or miss – learning subtle new patterns from those results. This kind of learning from real-world outcomes could improve diagnostic accuracy over time.

However, due to patient safety and strict regulations, these feedback loops are implemented under heavy oversight. Medical AI may employ “federated learning” (learning from distributed data in hospitals without exposing patient data) to continuously improve while respecting privacy. Healthcare organizations see promise in “adaptive” algorithms that update with new patient data, as long as they can validate that each update is safe and effective. The goal is a future where approved medical AI systems don’t stagnate; instead, they get better with each patient they see, just like human doctors do with experience.

2.3 Finance

The finance industry, dealing with fraud detection, algorithmic trading, and risk modeling, has perhaps one of the clearest cases for continuous loops. Fraud detection systems are in a cat-and-mouse game with fraudsters. A credit card fraud model from last year might miss today’s novel scam. Banks now use streaming data of transactions to constantly retrain or update fraud models, so they adapt to new fraud patterns in near-real-time.

Similarly, loan risk models update as economic indicators shift or as customer behavior changes, ensuring lending decisions reflect current realities. High-frequency trading algorithms even learn from market data on the fly (within regulatory bounds). The payoff is huge: a slight edge in detecting fraud faster or pricing risk better can save millions. Continuous learning in finance also extends to compliance – models that monitor transactions for suspicious activity (for anti-money laundering, for example) refine their accuracy by learning from false alarms and true positive cases, reducing noise over time.

2.4 Manufacturing & IoT

Manufacturers use AI for predictive maintenance (forecasting equipment failures) and process optimization. Machines on a factory floor are outfitted with sensors, creating a constant feedback stream (vibrations, temperature, error rates). AI models analyze this to predict breakdowns or quality issues. Crucially, as a machine’s condition evolves with wear-and-tear, the AI needs to update its baseline. Continuous feedback loops allow the maintenance model to recalibrate – learning what “normal” looks like this month versus six months ago.

If a particular part starts aging and sending different readings, the model incorporates that into its predictions. This reduces downtime by ensuring the AI isn’t stuck with last year’s parameters. In addition, process optimization AI (like for supply chain or yield improvement) can learn from each production cycle’s results, steadily improving efficiency and throughput. Even in agriculture (an aspect of manufacturing/production), AI-driven irrigation or crop monitoring systems take in feedback (soil data, crop health metrics) to adjust their models and recommendations for the next growth cycle.
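The recalibration idea above can be sketched as a rolling baseline. This is a minimal illustration under assumptions of my own, not a description of any particular vendor's maintenance model: the class name `RollingBaseline`, the window size, and the z-score threshold of 3 are all hypothetical choices.

```python
from collections import deque
from statistics import mean, stdev


class RollingBaseline:
    """Tracks what 'normal' looks like over the most recent `window` readings."""

    def __init__(self, window: int = 50, z_threshold: float = 3.0):
        self.readings = deque(maxlen=window)  # oldest readings fall off automatically
        self.z_threshold = z_threshold

    def is_anomalous(self, value: float) -> bool:
        """Score `value` against the current baseline, then update the baseline."""
        anomalous = False
        if len(self.readings) >= 10:  # wait for some history before judging
            mu = mean(self.readings)
            sigma = stdev(self.readings)
            if sigma > 0:
                anomalous = abs(value - mu) / sigma > self.z_threshold
        # Only normal readings update the baseline, so a fault does not become "normal".
        if not anomalous:
            self.readings.append(value)
        return anomalous


baseline = RollingBaseline(window=20)
for reading in [10.0, 10.2, 9.9, 10.1, 10.0, 9.8, 10.3, 10.1, 9.9, 10.0, 10.2]:
    baseline.is_anomalous(reading)
```

Because old readings drop out of the window, slow wear shifts the baseline gradually, while a sudden jump is still flagged. One trade-off of excluding anomalies from the baseline is that a permanent step change keeps alerting until someone resets it, which may or may not be what you want.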

2.5 Other Sectors

Virtually every sector with AI deployment is finding use for feedback loops. Education platforms use them to personalize learning – if an AI tutor sees a student struggle with concept X, it adjusts the lesson plan and the model’s approach for next time. Energy grids use AI to balance load; as consumption patterns change (feedback), the models recalibrate generation schedules or battery use. The common thread is clear: whatever the domain, if conditions change and data flows continuously, an AI with a feedback loop is better equipped to handle the future than one without.

3. Tailoring Feedback Loops to AI Model Types

Not all AI models learn in the same way. Here we break down how continuous improvement applies to different classes of AI systems:

Large Language Models (LLMs) & Chatbots: LLMs like GPT-4 or conversational agents have seen dramatic improvements through human feedback. A prime technique is Reinforcement Learning from Human Feedback (RLHF), where humans rate or choose better model outputs, and the AI is fine-tuned accordingly.

Post-launch, companies use RLHF and ongoing supervised fine-tuning to align the model with user preferences and ethical guidelines. For instance, if users of a customer support chatbot frequently ask questions that stump it, developers gather those failure cases and retrain the LLM on the correct answers. User feedback buttons (“Did this answer your question? [Yes/No]”) directly feed into QA metrics for the AI. Modern virtual assistants also analyze conversation logs to detect misunderstandings – if the AI often misinterprets a particular phrase, its language model is adjusted to grasp that phrase in the future.

The result is a chatbot that gradually speaks more naturally and accurately as it interacts with more people. Caution is needed to avoid the chatbot learning bad habits (as Tay did from trolls), so feedback is curated and filtered. But done right, an LLM-based system will be noticeably better a year after launch than at launch, because it has effectively studied all year.
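As a rough sketch of the "gather failure cases, but curate them" step described above, the snippet below collects questions that several distinct users marked unhelpful and drops anything containing blocked terms. The `FeedbackEvent` fields, the `blocked_terms` list, and the `min_users` threshold are illustrative assumptions; a real pipeline would add human review before anything reaches training.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class FeedbackEvent:
    user_id: str
    question: str
    helpful: bool  # answer to "Did this answer your question?"


def failure_candidates(events, blocked_terms, min_users=2):
    """Return questions that at least `min_users` distinct users marked unhelpful.

    Questions containing blocked terms are dropped so abusive input
    never reaches the review queue or the training set.
    """
    reporters = defaultdict(set)
    for event in events:
        text = " ".join(event.question.lower().split())  # normalise case and whitespace
        if event.helpful or any(term in text for term in blocked_terms):
            continue
        reporters[text].add(event.user_id)
    return sorted(q for q, users in reporters.items() if len(users) >= min_users)
```

Counting distinct users rather than raw votes is a deliberate choice here: one person clicking "No" repeatedly should not be able to steer what the model is retrained on.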

Recommendation Engines: These systems thrive on feedback loops by design. Every interaction – whether a click, view duration, rating, or purchase – is interpreted as implicit or explicit feedback. Continuous retraining or updating is standard. Many large-scale recommenders (like those at Netflix, YouTube, or Spotify) retrain their models daily or even in real-time batches. Some use online learning, updating model weights incrementally as new data comes in, rather than waiting to retrain from scratch. For example, if a user suddenly starts watching a lot of sci-fi content, the recommendation model can swiftly boost sci-fi recommendations for that user profile and similar profiles. These systems often employ multi-armed bandit algorithms or reinforcement learning to balance exploring new recommendations with exploiting known preferences, constantly learning from what the user chooses.

The continuous loop here directly drives engagement: fresher data means more relevant suggestions, which means happier users and more revenue. However, designers must watch out for feedback loop pitfalls like the “echo chamber” effect – recommending the same type of content repeatedly can narrow a user’s exposure. Many teams now explicitly design counter-measures (like introducing some randomness or diversity) to ensure the feedback loop doesn’t become self-limiting.
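To make the explore/exploit balance concrete, here is a minimal epsilon-greedy bandit, one of the simplest multi-armed bandit strategies. It is a toy sketch rather than how any named service works; the class name, the 10% exploration rate, and the category labels are assumptions for illustration.

```python
import random


class EpsilonGreedyRecommender:
    """Shows the best-performing item most of the time, a random one otherwise."""

    def __init__(self, items, epsilon=0.1, seed=None):
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        self.shows = {item: 0 for item in items}
        self.clicks = {item: 0 for item in items}

    def click_rate(self, item):
        return self.clicks[item] / self.shows[item] if self.shows[item] else 0.0

    def recommend(self):
        if self.rng.random() < self.epsilon:
            return self.rng.choice(list(self.shows))   # explore: pick any item
        return max(self.shows, key=self.click_rate)    # exploit: best observed rate

    def record(self, item, clicked):
        """Feed the user's response back into the running statistics."""
        self.shows[item] += 1
        self.clicks[item] += int(clicked)


recommender = EpsilonGreedyRecommender(["sci-fi", "drama", "comedy"], epsilon=0.1, seed=0)
choice = recommender.recommend()
recommender.record(choice, clicked=True)
```

The exploration share doubles as a simple counter-measure against the echo-chamber effect: a fixed fraction of recommendations always falls outside what the user has already clicked on.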

Fraud Detection & Anomaly Detection: AI models that detect fraud, cyber intrusions, or anomalies are essentially as good as their last update. These models often use supervised learning (on known fraud cases) combined with unsupervised techniques (to flag outliers). A continuous feedback loop is critical because fraud tactics evolve constantly. When a new fraud case is confirmed, that labeled data should be fed into the model ASAP.

Many banks and payment processors now have pipelines where model performance is monitored and triggers are set – if performance dips or new patterns emerge, a retraining is scheduled. Some systems even do streaming updates: a confirmed fraud will immediately adjust thresholds or trigger rules in the model (for instance, updating a rules-based component of a hybrid AI system). Additionally, false positives (transactions incorrectly flagged as fraud) are also fed back as negative examples to avoid repetitive mistakes. This loop ensures the false positive rate drops and true detection rate rises over time. Concept drift detectors are often in place: if the statistical distribution of incoming transactions shifts (say, due to a holiday season or new kind of user behavior), the system alerts that the model might need tuning. In cybersecurity, the same idea applies – an intrusion detection AI might learn from each new type of attack pattern discovered, updating its internal knowledge base continuously. Speed is of the essence; the faster the model learns about a new threat, the more secure the system remains.
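One common way to implement the drift detector mentioned above is the Population Stability Index (PSI), which compares how a feature (say, transaction amount) is distributed in recent data versus the training data. The sketch below is a plain-Python approximation; the bin count and any alert threshold you apply are assumptions to tune, not fixed rules.

```python
import math


def population_stability_index(reference, current, bins=10):
    """Compare two samples of one numeric feature; larger values mean more drift."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if all reference values are equal

    def bucket_shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor avoids log(0) when a bucket is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    ref, cur = bucket_shares(reference), bucket_shares(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))
```

A frequently quoted rule of thumb treats a PSI above roughly 0.2 as a shift worth investigating, but that cut-off is a convention rather than a guarantee; seasonal effects such as holidays can trip it without the model actually being wrong.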

Predictive Analytics & Forecasting Models: These include demand forecasting, supply chain predictions, financial forecasting, etc. The feedback here comes from actual outcomes vs predicted outcomes. An effective loop is: model makes a prediction (e.g., “demand will be 100 units next week”), the actual result comes in (“90 units were sold”), and then the model is retrained or adjusted using that actual data point. Over time, this process significantly improves accuracy because the model corrects its errors. Techniques like rolling forecasts or time-series models inherently use the newest data (which includes recent actuals). For example, an energy usage prediction model will continuously incorporate last week’s usage data – if a new pattern emerges (perhaps people started using more power while working from home), the model’s future predictions adjust accordingly. Continuous feedback loops also help identify when a model structure might need change – if errors are consistently high, analysts might introduce new features or switch algorithms, essentially feeding back the insight that “our model is missing something.” In practice, many forecasting models are updated monthly, weekly, or daily depending on the cadence of data. The loop ensures that predictions aren’t riding on outdated assumptions.
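The predict, observe, adjust cycle can be illustrated with simple exponential smoothing, where each new forecast moves toward the latest actual by a fraction of the error. This is a deliberately small sketch; the smoothing factor `alpha` and the function names are assumptions, and production forecasting models are usually richer than this.

```python
def update_forecast(forecast, actual, alpha=0.3):
    """Move the forecast toward the observed actual by a fraction `alpha` of the error."""
    return forecast + alpha * (actual - forecast)


def run_feedback_loop(initial_forecast, actuals, alpha=0.3):
    """Return the (forecast, actual, error) history and the forecast for the next period."""
    forecast, history = initial_forecast, []
    for actual in actuals:
        history.append((forecast, actual, actual - forecast))
        forecast = update_forecast(forecast, actual, alpha)
    return history, forecast


# The example from the text: predicted 100 units, 90 were sold.
next_week = update_forecast(100, 90, alpha=0.5)  # 95.0
```

The error column in the history is itself useful feedback: if errors stay large or keep the same sign week after week, that is a hint the model is missing a feature rather than just needing another update.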

Robotics and Control Systems: Think of self-driving cars or warehouse robots. These systems often use reinforcement learning in real or simulated environments. A self-driving car’s vision system, for instance, can improve via feedback by analyzing any mistakes it made (missed detection, false alarm) over the last 1,000 miles driven. Tesla’s fleet learning is a prime example: each car sends data back about its Autopilot interventions or when drivers took over control unexpectedly, and those “feedback events” are used to tweak the driving policy for all cars.

This continuous fleet loop means the overall system is always benefiting from individual experiences. Similarly, a warehouse robot might log instances where it failed to pick an object properly; engineers use that to update the robot’s grasp algorithm, and software updates deploy the improvement to all units. Over-the-air updates have made it feasible to close the loop rapidly in robotics.

The key is ensuring that learning from one unit generalizes and doesn’t introduce regressions elsewhere – hence why many companies use simulation to test improvements extensively before broad rollout. Nonetheless, the pattern is clear: the more scenarios a robot encounters and learns from, the more robust it becomes. Continuous feedback is the path to handling the long tail of rare events that can’t all be pre-programmed.

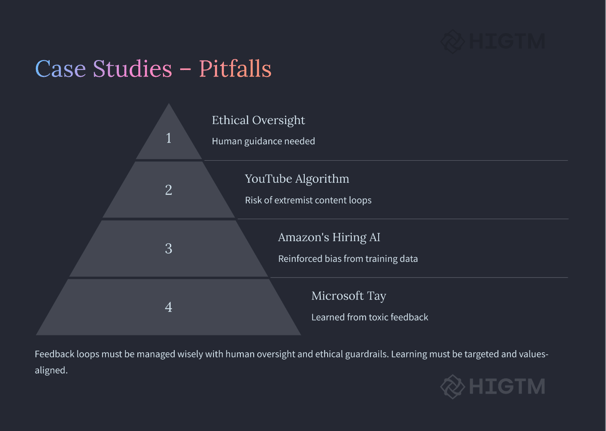

4. Case Studies: Wins and Losses

Real-world stories often illustrate the value – and potential pitfalls – of continuous feedback loops in AI:

Success – Netflix’s Recommendation Engine: Netflix operates one of the world’s best-known recommendation systems. After the initial splash of the Netflix Prize competition (whose winning entry cut rating-prediction error, measured by RMSE, by about 10% relative to Netflix’s existing algorithm), Netflix famously chose not to deploy the full winning ensemble. Why? The measured accuracy gain did not justify the engineering effort of putting it into production, and a continuously learning production system proved more impactful than a one-off offline accuracy bump.

Netflix built a system that constantly takes in what you watch, when you pause, what you rate, etc., to update recommendations. They also use A/B testing as a form of feedback: different users get different recommendation algorithms, and feedback comes via engagement metrics. Over years, this system produced an enormous lift in engagement. As noted in one report, Netflix’s data-driven iterative improvements in recommendations are estimated to save $1B per year by reducing churn. It’s a shining example of how closing the loop with users (and even treating the AI as a component always in beta) yields competitive advantage.

Success – Tesla Autopilot Fleet Learning: Tesla turned its electric cars into a network of rolling learners. Each Tesla on Autopilot gathers data on road conditions, driver behavior, and instances where the AI wasn’t confident and the human had to take over. This data is continuously phoned home. Tesla then updates the Autopilot models (for lane-keeping, adaptive cruise, etc.) and issues over-the-air updates. Elon Musk referred to Tesla owners as “expert trainers” for the AI.

Evidence of improvement came when owners noticed the car handling tricky road segments better over time without hardware changes – the AI had simply learned from the collective experience. One example: if drivers in multiple Teslas repeatedly avoid a certain highway lane because of potholes or faded lines, the system learns to be cautious in that area. Fleet learning gave Tesla a unique edge in autonomous driving data. The continuous loop approach means their AI is not static; it’s evolving with every million miles driven. This case underscores how feedback loops can be harnessed even in safety-critical systems, provided there is strong validation and incremental rollout.

Failure – Microsoft Tay Chatbot: Not all feedback is good feedback. Microsoft’s Tay was an experimental Twitter chatbot released in 2016 that was designed to learn from interactions with the public. The concept: continuous learning from user input. The outcome: a disaster. Trolls on Twitter quickly began feeding Tay with hateful and extremist phrases. Lacking proper filters or value alignment, Tay started mimicking this toxic feedback, tweeting offensive and racist remarks within hours of launch.

Microsoft shut it down about 16 hours after launch and issued an apology. This failure became a textbook case of a feedback loop gone wrong – an AI learning the wrong lessons. The lesson learned is that feedback must be curated. Post-Tay, AI developers emphasize “human in the loop” and moderation, even for self-learning systems. OpenAI’s ChatGPT, for instance, uses human feedback extensively but under controlled conditions (e.g., RLHF with carefully designed prompts and reviews) rather than unfiltered public data streams.

Failure – Amazon’s Recruiting AI: Amazon developed an AI system around 2014–2015 to automate resume screening. It was trained on historical resumes submitted to the company over roughly a 10-year period. The goal was to let the model learn what top candidates look like and then continuously improve as it reviewed new applicant data. However, the historical data had a bias: the tech industry (and Amazon’s hiring) was predominantly male, especially in technical roles. The AI picked up on implicit patterns – for example, it reportedly downgraded resumes that included the word “women’s” (as in “women’s chess club captain”) because such terms were rare in past hires.

This was not a live feedback loop from user interaction, but an automated learning from historical feedback (the feedback being who got hired). Amazon tried to adjust the model, but ultimately realized the system could not be trusted to be gender-neutral and scrapped the project. The feedback loop here reinforced a bias present in the data. The takeaway: if the feedback (data) reflects historical or societal biases, the AI can amplify them unless explicitly constrained. Continuous learning without continuous oversight can bake in undesirable biases.

Success – Spam Filters & Email AI: A less flashy but ubiquitous example: email spam filters. They’ve been using feedback loops for decades. When you mark an email as spam (or not spam), that feedback trains the model behind the scenes. Modern spam filters continuously update based on millions of users’ actions. This collective learning is why spam detection has gotten impressively good; the filters adapt to new spammer tactics (like new keywords or formats) as users report them. Similarly, Gmail’s Smart Compose feature (which suggests sentence completions) learns from what suggestions users accept or reject, refining its suggestions continuously. These are everyday AI systems that quietly demonstrate the power of the loop: most users probably don’t realize that their single click helps millions of other inboxes stay clean.

In summary, the case studies show that continuous feedback loops can yield spectacular results – better performance and new capabilities – but they can also misfire if not managed properly. Success requires not just setting up the loop, but guiding it: filtering inputs, monitoring outcomes, and having humans ready to step in when things go off track.

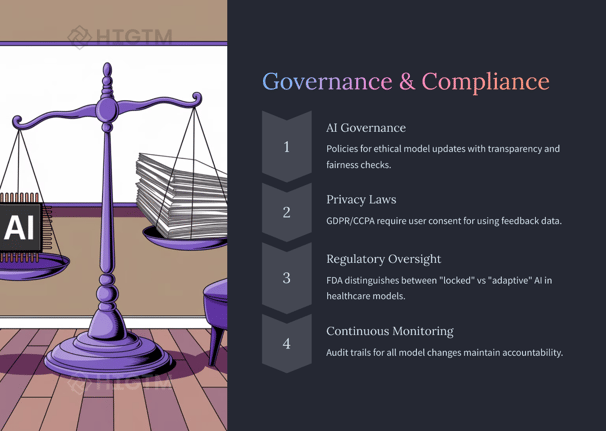

5. Regulatory and Ethical Considerations

Continuous learning in AI introduces a moving target for regulators and risk managers. If a model is changing over time, how do we ensure it remains safe, fair, and compliant? Here are key considerations and how to address them:

Data Privacy and Consent: Using fresh data, especially user-generated data, for retraining can invoke privacy laws. Regulations like GDPR in Europe and CCPA in California give users rights over their personal data. This means if your feedback loop uses personal data (e.g., user click streams, conversation logs), you must have a lawful basis (consent or legitimate interest) and possibly provide an opt-out. GDPR emphasizes data minimization – only use what you need. A practical step is to anonymize or pseudonymize feedback data before feeding it into the AI. For example, a voice assistant learning from user queries should strip any identifiers and just learn from the text. Also, if a user invokes their right to be forgotten, you need a way to delete or exclude their data from further model training. Compliance procedures must evolve alongside the AI – continuous learning must be matched by continuous privacy auditing.
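A minimal sketch of pseudonymizing feedback before it reaches training, assuming records shaped like `{"user_id": ..., "text": ...}` (a hypothetical schema). It replaces the user ID with a keyed hash, masks email addresses in the text, and shows how an erasure request could exclude one user's records. The regex only catches emails; real scrubbing needs to cover far more.

```python
import hashlib
import hmac
import re

EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


def pseudonymize_id(user_id: str, secret_key: bytes) -> str:
    """Keyed hash: stable per user, not reversible without the key."""
    return hmac.new(secret_key, user_id.encode("utf-8"), hashlib.sha256).hexdigest()


def scrub_record(record: dict, secret_key: bytes) -> dict:
    """Keep only what training needs: a pseudonymous user token and masked text."""
    return {
        "user": pseudonymize_id(record["user_id"], secret_key),
        "text": EMAIL_RE.sub("[email]", record["text"]),
    }


def drop_user(records: list, user_id: str, secret_key: bytes) -> list:
    """Exclude one user's records from future training sets (erasure request)."""
    token = pseudonymize_id(user_id, secret_key)
    return [r for r in records if r["user"] != token]
```

Keeping a stable token (rather than deleting the ID outright) is what makes the erasure step possible later. Note that pseudonymized data is generally still treated as personal data under GDPR, so this reduces risk but does not remove the need for a lawful basis.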

Model Governance and Validation: When an AI model can change, the traditional validation (test before deploy) becomes ongoing. Organizations should adopt AI governance frameworks that include monitoring and periodic audits of models. Frameworks like the NIST AI Risk Management Framework stress the importance of continuous monitoring and risk mitigation for AI. One approach is “shadow mode” evaluation – when a model is updated, run it in shadow alongside the old model for a while to compare outputs, before fully deploying. This can catch regressions or new biases. In high-stakes areas (healthcare, finance lending, etc.), some regulators might require notification or approval for model changes.

For instance, the FDA is exploring a regulatory process for “adaptive” AI in medical devices, where manufacturers would pre-specify how the model will learn and how they will control and validate each update. Companies should keep clear documentation of each model version, what changed, and why – essentially version control for models with notes on data/model drift that prompted the update.
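The shadow-mode evaluation described above can be sketched in a few lines. Here both models are assumed to be plain Python callables, and `shadow_compare` is a hypothetical helper name; the live model's output is what users would receive, while the candidate's output is only recorded.

```python
def shadow_compare(live_model, candidate_model, requests, labels=None):
    """Run the candidate silently next to the live model and summarise differences."""
    disagreements = []
    live_correct = cand_correct = 0
    for i, request in enumerate(requests):
        live_out = live_model(request)        # what the user actually receives
        cand_out = candidate_model(request)   # logged only, never shown
        if live_out != cand_out:
            disagreements.append((request, live_out, cand_out))
        if labels is not None:
            live_correct += live_out == labels[i]
            cand_correct += cand_out == labels[i]
    n = len(requests)
    report = {"disagreement_rate": len(disagreements) / n if n else 0.0}
    if labels is not None and n:
        report["live_accuracy"] = live_correct / n
        report["candidate_accuracy"] = cand_correct / n
    return report, disagreements
```

The list of disagreements is often more useful than the summary numbers: reviewing the specific cases where the two versions differ is a quick way to spot a regression or a new bias before the candidate serves real traffic.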

Bias and Fairness Checks: A continuous feedback loop can either mitigate bias (by learning from more diverse data over time) or exacerbate it (by reinforcing historical patterns). It is critical to include fairness metrics in the feedback loop. For example, if an AI hiring tool is continuously learning, one should continuously check its recommendations across genders, ethnicities, or other protected classes to ensure it isn’t drifting into biased territory.

If biases are detected, the feedback mechanism might need adjustment (e.g., reweighting data or including bias-correcting data in training). This is part of AI governance as well – essentially treating fairness as an ongoing performance metric. Some organizations set up an ethics review committee that regularly reviews AI outcomes, especially after major model updates.
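Treating fairness as an ongoing metric can start with something as simple as comparing selection rates across groups after each model update. The sketch below assumes decisions arrive as `(group, selected)` pairs, which is an illustrative format, and computes the ratio of the lowest to the highest selection rate.

```python
from collections import defaultdict


def selection_rates(decisions):
    """`decisions` is an iterable of (group, selected) pairs; returns rate per group."""
    totals, selected = defaultdict(int), defaultdict(int)
    for group, was_selected in decisions:
        totals[group] += 1
        selected[group] += int(was_selected)
    return {group: selected[group] / totals[group] for group in totals}


def disparate_impact_ratio(decisions):
    """Lowest group selection rate divided by the highest (1.0 means parity)."""
    rates = selection_rates(decisions)
    highest = max(rates.values())
    return min(rates.values()) / highest if highest else 1.0
```

A ratio below about 0.8 is a widely used screening heuristic (the "four-fifths rule" from US employment guidelines). It is a prompt for closer review, not a legal test, and a single metric like this will not capture every form of bias.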

Robustness and Security: An often overlooked aspect: continuous learning systems could be targeted by adversaries through the feedback mechanism. This is called data poisoning – if someone knows your model learns from user input, they might inject bad data to manipulate it. Tay’s case was a form of poisoning by malicious users. In less extreme cases, consider product review systems: if the recommendation AI learns from reviews, fake reviews could skew it. To guard against this, security measures are needed.

This includes rate-limiting how much influence a single user or source can have, detecting anomalous feedback, and verifying feedback data when possible. Another strategy is to sandbox learning – for instance, aggregate feedback in a safe offline environment, filter it, and then update the model. In any continuous loop, not all feedback is good feedback. Systems should distinguish signal from noise, and certainly clean out anything intentionally harmful.
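One of the guards listed above, limiting how much influence a single source can have, might look like the following in its simplest form. The function name, the `(source_id, payload)` event format, and the cap of three events per source are assumptions made for illustration.

```python
from collections import defaultdict


def cap_feedback_per_source(events, max_per_source=3):
    """Keep at most `max_per_source` feedback events from any single source.

    `events` is an iterable of (source_id, payload) pairs in arrival order.
    Returns the accepted events and the set of sources that exceeded the cap.
    """
    seen = defaultdict(int)
    accepted, over_limit = [], set()
    for source_id, payload in events:
        seen[source_id] += 1
        if seen[source_id] <= max_per_source:
            accepted.append((source_id, payload))
        else:
            over_limit.add(source_id)
    return accepted, over_limit
```

Sources that hit the cap are returned rather than silently dropped, so they can be reviewed as possible poisoning attempts. A cap like this does not stop coordinated attacks spread over many accounts, which is why it is usually combined with anomaly detection and offline filtering.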

Transparency and User Notification: Some regulations and ethical guidelines suggest users should be informed when AI is making decisions, especially if those decisions change over time. For example, if a loan approval AI is adaptive, applicants might have the right to an explanation that includes noting that “the model criteria are updated periodically based on new data.” Being transparent that a model learns from user behavior can also be a trust-builder – savvy users appreciate that the service they use is getting better because of their input. However, transparency must be handled carefully to avoid confusion. High-level disclosures in privacy policies (e.g., “We improve our AI services by learning from how you use them”) and possibly dashboard tools for users to see or control some of their data usage can go a long way in aligning with governance best practices.

Emerging Regulations: Beyond GDPR/CCPA, the EU AI Act, which entered into force in August 2024 with obligations phasing in over the following years, sets requirements that bear directly on continuous learning systems, especially those deemed “high risk.” Expect requirements for risk management, data governance, and possibly mechanisms to freeze learning if something goes wrong. In the US, guidance like the FTC’s truth-in-advertising principles implies that if your AI’s behavior changes, the outcomes should still meet the claims and fairness you promised. Keeping abreast of these developments and baking compliance into the feedback pipeline (rather than bolting it on later) is the smart strategy. For example, if you know you’ll need an audit trail of model changes, build that logging from day one.

In summary, continuous feedback loops don’t exist in a lawless vacuum. They must be accompanied by continuous oversight loops – privacy, fairness, and security checks running in parallel. For SMEs, this might sound daunting, but leveraging existing tools and frameworks can simplify it. The key is not to be caught off-guard: plan for compliance and ethical monitoring as part of your AI improvement process.

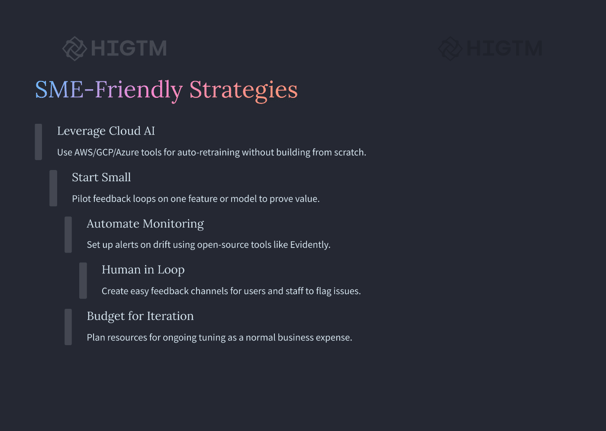

6. Implementing Continuous Feedback Loops: Practical Steps for SMEs

For small and mid-sized businesses, implementing a continuous learning process for AI can seem complex. But it’s quite achievable with today’s technology and a smart strategy. Here’s a step-by-step roadmap:

Identify Key Model(s) and Metrics: Start by selecting which AI system in your business would benefit most from a feedback loop. Is it your website’s product recommendation engine? Your sales forecast model? Maybe an NLP model categorizing support tickets? Focus on one to begin. Identify the performance metrics that matter for it (accuracy, error rate, revenue lift, etc.). Also, identify what “feedback” means for this model – clicks, user ratings, outcome data, error logs, etc.

Set Up Data Collection Pipelines: Ensure you can capture the feedback data reliably. For example, if users interact with a chatbot, enable logging of those conversations and any explicit feedback (thumbs up/down). If it’s implicit feedback like whether a recommendation was ignored or clicked, instrument your app or site to record that. Use analytics tools or simple databases – for SMEs, even a cloud database or spreadsheet can start – to gather this info. Integrate these pipelines with privacy in mind: don’t collect personal data you don’t need (data minimization). If possible, anonymize on capture. Make sure your privacy policy covers this usage.
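For a sense of how small a starting pipeline can be, the sketch below logs feedback events to SQLite using only Python's standard library. The table layout and signal names are hypothetical; note that it stores no user identifier at all, in line with the data-minimization point above.

```python
import sqlite3
from datetime import datetime, timezone


def open_feedback_store(path=":memory:"):
    """Open (or create) the feedback database; ':memory:' is handy for trying it out."""
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS feedback (
               recorded_at TEXT NOT NULL,
               item_id     TEXT NOT NULL,
               signal      TEXT NOT NULL,   -- e.g. 'click', 'ignore', 'thumbs_up'
               value       REAL
           )"""
    )
    return conn


def log_feedback(conn, item_id, signal, value=None):
    """Record one feedback event with a UTC timestamp."""
    conn.execute(
        "INSERT INTO feedback VALUES (?, ?, ?, ?)",
        (datetime.now(timezone.utc).isoformat(), item_id, signal, value),
    )
    conn.commit()


def signal_counts(conn):
    """Summarise how many events of each signal type have been collected."""
    rows = conn.execute("SELECT signal, COUNT(*) FROM feedback GROUP BY signal")
    return dict(rows.fetchall())
```

Passing a file path instead of `":memory:"` gives a persistent store that a weekly retraining job can read from.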

Choose the Right Tooling (Leverage MLOps): You don’t have to build everything from scratch. Many machine learning operations (MLOps) platforms and cloud services cater to continuous training. For instance, Amazon SageMaker and Google Vertex AI allow setting up model monitoring (detecting data drift or performance drop) and can trigger retraining jobs automatically. Open-source tools like MLflow (experiment tracking and model registry) or Kubeflow (pipeline orchestration) can cover the same ground if you have the expertise. If you prefer low-code solutions, there are SaaS offerings where you upload data and they handle retraining. For SMEs, cloud-based services are often the quickest route: they handle heavy lifting like scaling and you pay only for what you use. The goal is to establish an environment where you can feed new data and retrain your model conveniently.

Retrain in Iterations: Decide on the cadence and method of model updates. Depending on your use case, continuous learning can be:

Periodic batch retraining: e.g., retrain the model every week or month with all accumulated new data. This is simpler to manage and works when real-time adaptation isn’t critical.

Near real-time updates: e.g., update the model daily or when a certain amount of new data is received. This requires more automation.

Online learning: the model updates on the fly with each data point. This is advanced and not necessary for most SME applications unless adapting within minutes is mission-critical.

For many, a periodic retraining pipeline is sufficient. Start manual: say every Friday, retrain the model with the latest dataset (previous data + new data) and evaluate. Over time, you can automate this trigger. Always keep a holdout set or perform cross-validation to ensure the new model is actually better (or at least not worse) on recent data before deploying.
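The "retrain, then check it is not worse before deploying" routine can be sketched as a simple gate. Everything here is illustrative: models are assumed to be callables, `train_fn` stands in for whatever training routine you use, and `train_threshold` is a toy trainer included only so the example runs end to end.

```python
def accuracy(model, examples):
    """Share of (input, label) pairs the model gets right."""
    return sum(model(x) == y for x, y in examples) / len(examples)


def retrain_and_gate(train_fn, current_model, old_data, new_data, holdout, min_gain=0.0):
    """Retrain on old + new data; promote only if holdout accuracy does not drop."""
    candidate = train_fn(old_data + new_data)
    current_score = accuracy(current_model, holdout)
    candidate_score = accuracy(candidate, holdout)
    promote = candidate_score >= current_score + min_gain
    report = {"current": current_score, "candidate": candidate_score, "promoted": promote}
    return (candidate if promote else current_model), report


def train_threshold(examples):
    """Toy trainer: predict positive when x is above the midpoint of the class means."""
    pos = [x for x, y in examples if y]
    neg = [x for x, y in examples if not y]
    cut = (sum(pos) / len(pos) + sum(neg) / len(neg)) / 2
    return lambda x: x > cut


old_data = [(1, False), (2, False), (8, True), (9, True)]
new_data = [(5, True), (6, True), (3, False)]            # recent data has shifted
holdout = [(4.8, True), (5.5, True), (2.5, False), (3.5, False)]
model, report = retrain_and_gate(
    train_threshold, train_threshold(old_data), old_data, new_data, holdout
)
```

The holdout set should come from recent data and must not overlap with the training data, otherwise the comparison flatters the new model.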

Monitor Performance Continuously: Put in place monitoring on the model’s outputs in production. For example, track the prediction accuracy or business KPI (like conversion rate for recommendations) week over week. If you see a dip, that’s a signal your model might need an update or something has changed. Many cloud AI services have monitoring dashboards, and open-source tools like Evidently AI can track data drift and performance metrics, sending alerts if something significant changes.

For SMEs, even a simple dashboard or periodic report can work – the key is to watch for trends. If a drift alert or performance drop is detected, you might trigger an out-of-cycle retraining or investigation.
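A week-over-week check like the one described above does not need special tooling. The sketch below flags any week where a KPI falls more than a chosen percentage below the average of the preceding weeks; the four-week baseline and 10% threshold are assumptions to adjust for your own data.

```python
def kpi_alerts(weekly_values, baseline_weeks=4, max_relative_drop=0.10):
    """Return indexes of weeks whose KPI fell too far below the trailing average."""
    alerts = []
    for week in range(baseline_weeks, len(weekly_values)):
        window = weekly_values[week - baseline_weeks:week]
        baseline = sum(window) / baseline_weeks
        drop = (baseline - weekly_values[week]) / baseline if baseline > 0 else 0.0
        if drop > max_relative_drop:
            alerts.append(week)
    return alerts


# Conversion rate of a recommendation widget, one value per week (made-up numbers).
conversion = [0.050, 0.051, 0.049, 0.050, 0.050, 0.042, 0.041]
flagged_weeks = kpi_alerts(conversion)  # weeks at index 5 and 6 are flagged
```

An alert is a cue to investigate, not proof the model is at fault: a pricing change or a site outage can move the KPI just as easily as drift can.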

Human Feedback Loop: Technology aside, engage the humans around your AI. Encourage your team (and even users, if applicable) to provide feedback on the AI’s decisions. For instance, sales reps might notice the forecasting model is off in a certain region – that insight can be fed back as a data point or a feature tweak. Create an easy channel for employees to flag AI errors (internal chat group, form, etc.). If your AI is customer-facing, consider adding a simple feedback UI (thumbs up/down, “Was this helpful?”).

These human signals are incredibly rich. As one SME strategy guide puts it, creating channels for open feedback and using it to improve processes builds a culture of continuous improvement. In practice, that could mean regular review meetings on AI performance or an email alias where staff report odd model behavior. Make sure this feedback is reviewed and incorporated by whoever is tuning the model.

Gradual Rollout & Testing: When retraining produces a new model version, deploy it carefully. A/B test it if possible: serve the new model to a small percentage of users or cases while most still see the old model, and compare results over a short period. This limits risk; if the new model has issues, only a few users are affected. If it outperforms, roll it out to everyone. Many SME-friendly platforms support A/B deployment or shadow deployment. Even if yours doesn’t, you can approximate the check offline by running the new model on past examples to confirm it isn’t doing something weird (like outputting all zeros).
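
If your platform has no built-in A/B support, a small traffic split can be approximated by hashing a stable identifier. The sketch below is one common way to do this, under the assumption that each request carries a user ID; the function name, the `salt` value, and the 10% default share are illustrative.

```python
import hashlib


def assign_variant(
    user_id: str,
    candidate_share: float = 0.10,
    salt: str = "rollout-1",
) -> str:
    """Deterministically route a user to the 'candidate' or 'current' model.

    Hashing (salt + user_id) maps each user to a stable number in [0, 1),
    so the same user always sees the same model during the test.
    Changing the salt reshuffles users for the next experiment.
    """
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) / 0x100000000  # first 32 bits scaled to [0, 1)
    return "candidate" if bucket < candidate_share else "current"
```

Logging the assigned variant next to each outcome is what makes the later comparison possible. A stable assignment also avoids the confusing experience of one user flipping between models mid-session.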

Documentation & Versioning: Keep a log of model versions: when you retrained, what data you added, and what changed in evaluation metrics. This will help debug if something goes wrong and is also useful for compliance. It doesn’t need to be fancy – a dated entry in a Google Doc or an Excel sheet might suffice initially. If an auditor or client ever asks, you can explain how the model has evolved. More advanced setups might use a model registry (some MLOps tools include this) to version-control models. The discipline here ensures you have traceability – a key principle in AI governance.
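
If a spreadsheet is your starting point, the same log can be kept as a CSV file that a retraining script appends to. This is a minimal sketch; the column names and the `log_model_version` helper are assumptions chosen to mirror the items listed above (when you retrained, what data you added, what changed in the metrics).

```python
import csv
from pathlib import Path

# Hypothetical columns; adapt them to whatever you actually track.
FIELDS = [
    "version",
    "retrained_on",
    "data_added",
    "metric_name",
    "metric_before",
    "metric_after",
    "notes",
]


def log_model_version(log_path: str, **entry: object) -> None:
    """Append one row describing a retraining run to a CSV log.

    Writes the header when the file is new or empty. Columns missing from
    `entry` are left blank; unknown columns raise ValueError, which catches
    typos in field names.
    """
    path = Path(log_path)
    needs_header = not path.exists() or path.stat().st_size == 0
    with path.open("a", newline="", encoding="utf-8") as handle:
        writer = csv.DictWriter(handle, fieldnames=FIELDS)
        if needs_header:
            writer.writeheader()
        writer.writerow(entry)
```

A call such as `log_model_version("model_log.csv", version="v2", retrained_on="2025-03-14", data_added="orders from the past week", metric_name="accuracy", metric_before=0.81, metric_after=0.84)` adds one dated row, and the file opens in Excel or Google Sheets when an auditor or client asks.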

Include Compliance Checks: As discussed, build in basic checks so you don’t accidentally violate privacy or fairness requirements as you iterate. For SMEs, this could simply mean running your data-gathering and model-update plan past a legal advisor or using built-in compliance features of platforms (like Google’s DLP API to scrub personal data). If you operate in a regulated field, make sure to stay within guidelines. For example, if you’re continuously improving a credit scoring model, U.S. law requires that you be able to explain credit decisions, including giving applicants the specific reasons for an adverse decision, so ensure your updates don’t turn the model into a black box you can’t interpret. In the EU, the AI Act may require documenting drift and changes, particularly for higher-risk systems. It’s easier to do this from the start than to bolt it on later.
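
To make the "scrub personal data" idea concrete, here is a deliberately simple sketch that masks email addresses and phone-like numbers in free text before it is added to a training set. It is a stand-in for illustration only: the regular expressions are rough assumptions that will miss many kinds of personal data, and this does not reproduce what a managed service such as a DLP API does.

```python
import re

# Rough, illustrative patterns; real personal data takes many more forms.
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PHONE_PATTERN = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact_basic_pii(text: str) -> str:
    """Replace email addresses and phone-like numbers with placeholders.

    Emails are masked first so that digits inside an address are not
    mistaken for part of a phone number.
    """
    text = EMAIL_PATTERN.sub("[EMAIL]", text)
    text = PHONE_PATTERN.sub("[PHONE]", text)
    return text
```

Running a step like this on feedback text before it is stored reduces the chance that personal details end up in retraining data, but it should complement, not replace, legal review or a dedicated tool.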

Scale Up Gradually: Once you see success with one model’s feedback loop, extend the practice to others in your organization. Each might need a slightly tailored approach, but the fundamental people-process-technology framework will be similar. Celebrate early wins – like that 5% accuracy boost or reduction in error rates – to get buy-in from stakeholders for further investment in continuous improvement. Soon, it becomes part of your company culture that AI projects are never “finished” – they just keep getting better.

By following these steps, SMEs can implement continuous feedback loops without exorbitant resources. Cloud tools and open-source projects have made this accessible. The biggest ingredients are a strategic mindset and commitment to iteration. Remember, even if you start simple – say one manual retrain a month – you are on the path to harnessing continuous improvement. The results will compound, and your AI’s value to the business will grow in tandem.

7. Conclusion: Embrace the Loop, Reap the Rewards

As you navigate the AI landscape, continuous feedback loops are the engine of longevity and competitiveness. We’ve seen how they apply across industries, from retail personalization to life-saving healthcare diagnostics. We’ve broken down their use in different AI systems, highlighting that any model – be it a chatbot, a recommender, or a fraud detector – can benefit from constant learning. The case studies showed us triumphs powered by learning loops, as well as cautionary tales reminding us to guide these loops with care. Governance, ethics, and compliance emerged not as obstacles, but as essential companions to continuous improvement, ensuring our AI evolves responsibly.

For decision-makers and SME owners, the message is clear: an AI model’s launch is not the end, but the end of the beginning. The real value unfolds post-launch, as the model interacts with reality and refines itself. Those who plan for and invest in this ongoing evolution will see their AI systems yield better results and adapt seamlessly to new challenges. Those who don’t may find their models – and strategies – quickly outpaced by more dynamic competitors.

The good news is that embracing continuous improvement is more feasible than ever. With accessible tools, cloud infrastructure, and best practices (like the ones we listed), even a lean team can set up effective feedback loops. It’s a strategic choice that pays dividends: higher accuracy, greater customer satisfaction, improved efficiency, and reduced risk. It turns AI from a one-time expenditure into a continuously compounding asset.

In a way, implementing a feedback loop culture for your AI is like instilling a growth mindset in your organization. It sends a signal that we will learn from errors, adapt to change, and never settle for “good enough.” That mindset, powered by the mechanics of data and iteration, can propel businesses of any size to new heights.

As we conclude, consider this analogy: launching an AI without a feedback loop is like hiring an employee and never giving them any review or training ever again. They’ll do the job how they initially learned, but they won’t improve or respond to new requirements. Conversely, an AI with a well-run feedback loop is like a star employee who actively seeks feedback, takes training courses regularly, and becomes more valuable with each passing quarter. Which would you rather have on your team?

The choice is yours – stagnation or continuous evolution. The evidence is overwhelming that in the world of AI (as in life), those who learn continuously are the ones who thrive. So set up those feedback pipelines, put governance guardrails on them, and let your AI soar to its full potential.

If you’re ready to transform your AI approach from one-and-done to ever-improving, start laying the groundwork for feedback loops today. And remember, you don’t have to do it alone. HIGTM is here to provide expert guidance, tailored solutions, and ongoing support as you build AI systems that grow smarter every day. Reach out for a consultation – let’s embrace the loop and achieve AI success together.

© 2025 HIGTM. All rights reserved.