62. Prototyping & Piloting AI in Retail – An Iterative Approach to Reduce Risk

Instead of “go big or go home,” successful retailers are adopting a “start small and learn” philosophy. Prototyping and piloting AI solutions in iterative steps is emerging as the best practice to reduce risk while still moving quickly to seize opportunities. This article explores how a structured iterative approach to AI can help retail businesses innovate confidently – minimizing costly failures and maximizing ROI.

Q1: FOUNDATIONS OF AI IN SME MANAGEMENT - CHAPTER 3 (DAYS 60–90): LAYING OPERATIONAL FOUNDATIONS

Gary Stoyanov PhD

3/3/2025 · 18 min read

1. Embracing Iterative AI Piloting in Retail

Retailers are learning that careful experimentation beats all-in bets when it comes to AI.

1.1 Why Big-Bang AI Projects Falter

Modern retailers often feel pressure to implement AI – whether for personalized marketing, inventory optimization, or cashier-less stores – in a big, transformative push. However, many grand AI projects fail to deliver. Without validation, an AI rollout to hundreds of stores or millions of customers can misfire due to unseen flaws. Studies indicate that over 80% of AI projects fail to meet their objectives when organizations don’t have the right processes. Common pitfalls include unclear objectives, poor data quality, and lack of user acceptance. In retail, these translate to wasted budget, frustrated staff, or even alienated customers if a new AI system doesn’t work as intended. A cautionary example is a retailer that deploys an AI pricing engine chain-wide overnight, only to find it misprices items due to a data glitch – resulting in lost sales and customer complaints. Such failures highlight the need for a safer approach.

1.2 The Case for “Test and Learn”

An iterative “test and learn” strategy is a proven antidote to big project risk. Rather than implementing a full solution outright, leading retailers launch prototypes and pilots – small-scale versions of the AI idea – observe the results, then refine or expand. This approach offers multiple benefits:

Early Validation: It proves on a micro-scale that the AI actually solves the intended problem (or not). For instance, will a chatbot actually reduce calls to customer service? A pilot in one department or on one channel can answer this before you invest in a company-wide chatbot.

Controlled Investment: By limiting scope, retailers spend only a fraction of the full budget initially. If the idea isn’t a winner, they’ve saved the remainder (and can pivot). If it shows promise, they can confidently invest more, knowing the fundamentals are sound.

Organizational Learning: Each prototype teaches the team about what data is needed, how employees and customers react, and what operational changes are required. These lessons are invaluable and often prevent costly mistakes during scale-up.

In summary, a pilot approach embraces failure as a possibility – but contains its impact. In the words of one innovation lead, “fail fast, fail cheap, and adjust.” For retail, where margins are thin and customer experience is king, this measured approach ensures any AI that scales has been battle-tested in miniature.

2. Structured Methodologies for Retail AI Prototyping

Not all experiments are created equal – the best retailers follow structured methods to make AI prototyping effective.

2.1 Lean AI Experimentation

Applying Lean Startup principles to AI in retail means starting with a clear hypothesis and the smallest experiment to test it. For example, a fashion retailer might hypothesize, “AI-driven outfit recommendations will increase online basket size by at least 10%.” Instead of spending a year building a sophisticated engine for all customers, they set up a lean experiment: use a simple rules-based recommendation or off-the-shelf AI on a subset of the website for a month, and measure basket sizes versus a control group. This lean approach focuses on learning – was the hypothesis correct? Retailers keep experiments cheap and quick: perhaps using a manual process behind the scenes (Wizard of Oz method) or a basic model, just enough to get data. The lean experimentation cycle – Build > Measure > Learn > Iterate – repeats until the team finds a configuration that delivers value or decides the idea isn’t viable. In our example, maybe the pilot shows only a 3% uplift, prompting the team to tweak the recommendation strategy or target a different customer segment, then test again. Lean experimentation in retail AI prevents teams from over-engineering solutions that customers don’t actually want or need. It ensures the problem-solution fit is validated early, using minimal resources.
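The “measure” step of that cycle can be sketched in a few lines. This is a minimal illustration, assuming basket values have already been exported per order for the control and test groups; the function names and the sample figures are hypothetical and chosen only to reproduce the 3% versus 10% situation described above.

```python
from statistics import mean

def relative_uplift(control, treatment):
    """Relative change in mean basket size, treatment vs. control."""
    baseline = mean(control)
    return (mean(treatment) - baseline) / baseline

def evaluate_hypothesis(control, treatment, target_uplift=0.10):
    """Compare the measured uplift with the uplift stated in the hypothesis."""
    uplift = relative_uplift(control, treatment)
    return {"uplift": uplift, "hypothesis_supported": uplift >= target_uplift}

# Hypothetical basket values (in currency units), not real pilot data.
control_baskets = [50.0, 40.0, 60.0, 50.0]      # mean 50.0
treatment_baskets = [52.0, 41.0, 61.5, 51.5]    # mean 51.5

result = evaluate_hypothesis(control_baskets, treatment_baskets)
print(f"Uplift: {result['uplift']:.1%}, "
      f"supported: {result['hypothesis_supported']}")
```

A real pilot would use far more orders per group and would also check whether the difference is larger than random noise before acting on it.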

2.2 Rapid MVP Development

A Minimum Viable Product (MVP) for an AI solution is a scaled-down version that still performs the core function. In the retail context, an MVP might be: a chatbot that can handle the top 5 FAQ questions, a vision model that tracks inventory for one category, or a personalization algorithm for a single product line. The MVP isn’t fancy – it might even have some manual processes hidden behind the scenes – but it’s real enough to test in a live environment. The goal is speed: get the MVP out in a subset of the real world quickly (within weeks or a couple of months, not years). For instance, a supermarket chain looking to implement AI-based demand forecasting could build an MVP that forecasts demand for just dairy products in one region, rather than a full system for all products nationwide. By doing so, they can observe how the MVP performs against actual sales in that region, and gather feedback from the local inventory planners.

This rapid MVP approach has two key advantages: (1) It exposes the AI to real-world conditions (messy data, edge cases, user behavior) early, and (2) it creates momentum – stakeholders see something working in practice, which builds confidence and urgency. Retail teams should define clear success criteria for the MVP pilot (e.g., “forecast error under 5% for dairy category”) and be prepared to iterate on the MVP in short cycles. If the MVP hits the mark, it forms the foundation for a broader solution. If not, the team can adjust inputs, algorithms, or even the use-case, with minimal sunk cost.
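A success criterion such as “forecast error under 5%” only works once the error metric is pinned down. The article does not say which metric is meant; the sketch below assumes mean absolute percentage error (MAPE), and the weekly dairy figures are invented for illustration.

```python
def mape(actual, forecast):
    """Mean absolute percentage error, skipping periods with zero actual sales."""
    if len(actual) != len(forecast):
        raise ValueError("actual and forecast must have the same length")
    errors = [abs(a - f) / a for a, f in zip(actual, forecast) if a != 0]
    if not errors:
        raise ValueError("no periods with non-zero actual sales")
    return sum(errors) / len(errors)

# Hypothetical weekly unit sales for the dairy category in one region.
actual_units = [100, 200, 400]
forecast_units = [96, 210, 400]

error = mape(actual_units, forecast_units)
meets_criterion = error < 0.05   # threshold taken from the example criterion
print(f"MAPE: {error:.1%}, meets criterion: {meets_criterion}")
```

MAPE is easy to explain to planners but behaves poorly for slow-moving items with near-zero sales, so a team might reasonably choose a different metric; the point is to agree on one before the pilot starts.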

2.3 A/B Testing AI Solutions

Retail has long used A/B testing for marketing and web design; the same concept can validate AI initiatives. A/B testing involves running two scenarios in parallel – one with the AI solution (variant A) and one without it, keeping the status quo (variant B) – and comparing results. This methodology provides a direct apples-to-apples measure of the AI’s impact. Suppose a retailer introduces an AI-driven pricing optimization tool. Rather than trust theoretical models, they designate a set of pilot stores to use AI-generated prices (Group A), while similar stores (in terms of size and customer demographics) continue with human-set prices (Group B).

Over a set period (say 4-6 weeks), they track metrics like sales revenue, units sold, and even customer feedback in both groups. If Group A significantly outperforms Group B – for example, a 5% higher sales uplift with stable margins – it’s strong evidence the AI pricing is effective. Alternatively, if there’s no difference or Group A underperforms, it signals the approach needs tweaking (or the old way was just as good). A/B testing can be applied to online scenarios too: testing an AI recommendation engine on a fraction of website traffic while the rest see the standard recommendations. Key to success is statistical rigor – ensuring the test runs long enough and the sample size is adequate to rule out randomness. For retail executives, these tests build confidence: they can literally see the numbers before deciding on a full rollout. A/B testing turns gut-feel into data-driven proof when assessing AI in customer-facing roles like marketing, pricing, or promotions.
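To make “statistical rigor” concrete, here is a minimal sketch of a permutation test, one of several ways to check whether a gap between Group A and Group B could be due to chance. The choice of test, the function name, and the store revenue figures are assumptions for illustration and are not taken from any retailer’s actual pilot.

```python
import random
from statistics import mean

def permutation_test(group_a, group_b, n_permutations=5000, seed=0):
    """Two-sided permutation test on the difference in means.

    Returns the observed difference (A minus B) and an approximate p-value:
    the share of random relabelings with a gap at least as large as observed.
    """
    rng = random.Random(seed)
    observed = mean(group_a) - mean(group_b)
    pooled = list(group_a) + list(group_b)
    n_a = len(group_a)
    extreme = 0
    for _ in range(n_permutations):
        rng.shuffle(pooled)
        diff = mean(pooled[:n_a]) - mean(pooled[n_a:])
        if abs(diff) >= abs(observed):
            extreme += 1
    # Add-one correction keeps the estimate away from an impossible p = 0.
    p_value = (extreme + 1) / (n_permutations + 1)
    return observed, p_value

# Hypothetical weekly revenue per store (in thousands) over the test period.
ai_priced_stores = [105, 110, 108, 112, 107, 111]    # Group A
human_priced_stores = [100, 102, 99, 101, 103, 98]   # Group B

observed, p_value = permutation_test(ai_priced_stores, human_priced_stores)
print(f"Observed difference: {observed:.2f}k, p-value: {p_value:.4f}")
```

A small p-value suggests the gap is unlikely to be random, but it does not correct for stores that differed before the test began, which is why matching Group A and Group B on size and demographics still matters.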

3. The Power of Simulation: Digital Twins in Retail

What if you could test an AI change in a virtual copy of your store or supply chain, without any real-world risk? Digital twins make this possible.

3.1 Creating a Virtual Store or Supply Chain

A digital twin is a high-fidelity virtual model of a physical system – in retail, this could be a store, a warehouse, or even an entire supply chain network. With the rise of IoT and simulation technology, retailers now use digital twins to experiment with AI-driven changes in a risk-free virtual environment. For example, consider a large retail store implementing an AI system for store layout optimization (to reduce customer congestion and improve product discovery). Before moving a single shelf in the real store, they can replicate their store’s layout digitally, and deploy the AI agent in that virtual store. By simulating customer traffic (perhaps using agent-based modeling or historical footfall data), they let the AI rearrange virtual shelves or change end-cap displays, then observe the outcome on simulated shopper flow and sales. This virtual prototyping can answer questions like “What if we move the dairy section closer to the entrance?” or “How would adding an AI self-checkout in this corner affect queues at peak hours?” – all without inconveniencing a single real customer. Similarly, for supply chain, a retailer might create a digital twin of their inventory system and test an AI reorder algorithm during a high-demand season scenario (like Black Friday) to see if it prevents stockouts or creates bottlenecks. These simulations help teams refine AI parameters and strategies in a make-believe world that behaves like reality.
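Commercial digital twins are far richer than anything that fits in a few lines, but the core idea of replaying a scenario against a candidate policy can be shown with a toy inventory model. The sketch below is an assumption-laden simplification: a single product, a fixed order quantity, a fixed delivery lead time, and an invented demand spike standing in for a Black Friday week.

```python
def simulate_reorder_policy(daily_demand, reorder_point, order_qty,
                            lead_time_days, initial_stock):
    """Replay a demand scenario against a simple reorder-point policy."""
    stock = initial_stock
    pipeline = {}            # arrival day -> units due to arrive that day
    stockout_days = 0
    lost_units = 0
    for day, demand in enumerate(daily_demand):
        stock += pipeline.pop(day, 0)          # receive today's delivery
        sold = min(stock, demand)
        if sold < demand:                      # demand exceeded the shelf
            stockout_days += 1
            lost_units += demand - sold
        stock -= sold
        on_order = sum(pipeline.values())
        if stock + on_order <= reorder_point:  # trigger a replenishment order
            arrival = day + lead_time_days
            pipeline[arrival] = pipeline.get(arrival, 0) + order_qty
    return {"stockout_days": stockout_days,
            "lost_units": lost_units,
            "ending_stock": stock}

# Hypothetical scenario: normal demand, a three-day spike, then normal again.
scenario = [20] * 5 + [60] * 3 + [20] * 4

cautious = simulate_reorder_policy(scenario, reorder_point=100, order_qty=100,
                                   lead_time_days=2, initial_stock=100)
lean = simulate_reorder_policy(scenario, reorder_point=40, order_qty=100,
                               lead_time_days=2, initial_stock=100)
print("cautious:", cautious)
print("lean:    ", lean)
```

Running both policies on the same scenario exposes the trade-off before any real shelf goes empty: the lean policy runs out during the spike, while the cautious one avoids stockouts at the cost of carrying more stock at the end.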

3.2 Reducing Risk with Simulation Results

The insights from digital twin simulations significantly de-risk subsequent physical pilots. By the time you go to an actual store pilot, you’ve already caught obvious flaws. For instance, a digital twin might reveal that an AI scheduling system for staff creates coverage gaps on Tuesday mornings – a quick tweak in the algorithm virtually can prevent a very real staffing issue later. Retailers like Amazon and Walmart have invested in simulation for years (Amazon’s Whole Foods acquisition famously involved simulations for inventory optimization). Even retailers without giant R&D budgets can leverage software like Unity or Nvidia’s Omniverse to build models, or work with providers that specialize in retail simulations. An added benefit: simulation can also help in communicating the vision. Showing a visual of a digital store with AI in action can help stakeholders and store staff grasp the concept and get comfortable ahead of a live pilot. Ultimately, digital twins don’t replace live pilots, but they act as a filter – many ideas or configurations can be tested in silico, and only the most promising move on to real-world trials. This means the pilots that do happen in physical stores have a higher probability of success, having been pre-vetted in the digital realm.

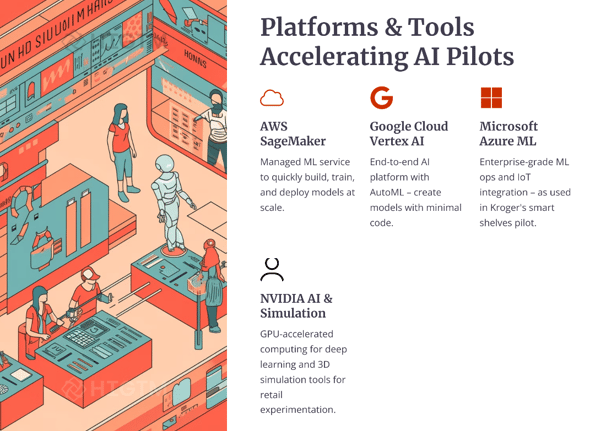

4. Leveraging Major AI Vendors and Tools

Retailers don’t have to go it alone. Tech giants and specialized vendors offer platforms to jump-start AI prototyping.

4.1 Sandbox Environments by Cloud Providers

Leading cloud vendors – Amazon Web Services (AWS), Google Cloud, Microsoft Azure – have developed robust environments for AI experimentation that retail firms can tap into. These platforms provide pre-built models, data storage, and computing power that can be scaled up or down on demand, perfect for piloting. For example, AWS’s retail suite includes tools like Amazon Personalize (for recommendations) and Lookout for Vision (for visual anomaly detection) which can be tried in a pilot with minimal setup. A mid-size retailer can use AWS to quickly build a prototype model for, say, detecting stockouts via shelf images, without investing in on-premise servers or extensive AI teams. If the pilot works, the same AWS infrastructure can scale to all stores; if it doesn’t, the retailer can shut down the cloud resources with little sunk cost.

Google Cloud’s AI Platform (Vertex AI) similarly lets retailers experiment with custom models or Google’s own AI APIs (such as Vision API or Recommendations AI) on a pay-as-you-go basis. This means a retailer can run a 3-month pilot of an AI model and only pay for what they use in those 3 months – no long-term IT commitments. The cloud also eases collaboration: data scientists, IT, and business analysts can all access the pilot environment remotely and see results in real time. By leveraging these vendor ecosystems, retailers get access to cutting-edge AI tech and security (since these platforms are enterprise-grade) from day one of their pilot, rather than reinventing the wheel.

4.2 Retail Tech Partners and Off-the-Shelf Solutions

Beyond the big clouds, there are specialized retail AI solution providers and consultancies (many likely partners of HIGTM) that offer “trial programs” or accelerators for piloting their technology. For instance, a company like IBM offers Watson-based solutions for retail (like Watson Assistant for customer service chatbots or Watson Order Optimizer). IBM often engages with clients in a phased approach: a short discovery, a pilot using the Watson solution in a limited scope, and then scale. Similarly, NVIDIA works with retailers on AI at the edge (like smart checkout cameras or loss prevention systems) by first setting up a pilot using their Jetson devices and AI models in a few stores to prove the concept. These vendors often bring deep expertise and even pre-collected data or models, which can jump-start a prototype.

Another example: SAP and Oracle have retail AI modules (for forecasting or personalization) that can be turned on in a test environment connected to a retailer’s data for a trial run. Partnering with such providers means you’re not building everything from scratch – you’re configuring and customizing an existing solution. The key for the retailer is to ensure that the partnership is structured for experimentation: clear goals, a defined pilot period, and an easy way to exit or pivot if results aren’t as expected. Many vendors even offer pilot pricing or money-back guarantees for trial projects, knowing that if the pilot succeeds, a lucrative full deployment may follow. By intelligently leveraging vendors, retailers can reduce technical risk (the tech is already proven elsewhere) and focus on validating fit for their specific context. It’s like renting a fully equipped test kitchen with a chef, rather than building a kitchen from the ground up, when trying a new recipe.

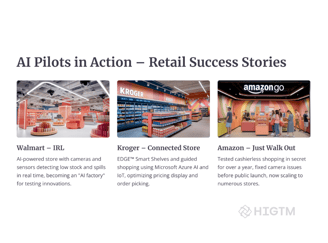

5. Real-World Case Studies: Retail AI Pilots

Nothing speaks louder than success stories. Here is how some major retail players have piloted AI – and the lessons learned.

5.1 Walmart’s Intelligent Retail Lab (IRL)

Walmart, the world’s largest retailer, recognized that implementing AI at scale in its thousands of stores would be extremely challenging – and risky – without thorough testing. To tackle this, Walmart created the Intelligent Retail Lab (IRL), a 50,000 sq. ft. live store in Levittown, NY that doubles as an AI innovation sandbox. In this “store of the future,” Walmart’s tech team installed thousands of ceiling cameras and sensors to trial an AI system that monitors stock levels, detects spills or long checkout lines, and guides employees in real time. Importantly, IRL is a functioning Neighborhood Market grocery store, open to customers – meaning the pilots happen in a real-world setting, but contained to that one location. This approach yielded huge insights. For example, the AI learned to gauge produce freshness (bananas turning brown) and prompt restocking before customers noticed. Early on, the Walmart team discovered challenges in processing the massive video data and in false alerts – issues they could solve in the lab store before trying similar tech elsewhere. Mike Hanrahan, CEO of Walmart’s IRL, described the lab as an “AI factory and learning […]”. After refining the system at IRL, Walmart has started selectively rolling out pieces of it (like the out-of-stock detection) to other stores, confident that these AI tools actually work and are employee-friendly.

Lesson: A dedicated pilot environment like IRL can de-risk cutting-edge AI by allowing a “safe fail” space and continuous iteration. Even customers were educated via signs about the test, making the innovation transparent and building public trust before broader deployment.

5.2 Kroger’s Digital Shelf Trial

Another instructive case is Kroger, one of the largest grocery chains in the U.S., which experimented with digital smart shelves. Kroger partnered with Microsoft to develop the EDGE Shelf system – basically, electronic shelf displays with digital pricing and product info, coupled with sensors. Instead of rolling this out chain-wide (which would have been a huge investment), Kroger identified two pilot stores (one in Ohio, one in Washington state) to serve as testbeds. In these stores, they replaced traditional paper price tags with digital screens on every shelf and linked them to a cloud database. This enabled dynamic price changes, automated promotions, and even emoji signals to help customers find items on their shopping list via the Kroger app. The pilot stores collected data on everything from system stability (did the screens update reliably?) to customer reactions (were shoppers confused or delighted?). The results were promising – for instance, Kroger could change prices in seconds across the pilot store and found improved labor efficiency (staff spent less time on price updates). Customers appreciated the personalized icons guiding them to products. Most importantly, the technology proved stable in a real store environment. Armed with this evidence, Kroger expanded the test to end-caps in 100+ stores, where the risk was even lower (only some aisles had the tech). This gradual rollout let them refine the software and hardware in stages. Over time, Kroger saw not only operational benefits but also an uplift in sales for promoted items highlighted by the digital shelves.

Lesson: By piloting in a couple of stores and then scaling up slowly, Kroger avoided a massive upfront investment and ensured the concept truly worked before committing chain-wide. They also involved store employees closely in the pilot, training them and getting feedback – which smoothed adoption when the tech later spread to more locations.

5.3 Starbucks’ AI Barista Trials

Starbucks, though primarily a QSR (quick service restaurant), also provides a great example relevant to retail customer experience. Starbucks wanted to introduce an AI voice assistant at drive-thrus to take orders (a “digital barista”). Instead of immediately installing this in thousands of drive-thrus (which could risk customer satisfaction if it failed), they approached it methodically. Starbucks set up the concept in a controlled lab known as the Tryer Center – essentially a mock store environment at headquarters – to prototype the voice AI. They ran scenarios with employees and testers, using simulated drive-thru setups to see how well the AI understood orders, how quickly it responded, and what the error rate was. Early trials identified a big issue: the AI struggled with complex custom drink orders and noise interference. Catching this in the lab saved Starbucks what they estimated to be 6 months of potential trouble in live stores. After improving the system, Starbucks then moved to a pilot in a small number of actual stores in select markets.

They quietly tested the AI barista during off-peak hours, gathering real customer interactions data. Customers were informed they were interacting with a new system, and Starbucks had a human on standby to take over if needed – ensuring service didn’t suffer. Over weeks, the AI improved and customer satisfaction remained intact in these pilot locations. Only with positive pilot results did Starbucks consider expanding the program.

Lesson: Even customer-facing AI can be piloted in low-risk ways – starting with employees or friendly testers in a lab, then limited real-world trials. This approach protected the Starbucks brand experience while still allowing them to innovate with AI.

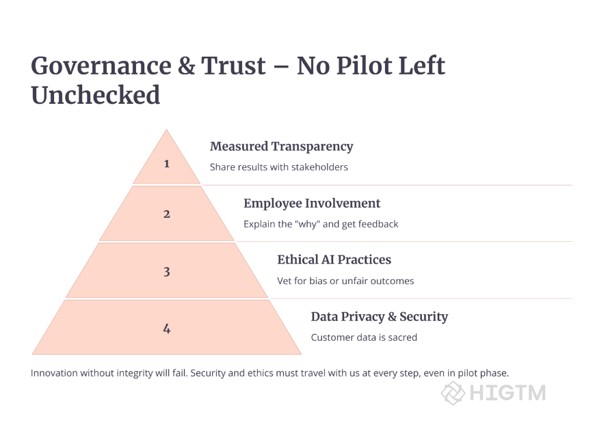

6. Best Practices for AI Piloting Success

Having the right mindset and process can make the difference between a pilot that’s a springboard and one that becomes a dead-end.

6.1 Define Success Criteria & KPIs Upfront

One of the golden rules of effective prototyping is knowing what success looks like before you start. For any retail AI pilot, clear metrics and KPIs (Key Performance Indicators) should be agreed upon at the outset. Are you aiming to reduce checkout time by 20% with an AI POS system? Increase online conversion by 0.5% with a recommendation engine? Decrease inventory holding costs by $X with better demand forecasts? Pick metrics that align with business goals and that are measurable within the scope of the pilot. It’s also important to set thresholds for these metrics to decide next steps.

For instance, if the pilot achieves at least 15% of the target (in a short period) it might merit extension or further investment; if it falls below 5%, perhaps it’s time to reconsider the approach. By quantifying success (and failure) in advance, the team avoids endless tinkering with no clear outcome. It also prevents the common issue of moving goalposts – where stakeholders might be tempted to declare a middling pilot a “success” without original criteria, or conversely kill a pilot that was actually on track if viewed with the right lens.
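Writing the thresholds down as an explicit rule before the pilot starts is one way to keep the goalposts fixed. The sketch below is a hypothetical decision helper; the 15% and 5% cut-offs come from the example above, while the function name, the three outcome labels, and the sample numbers are assumptions.

```python
def pilot_decision(achieved, target, extend_at=0.15, reconsider_below=0.05):
    """Classify a pilot by the share of its target metric achieved so far."""
    if target <= 0:
        raise ValueError("target must be positive")
    progress = achieved / target
    if progress >= extend_at:
        return "extend"        # enough early signal to justify more investment
    if progress < reconsider_below:
        return "reconsider"    # too little movement; rethink the approach
    return "review"            # in between; needs a judgment call

# Hypothetical pilot: the target is a 20-point cut in average checkout time.
target_reduction = 20.0
for achieved_reduction in (4.0, 2.0, 0.5):
    decision = pilot_decision(achieved_reduction, target_reduction)
    print(f"achieved {achieved_reduction} of {target_reduction}: {decision}")
```

The middle “review” band is a design choice rather than something the thresholds imply; a team could instead pre-commit to a default action for in-between results.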

In addition to outcome metrics, define qualitative goals too: e.g., “store staff will be able to use the new system with minimal training” or “at least 30 customers try the new feature and give feedback”. These ensure you capture insights beyond the numbers, which in retail (a very human business) can be just as crucial.

6.2 Engage Frontline Employees and Customers

An often overlooked factor in AI pilots is the human element – the employees who operate the system and the customers who interact with it. The best pilots actively involve these groups and treat their feedback as a key measure of success. For example, if piloting an AI shelf management tool in stores, involve the store associates early: explain the purpose of the pilot, how it will make their jobs easier (e.g., “the AI will alert you when to restock, so you don’t have to constantly patrol aisles”), and solicit their input on the workflow. Similarly, in a customer-facing pilot (like an AI fitting room assistant in a clothing store), you might have associates observe or even interview a few customers after they try it out: Was it helpful? Frustrating? Did it feel natural? These insights can reveal usability issues or necessary feature improvements that pure data might miss.

Moreover, when employees feel heard and part of the innovation process, they become champions of the new system rather than resistors. Many successful pilots designate a few pilot champions – staff members trained to be super-users of the AI solution who can help train others and spread positive word-of-mouth if the pilot goes well.

On the customer side, consider soft launches (notifying customers that a new feature is beta) or offering small incentives for participating in a pilot program (like a discount for answering a survey about the experience). This manages expectations and makes customers more forgiving of any hiccups. In summary, treat a pilot not just as a tech trial, but a people trial – an opportunity to fine-tune the human-AI interaction and build broader support for the initiative.

6.3 Iterate Based on Pilot Learnings

The “iterative” in iterative approach means that running the pilot is not the end – it’s the midpoint in a continuous improvement loop. After or even during a pilot, teams should gather all forms of feedback (quantitative metrics, qualitative comments, observations of usage patterns) and hold a retrospective analysis. What worked well? What didn’t? Were there surprising behaviors? For instance, maybe your AI recommendation engine pilot found that customers indeed bought more, but it led to a slight increase in returns – digging in, you learn the AI sometimes recommended mis-sized items. This insight is gold: you can tweak the recommendation algorithm to factor in fit or true-size data and run a follow-up experiment.

Some pilots might need a Phase 2: a revised prototype launched after initial adjustments. It’s crucial to document these iterations – essentially building a knowledge base of what was tried and learned. Many organizations adopt an agile approach, treating the pilot as Sprint 1, then planning Sprint 2 for improvements, and so on.

Each cycle de-risks the project further and hones the solution. It’s also advisable to revisit the market context between iterations; retail is dynamic, and sometimes pilot results can be affected by external factors (seasonality, competitor moves) which should be accounted for in the next iteration. The ultimate aim of iterating is either to reach a configuration that meets success criteria (and is ready to scale) or to confidently determine that the concept should be shelved. Both outcomes are wins compared to blindly charging ahead.

One retail example: an online retailer piloting an AI image search (customers upload a photo to find similar products) found low usage. Instead of scrapping it outright, they iterated by adding a fun social sharing component, which increased engagement in a second pilot. The improved feature then became a hit on their app, illustrating that iteration can turn an initial flop into a future star.

6.4 Know When to Scale – or Fail Fast

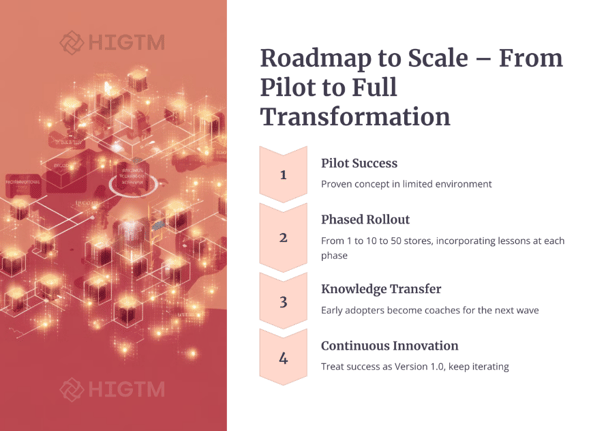

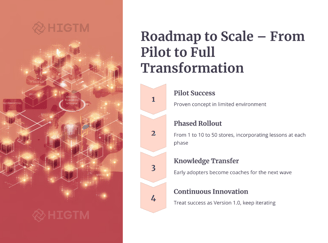

Not every pilot will succeed, and that’s okay. The beauty of a well-run pilot is that even a “failure” is a controlled failure with valuable learnings. However, it’s important for retail decision-makers to make the call: scale or abort, at the right time. Signs a pilot is ready to scale: it met or exceeded the success metrics, users (employees/customers) have largely positive feedback, and the team feels they have ironed out major kinks through one or two iteration cycles. In this case, the next step is developing a scaling plan – which might involve hardening the solution (for example, refactoring a quick prototype into a production-grade system), investing in infrastructure, training the broader workforce, and scheduling a phased rollout (perhaps region by region or product line by product line) to manage the growth.

Even in scaling, one can be iterative – expanding in waves rather than all at once preserves some flexibility to adjust if new issues emerge at larger scale. On the other hand, signs a pilot should be stopped or significantly rethought: the core metric is far off target and multiple tweaks haven’t moved the needle, users actively dislike or avoid the new system, or external changes have rendered the idea less relevant (imagine piloting an in-store tech that suddenly becomes less useful post-pandemic as shopping behavior shifts).

It takes courage, but ending a pilot early can save considerable resources – this is the essence of “fail fast.” Celebrate the attempt, analyze why it didn’t work (perhaps the tech isn’t mature enough yet, or the problem was misidentified), and share those lessons. Many times, a “failure” pilot informs a future successful one. For example, a retailer might pilot a robot greeter that fails to charm customers, but the data and feedback from it guide them to develop a more useful AI assistant for stock checks that does succeed later. The key is not to let a pilot linger in limbo. Decide and act – either scale it or move on – so the organization keeps its innovation momentum and resource focus.

7. Conclusion: Iterate to Innovate, the Retail Way

In an industry as competitive and fast-moving as retail, the way to stay ahead is not by making the single perfect bet, but by evolving through iterations. Prototyping and piloting AI initiatives provide a practical path to harness cutting-edge technology while safeguarding your business from the pitfalls of unproven ideas. The iterative approach turns potential risks into manageable experiments. Each experiment – whether a triumph like Kroger’s smart shelves or a near-miss that required a pivot – becomes a stepping stone toward a smarter, more customer-centric retail operation.

For retail leaders, adopting this mindset requires a cultural shift: encouraging teams to test ideas, accepting small failures, and valuing learning as much as immediate ROI. But the payoff is immense. When it does come time to execute a major AI rollout, you do so backed by data, proven solutions, and an organization that’s already adapted to the change in micro-dose form. The result? Maximized ROI and minimized disruption – a true win-win.

As you consider your next AI initiative – be it a personalized marketing engine, an autonomous store, or an intelligent supply chain upgrade – ask, “How can we pilot this first?” Start with that one-store trial or that one-month proof-of-concept. Engage your team in a creative prototyping workshop. Partner with experts or vendors to jump-start if needed. And set those compasses on your objectives, one incremental journey at a time.

In retail, innovation is not a one-time project, but an ongoing journey. The iterative prototyping approach ensures that journey is one of continuous improvement, guided by real-world feedback at every turn. By the time you reach your destination – a successful AI-powered solution at scale – you’ll have confidence it’s the right path because you’ve walked every step of it yourself. Your roadmap to AI success starts with that first small pilot – so take that step, and let the learning begin.

Ready to take the first step in your retail AI journey? Consider scheduling a strategy session or pilot consultation with experts who have done it before. At HIGTM.com, we specialize in guiding retail businesses through structured AI prototyping and piloting processes. With the right partner and approach, your next big idea could start as a small experiment that transforms your business.

Iterate, innovate, and lead the future of retail!


© 2025 HIGTM. All rights reserved.