50. Quality Assurance in AI: Testing Models for Reliability in Retail

Artificial intelligence is rapidly becoming the trusted assistant in retail – making product recommendations, forecasting demand, personalizing marketing, and more. But what happens when that “trusted” assistant makes a mistake? Imagine an AI system mislabeling a high-end handbag as a $5 item, or a chatbot that accidentally offends a loyal customer due to a biased response. These scenarios aren’t sci-fi; they’re real risks when AI models aren’t rigorously tested. As retail C-suite executives at small and mid-sized businesses (SMBs), you might be investing in AI to gain an edge, but without Quality Assurance (QA), that edge can cut the wrong way. In this article, we’ll explore how to ensure your AI models are accurate, reliable, and fair – in other words, how to trust the decisions that your algorithms are making on your behalf. We’ll discuss why QA in AI is vital for retail, the risks of skipping this step, and practical ways to test and validate AI models before they impact your business and customers. The goal is to empower you with a strategic approach to AI quality that protects your brand while unlocking AI’s potential.

Q1: FOUNDATIONS OF AI IN SME MANAGEMENT - CHAPTER 2 (DAYS 32–59): DATA & TECH READINESS

Gary Stoyanov PhD

2/19/2025 · 14 min read

1. The AI Opportunity in Retail – and the Hidden Risks

Retail is embracing AI like never before. In fact, about 87% of retailers have deployed AI in at least one area of their business, whether it’s an inventory management system or a customer-facing app. Even smaller businesses are jumping in; one study noted 75% of SMBs are experimenting with AI to enhance their operations. The promise is real – AI can boost efficiency, uncover insights, and personalize the shopping experience at scale.

However, with great power comes great responsibility (to borrow a phrase). When you let an AI model make decisions – be it setting prices, allocating stock, or recommending products – you are handing over a bit of control. If that AI is flawed or biased, the impact is immediate and widespread. Unlike a human employee, who might err occasionally, an AI system can replicate mistakes at lightning speed and massive scale. As IBM researchers famously pointed out, biased data or algorithms can lead to AI deploying biases at scale. In essence, a small bias in your training data can become a large bias affecting thousands of customers per day once the AI is live.

Regulators and consumers are aware of these pitfalls. The U.S. Federal Trade Commission (FTC) has warned that AI models are susceptible to bias, inaccuracies, “hallucinations,” and bad performance.

If big tech companies are struggling with these issues, it’s a signal that no company can afford to be complacent. For retail SMBs, a major AI failure could be devastating – think public relations nightmares, loss of customer trust, or even legal action. In the age of social media, one snafu can go viral. Retail executives need to understand that the hidden risks of AI (if untested) are very real: from recommending the wrong product at the wrong time to inadvertently discriminating against a segment of your customers.

2. Why Quality Assurance in AI is Critical for Retail

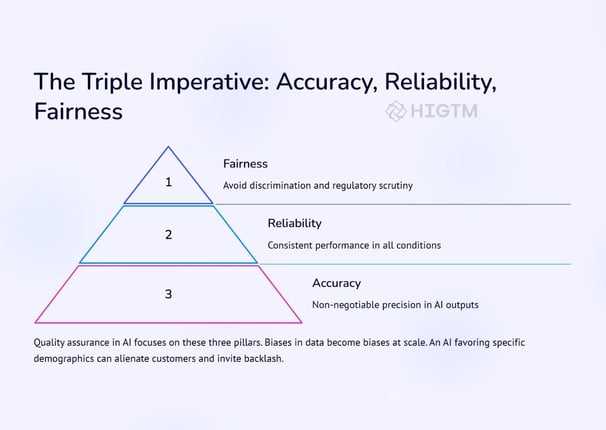

Quality Assurance is a familiar concept – we use QA processes to check products for defects or software for bugs. AI models need the same scrutiny, arguably even more so because they learn and change over time. Here’s why QA is especially critical for AI in the retail sector:

Protecting Brand Trust: Retail is built on trust and relationships. Customers expect that pricing is fair, recommendations are relevant, and interactions are respectful. If an AI glitch causes a wildly incorrect price (imagine an expensive item mistakenly listed at $1) or an offensive product suggestion, customers lose faith quickly. Retailers are keenly aware of this; they know a single AI misstep can invite not only customer ire but also regulatory attention. By thoroughly testing AI, you prevent rogue outcomes that could erode your brand reputation overnight.

Ensuring Accuracy and Reliability: An AI model is only as good as its results. A demand forecasting AI that’s accurate 9 times out of 10 can still cause havoc the one time it’s wrong, for example by overestimating demand for a product by 10x. Reliability means consistent performance. Deloitte describes robust AI systems as those that produce consistent and reliable outputs, even failing in expected ways when they must. Through QA, you check that your model performs well not just on average, but across a variety of scenarios – including edge cases. This is crucial in retail, where conditions can change quickly (sudden trends, weather impacts, etc.). QA gives you confidence that the AI won’t crumble under atypical conditions.

Mitigating Bias and Ensuring Fairness: AI models can inadvertently pick up societal biases present in historical data. In retail, this might manifest as an algorithm that recommends higher-end products predominantly to men, or offers discounts to one demographic more than another, simply because the training data reflected past marketing focus. For example, an AI might “learn” to favor young urban males in customer targeting if past campaigns overserved that group. This isn’t just a moral issue – it’s a business one. A biased AI can alienate important customer segments. Surveys show consumers are paying attention: 86% of consumers believe retailers should make their AI more diverse, equitable, and inclusive. If your AI isn’t fair, customers will notice – and they’ll vote with their wallets. QA processes, especially bias testing, can catch these issues early. By testing your model’s outcomes across different groups and scenarios, you ensure it meets modern standards of fairness and inclusive customer experience.

Avoiding Costly Mistakes: AI errors can directly hit the bottom line. Think of a pricing algorithm error that misprices products (either too low, causing revenue loss, or too high, driving customers away), or an inventory AI that fails to order in-demand stock, resulting in empty shelves. One striking real-world example is Zillow’s AI-driven home-buying fiasco, where an algorithm’s mistakes in pricing houses led to hundreds of millions in losses and the closure of an entire business unit. In retail, while the scale might be different, the principle stands – mistakes multiply. Quality assurance acts as a safeguard, catching the errors in a sandbox before they turn into costly blunders in the real world.

3. Key Areas to Test for AI Model Quality

Not all AI failures look the same. To comprehensively assure quality, retail executives should focus on several key areas when testing AI models:

Accuracy of Predictions or Decisions: This is fundamental. If your AI forecasts sales, how close are those forecasts to reality? If it’s a recommendation engine, how often do customers actually like or click the recommendations? Use metrics appropriate to the task (e.g., mean absolute error for numeric predictions, click-through rate or conversion rate for recommendations) to quantify accuracy. During QA, evaluate the model on a validation dataset (data it hasn’t seen before) that reflects real-world data. Check overall accuracy and also where it falters – are there certain products or scenarios where errors spike? Those need attention, either through model tuning or additional training data.
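To make the accuracy check concrete, here is a minimal Python sketch of computing mean absolute error overall and per product category, so you can see where errors spike. The validation rows, category names, and function names are hypothetical illustrations, not data or tooling from any particular system.

```python
from collections import defaultdict

def mean_absolute_error(actual, predicted):
    """Average absolute gap between what happened and what was forecast."""
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)

def mae_by_segment(rows):
    """Group (segment, actual, predicted) rows and compute MAE per segment."""
    grouped = defaultdict(lambda: ([], []))
    for segment, actual, predicted in rows:
        grouped[segment][0].append(actual)
        grouped[segment][1].append(predicted)
    return {seg: mean_absolute_error(a, p) for seg, (a, p) in grouped.items()}

# Hypothetical held-out rows: (category, actual units sold, forecast units)
validation_rows = [
    ("apparel", 120, 110),
    ("apparel", 80, 90),
    ("electronics", 40, 75),
    ("electronics", 30, 10),
]

overall_mae = mean_absolute_error(
    [row[1] for row in validation_rows],
    [row[2] for row in validation_rows],
)
segment_mae = mae_by_segment(validation_rows)
worst_segment = max(segment_mae, key=segment_mae.get)

print(f"Overall MAE: {overall_mae}")
print(f"MAE by category: {segment_mae}")
print(f"Needs attention first: {worst_segment}")
```

In this made-up sample the overall number looks tolerable, but the per-category view shows electronics forecasts missing by far more than apparel forecasts, which is exactly the kind of gap an average hides.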

Consistency and Reliability: An AI model should perform reliably over time. Test the model on data from different time periods (seasonality checks) and simulate varying conditions. For instance, stress-test a pricing AI with a sudden cost change or a flood of demand, similar to a holiday rush. The model’s output should remain sensible and stable under stress. Also, run the model multiple times (with different random seeds or slight data perturbations) to ensure it’s not overly sensitive to minor fluctuations. Edge case testing is part of this – intentionally input extreme but plausible scenarios (e.g., a customer buys an unusually large quantity of an item, or an out-of-season purchase) to see if the AI still behaves logically.
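One way to sketch the perturbation and edge-case checks described above is shown below. The `suggest_price` function is a hypothetical stand-in for your real pricing model, and the 1% noise level and 5% stability limit are assumptions you would tune to your own business.

```python
import random

def suggest_price(unit_cost, demand_index):
    """Hypothetical stand-in for a pricing model: cost plus a demand-based markup."""
    clamped_demand = min(max(demand_index, 0.0), 2.0)  # guard against extreme inputs
    markup = 0.30 + 0.10 * clamped_demand
    return round(unit_cost * (1 + markup), 2)

def max_relative_change(model, unit_cost, demand_index, noise=0.01, trials=200, seed=0):
    """Largest relative price change seen when inputs are jittered by +/- `noise`."""
    rng = random.Random(seed)  # fixed seed keeps the test reproducible
    baseline = model(unit_cost, demand_index)
    worst = 0.0
    for _ in range(trials):
        jittered_cost = unit_cost * (1 + rng.uniform(-noise, noise))
        jittered_demand = demand_index * (1 + rng.uniform(-noise, noise))
        price = model(jittered_cost, jittered_demand)
        worst = max(worst, abs(price - baseline) / baseline)
    return worst

# Stability check: tiny input changes should not swing the price wildly.
sensitivity = max_relative_change(suggest_price, unit_cost=20.0, demand_index=1.0)
stable = sensitivity < 0.05  # assumed limit: under 5% movement for 1% input noise

# Edge case: an extreme holiday-rush demand signal should still give a sane price.
holiday_price = suggest_price(unit_cost=20.0, demand_index=50.0)

print(f"Worst relative change under noise: {sensitivity:.4f} (stable={stable})")
print(f"Price under extreme demand: {holiday_price}")
```

The same harness can be pointed at a real model by swapping in its prediction function, as long as it accepts the same inputs.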

Bias and Fairness Testing: As discussed, test the model for unbiased behavior. This means slicing the output by demographic or segment: do customers from different age groups, genders, ethnic backgrounds, or regions get significantly different outcomes from the model? For example, does the AI’s product recommendation quality drop for users in rural areas versus urban areas? If you have a loyalty program, does the AI favor new customers over returning ones (or vice versa) in a way that’s not intended? There are tools and techniques (such as bias detection toolkits) that can quantify bias – for instance, measuring difference in prediction accuracy across groups. If you find discrepancies, you may need to retrain with more diverse data or apply algorithmic fairness techniques. Ensuring fairness isn’t just ethical; it keeps your customer base broad and engaged.
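As a simple illustration of slicing results by segment, the sketch below measures prediction accuracy per group and flags a gap that exceeds a chosen tolerance. The records, group labels, and the 10-point tolerance are hypothetical; dedicated bias toolkits offer many more metrics than this single comparison.

```python
from collections import defaultdict

def accuracy_by_group(records):
    """records: iterable of (group, predicted_label, actual_label)."""
    hits = defaultdict(int)
    totals = defaultdict(int)
    for group, predicted, actual in records:
        totals[group] += 1
        hits[group] += int(predicted == actual)
    return {group: hits[group] / totals[group] for group in totals}

def accuracy_gap(per_group):
    """Difference between the best-served and worst-served group."""
    return max(per_group.values()) - min(per_group.values())

# Hypothetical test results: did the model correctly predict "buy" vs "skip"?
records = [
    ("urban", "buy", "buy"),
    ("urban", "buy", "skip"),
    ("urban", "skip", "skip"),
    ("urban", "buy", "buy"),
    ("rural", "buy", "skip"),
    ("rural", "skip", "skip"),
    ("rural", "buy", "buy"),
    ("rural", "skip", "buy"),
]

MAX_ACCEPTABLE_GAP = 0.10  # assumed tolerance; set this with your own stakeholders

per_group = accuracy_by_group(records)
gap = accuracy_gap(per_group)
needs_review = gap > MAX_ACCEPTABLE_GAP

print(f"Accuracy by group: {per_group}")
print(f"Gap: {gap:.2f}, needs review: {needs_review}")
```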

Security and Robustness: While not unique to retail, it’s worth testing if your AI can be tricked or exploited. Could an input be crafted to confuse the model? In online retail, this might mean someone feeding a weird string of text to a search AI to get unintended results, or spamming a recommendation system with bot accounts to influence it. Adversarial testing (where you intentionally try to break the model or make it behave badly) can reveal vulnerabilities. Additionally, consider data drift – if the data the model sees in production gradually shifts (maybe your customer preferences change post-pandemic, or supply chain dynamics evolve), the model’s performance might degrade. Set up monitoring to catch when the model’s accuracy starts to slip over time, which is a cue that it needs a refresh or retraining (this is post-deployment QA, effectively).
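For the data drift point, one commonly used check is the Population Stability Index (PSI), which compares how an input was distributed at training time with how it looks in recent production data. The sketch below is a minimal version under stated assumptions: the basket values and bin edges are invented, and the 0.25 cutoff is a widely cited rule of thumb rather than a hard standard.

```python
import math

def population_stability_index(expected, actual, edges, eps=1e-6):
    """PSI between two samples, using shared bin edges.

    `eps` replaces empty-bin shares so the logarithm stays defined.
    """
    def bin_shares(values):
        counts = [0] * (len(edges) + 1)
        for value in values:
            # Index of the bin = number of edges the value has reached or passed.
            counts[sum(value >= edge for edge in edges)] += 1
        return [max(count / len(values), eps) for count in counts]

    expected_shares = bin_shares(expected)
    actual_shares = bin_shares(actual)
    return sum(
        (a - e) * math.log(a / e)
        for a, e in zip(actual_shares, expected_shares)
    )

# Hypothetical basket values (in dollars) at training time vs. last month.
training_baskets = [12, 18, 25, 31, 44, 52, 60, 75]
recent_baskets = [35, 48, 55, 62, 70, 81, 90, 95]
bin_edges = [20, 40, 60]

drift_score = population_stability_index(training_baskets, recent_baskets, bin_edges)
drift_detected = drift_score > 0.25  # rule-of-thumb cutoff, treated here as an assumption

print(f"PSI: {drift_score:.3f}, drift detected: {drift_detected}")
```

A high score does not say the model is wrong, only that it is now seeing customers or baskets unlike those it learned from, which is a reasonable trigger for re-validation.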

Compliance and Ethical Alignment: Ensure the AI’s decisions align with legal and ethical standards. For instance, if your model uses customer data, make sure it’s respecting privacy regulations (like GDPR or CCPA) – e.g., no unfair use of sensitive attributes. Also, check that any business rules you have are correctly enforced by the AI. If corporate policy says “never recommend alcohol to underage customers” or “don’t use personal credit score in pricing,” verify the model isn’t indirectly violating those rules. This often involves having a human in the loop to review how the AI is making decisions (if it’s somewhat interpretable) or at least the outcomes in sensitive cases. Quality assurance should flag any output that could put the company in a legally or reputationally risky position.
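Business rules like the alcohol example lend themselves to a simple automated audit of model output. The following sketch assumes hypothetical customer and product records and an assumed minimum age of 21 (the legal age varies by jurisdiction); it only shows the shape of such a check.

```python
MINIMUM_ALCOHOL_AGE = 21  # assumption: adjust to the law where you operate

def rule_violations(recommendations, customers, products):
    """Return (customer_id, product_id) pairs that break the alcohol rule."""
    violations = []
    for customer_id, product_id in recommendations:
        is_alcohol = products[product_id]["category"] == "alcohol"
        is_underage = customers[customer_id]["age"] < MINIMUM_ALCOHOL_AGE
        if is_alcohol and is_underage:
            violations.append((customer_id, product_id))
    return violations

# Hypothetical records and one batch of model recommendations.
customers = {"c1": {"age": 34}, "c2": {"age": 17}}
products = {
    "p-wine": {"category": "alcohol"},
    "p-tea": {"category": "grocery"},
}
recommendations = [("c1", "p-wine"), ("c2", "p-tea"), ("c2", "p-wine")]

violations = rule_violations(recommendations, customers, products)
print(f"Violations to escalate: {violations}")
```

Any non-empty result would go to a human reviewer before the model's recommendations reach customers.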

4. Best Practices for Testing AI Models (How to Do QA Right)

Now that we know what to test, let’s talk about how to go about it effectively. Implementing AI QA in an SMB retail environment can be done step-by-step:

Establish a Validation Team or Process: You don’t need a huge department, but assign someone (or a small team) the role of AI auditor. This could be part of your data science team or even an external consultant with expertise in AI testing. The key is to have clear ownership of the QA process, separate from those who built the model (fresh eyes help spot issues).

Use Realistic Test Data: When testing, use data that mirrors your actual business environment. That means if you’re a fashion retailer, your test scenarios should include seasonal shifts (winter vs summer products), various customer profiles, and even anomalies like a surge in demand due to a trending TikTok video. The more your test data reflects reality, the more confident you can be in the results. Also include edge cases – for example, test an empty shopping cart, a cart with 100 items, a return scenario, etc., depending on what the AI handles.

Simulate the Customer Experience: Put yourself in the customer’s shoes. One effective practice is to run simulations or role-plays. If it’s a chatbot AI, have staff interact with it as if they were customers (including angry customers, curious customers, those with complex questions). If it’s a recommendation engine, simulate a user session and see what gets recommended for various user profiles. This qualitative testing can surface issues that pure data testing might miss – like a response that is technically correct but tone-deaf or confusing to a human. Remember, at the end of the day, it's about how the AI’s behavior feels to the customer.

Automate Where Possible: Just like software testing has unit tests and automated test suites, AI models can have automated tests too. You can script a battery of tests that run whenever the model is updated: e.g., feed a standard set of inputs and check that the outputs haven’t strayed from expected ranges. For instance, if your pricing model suddenly suggests a 90% discount outside of a promo period, the test should flag it. Automation ensures that as your AI evolves, you maintain consistent quality checks without manual effort each time.
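The discount example above can be expressed as a small automated check that runs every time the pricing model is updated. This is a sketch under assumptions: the field names, SKUs, and the 40% ceiling for non-promotional discounts are hypothetical.

```python
def flag_suspicious_discounts(price_suggestions, max_discount=0.40):
    """Return SKUs whose suggested discount exceeds the limit outside a promo."""
    flagged = []
    for item in price_suggestions:
        discount = 1 - item["suggested_price"] / item["list_price"]
        if not item["promo_active"] and discount > max_discount:
            flagged.append(item["sku"])
    return flagged

# Hypothetical model output for a standard set of test inputs.
price_suggestions = [
    {"sku": "A-100", "list_price": 100.0, "suggested_price": 95.0, "promo_active": False},
    {"sku": "B-200", "list_price": 200.0, "suggested_price": 20.0, "promo_active": False},
    {"sku": "C-300", "list_price": 50.0, "suggested_price": 10.0, "promo_active": True},
]

flagged_skus = flag_suspicious_discounts(price_suggestions)
print(f"Flagged for review: {flagged_skus}")
```

Here only the 90% markdown outside a promotion is flagged; the deep discount on the third item passes because a promotion is active.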

Incorporate Human Oversight and Review: Especially for fairness and ethical considerations, human judgment is crucial. Set up a review of AI decisions at regular intervals. For example, review a random sample of recommendations or transactions that the AI handled each week. Are they reasonable? Do they align with your brand values? This is similar to quality spot-checks in customer service. Humans can catch subtleties like “this product recommendation technically fits the user’s purchase history, but it’s a sensitive item that might offend them.” Such nuances might be lost on the AI without guidance.
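Drawing the weekly review sample can itself be scripted so that reviewers see an unbiased slice rather than hand-picked cases. The sketch below uses Python's standard `random` module; the decision identifiers and sample size are hypothetical.

```python
import random

def sample_for_review(decisions, sample_size, seed=None):
    """Pick a random subset of AI decisions for a human reviewer.

    Passing a seed makes the draw reproducible, which helps with audits.
    """
    rng = random.Random(seed)
    return rng.sample(decisions, min(sample_size, len(decisions)))

# Hypothetical identifiers for one week of AI-made recommendations.
weekly_decisions = [f"recommendation-{i}" for i in range(1, 501)]

review_batch = sample_for_review(weekly_decisions, sample_size=25, seed=7)
print(f"{len(review_batch)} decisions queued for human review")
```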

Continuous Monitoring Post-Deployment: QA isn’t a one-and-done deal. Once the AI is live, establish KPIs to monitor its performance. If it’s customer-facing, track customer satisfaction scores or complaint rates. If it’s back-end, track error rates or the business metric it’s supposed to move (like inventory turnover rate). Sudden changes in these indicators can signal an issue. Some companies implement an automated alert if the AI’s outputs go out of a certain bound. Additionally, gather feedback from employees and customers. They are the first to notice if “the AI is acting weird.” A feedback loop helps you catch issues that initial testing might not have foreseen.
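An out-of-bounds alert of the kind mentioned above can start as a few lines of code. In this sketch the KPI names and their acceptable ranges are assumptions for illustration; in practice the alert text would be sent to whoever owns the model.

```python
# Hypothetical acceptable ranges for each monitored KPI: (low, high).
KPI_BOUNDS = {
    "complaint_rate": (0.0, 0.02),
    "inventory_turnover": (4.0, 12.0),
}

def kpi_alerts(snapshot, bounds=KPI_BOUNDS):
    """Compare the latest KPI snapshot with its bounds and describe any breach."""
    alerts = []
    for name, (low, high) in bounds.items():
        value = snapshot.get(name)
        if value is None:
            alerts.append(f"{name}: missing")
        elif not low <= value <= high:
            alerts.append(f"{name}: {value} outside [{low}, {high}]")
    return alerts

# Hypothetical readings from this week's dashboard.
this_week = {"complaint_rate": 0.035, "inventory_turnover": 6.1}

alerts = kpi_alerts(this_week)
for alert in alerts:
    print(f"ALERT {alert}")
```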

Document and Audit: Keep records of all tests performed, issues found, and fixes made. This documentation not only helps improve the model over time but also is useful if you need to demonstrate your AI governance to regulators or partners. Showing that you have a robust QA process can increase trust with stakeholders. It’s part of good AI governance – treating the model not as a black box, but as a product that goes through quality control and improvement cycles.

5. Reducing Bias – A Closer Look at Fairness Testing

Because fairness is so important, let’s delve a bit deeper into how you can reduce bias in AI models. Start at the source: the training data. If your data on customer behavior is skewed (perhaps most data comes from a single store or one region), the model will mirror those skews. Strive to train on data that’s as diverse and representative as possible of your overall customer base. This might mean augmenting data from underrepresented groups or time periods. For instance, if you’re implementing an AI for holiday sales forecasting, ensure you have data from multiple years of holiday seasons, not just one atypical year.

Next, use bias detection tools or even simple analysis on model outcomes. There are open-source toolkits (like IBM’s AI Fairness 360 or Google’s What-If Tool) that help test a model for bias. If you find that the model is less accurate for a certain group, consider techniques like re-weighting the training data or adding fairness constraints in the model training process. In practice, a straightforward step could be: during testing, you notice the recommendation AI suggests fewer women’s products to female users than to male users (maybe because historically, male users bought more, skewing its logic). To fix this, you might retrain the model giving more weight to female user data or explicitly instruct the model to balance recommendations.
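Re-weighting can be as simple as giving each training example a weight inversely proportional to how common its group is, so that every group contributes equally in total. The sketch below uses a hypothetical group column; the formula is the same inverse-frequency heuristic that scikit-learn applies for `class_weight="balanced"`, and most training libraries accept such weights as per-sample weights.

```python
from collections import Counter

def balancing_weights(groups):
    """Weight each example by total / (number_of_groups * size_of_its_group)."""
    counts = Counter(groups)
    total = len(groups)
    n_groups = len(counts)
    return [total / (n_groups * counts[group]) for group in groups]

# Hypothetical training set where one group is heavily overrepresented.
training_groups = ["male"] * 6 + ["female"] * 2

weights = balancing_weights(training_groups)

# After weighting, each group carries the same total influence.
weight_per_group = Counter()
for group, weight in zip(training_groups, weights):
    weight_per_group[group] += weight

print(f"Per-example weights: {sorted(set(weights))}")
print(f"Total weight per group: {dict(weight_per_group)}")
```

Re-weighting changes how much the model listens to each group; it does not add new information, so it works best alongside collecting more representative data.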

Human review also plays a key role in fairness. Sometimes, technical metrics alone won’t capture an unfair outcome. Assemble a diverse team to review AI decisions. Different perspectives can highlight issues others might miss. One person might notice a cultural insensitivity in a chatbot’s phrasing, while another spots that the AI isn’t recommending products in certain skin-tone ranges to certain users. These insights are invaluable. The QA process should involve these human checks before the AI is fully unleashed.

Lastly, commit to continuous education of the model. If biases are discovered, correct them and then monitor. Bias can creep back in as customer behavior changes or new products/data are introduced. Regularly scheduled bias audits (say, quarterly) can help ensure the AI stays on the fair path. In a way, think of it as tuning an instrument – over time, you need to recalibrate to keep the model in harmony with fairness standards.

6. Business Benefits of Rigorous AI Testing

Implementing thorough QA for AI requires effort – so what’s the payoff for your business? There are several clear benefits:

Confidence in Deployment: When you’ve tested an AI model every which way, you can launch it with confidence. This means faster adoption of AI-driven initiatives because the C-suite and stakeholders trust the model. Instead of second-guessing whether the AI will work, you know it will (and if it acts up, you have mechanisms to catch it). This confidence can accelerate innovation – you’ll be more willing to try AI in new areas of the business once you have a proven QA process.

Improved Performance and ROI: QA isn’t just about preventing disasters; it also uncovers opportunities to improve the model. During testing, you might find, say, the AI’s recommendation accuracy for electronics is low – which prompts you to add more training data for that category or tweak the algorithm. The result is a model that performs better, leading to more conversions or savings. Better AI performance directly translates to better financial results, whether that’s higher sales from recommendations or lower costs from efficient inventory management. It ensures you truly get the ROI that AI promised in the first place.

Customer Satisfaction and Loyalty: A reliable, well-behaved AI enhances customer experience. Think of a chatbot that actually solves customer issues without confusion, or a personalized offer that feels just right. These positive experiences can boost customer satisfaction. On the flip side, by avoiding the negative AI experiences (like wrong charges, irrelevant suggestions, or perceived discrimination), you’re not giving customers reasons to leave. In retail, keeping customers happy and loyal is gold. Well-tested AI contributes to that by being a consistent, positive touchpoint. There’s a reason why major retailers heavily invest in QA – they know a smooth customer experience is partly thanks to catching glitches before customers ever see them.

Regulatory and Legal Safety: With upcoming regulations on AI (and existing laws on consumer protection and anti-discrimination), having a documented QA process and demonstrably fair AI can protect you from fines and lawsuits. If something does go wrong, being able to show that you took responsible steps can mitigate reputational damage. Essentially, QA is part of good governance and due diligence. It’s much cheaper to fix a problem early than to fight a class-action lawsuit or regulatory investigation later. Techstrong Media notes that continual monitoring and testing help maintain fairness and address biases over time, underscoring that regulators expect proactive management of AI risks.

Competitive Advantage: Finally, think strategically. If your competitors are rushing AI into production and they hit a scandal or their system makes a big mistake, their customers may lose trust. If you’ve taken the careful road with QA, you’re less likely to have those missteps. You can position your brand as one that uses “trusted AI”. In marketing to your customers, this can even be a point of differentiation: for example, a note on your website or press releases that you have an ethics and quality process for AI. In an era where consumers are increasingly skeptical of unchecked AI, being the retailer that got it right is a competitive edge.

7. Conclusion: Ensuring Reliable AI for Retail Success

AI is no longer a novelty in retail – it’s becoming business as usual. But as we integrate AI deeper into operations and customer touchpoints, ensuring its reliability and integrity must become business as usual too. For SMB retail executives, the message is clear: you don’t have to be a tech giant to implement robust AI Quality Assurance. With the right approach, even a lean team can put effective checks and tests in place.

Quality Assurance in AI is about earning trust – the trust of your customers, your employees, and your own trust in the systems you deploy. By rigorously testing for accuracy, consistency, and fairness, you turn AI from a risky bet into a reliable partner. You catch the problems in the lab so they never surface in the storefront. In doing so, you safeguard your brand’s reputation and ensure that your investment in AI truly pays off.

As you lead your company through the exciting frontier of AI in retail, remember that due diligence is your safety harness. Embrace a culture that questions and tests algorithms just as much as it celebrates what they can do. Encourage your teams to flag odd AI behaviors and treat those findings as opportunities to improve, not embarrassments to hide. When quality assurance becomes ingrained in your AI projects, you create a virtuous cycle: better AI leads to better business outcomes, which leads to more resources and confidence to expand AI in new areas – all under the umbrella of trust and verification.

In summary, testing models for reliability is the unsung hero of AI success stories. It’s the hard work behind the scenes that prevents headline-worthy failures. By focusing on QA, you’re not slowing down innovation – you’re fortifying it. Retail is detail, and in the age of AI, that adage extends to digital details like algorithms and data. Sweat the details now, and you’ll reap the rewards of AI-driven retail transformation with far fewer hiccups. Here’s to reliable, fair, and effective AI powering your retail success for years to come.
