47. Open-Source AI Tools – A Transformative Opportunity for Financial SMEs

Artificial Intelligence isn’t just for Wall Street giants or Silicon Valley unicorns anymore. Today, small and mid-sized financial institutions (SMEs) – from community banks and credit unions to boutique investment firms – can tap into the power of AI without breaking the bank. How? Through open-source AI tools. These are free, community-driven software frameworks and libraries that anyone can use. For a cost-conscious SME operating under tight budgets and strict regulations, open-source AI offers a compelling path to innovation. Imagine deploying a cutting-edge fraud detection system or an intelligent credit scoring model with zero licensing fees and total control over the code. It’s possible – and many forward-thinking financial SMEs are doing it already.

In this comprehensive guide, we’ll explore the opportunities open-source AI brings to financial SMEs. We cover strategic considerations (like cost, security, and scalability), compare open-source with proprietary solutions, highlight top open-source AI tools in finance, and discuss how to overcome implementation challenges. Throughout, we’ll provide real examples and best practices, so you come away with a clear roadmap for leveraging open-source AI in your organization.

Whether you’re a bank executive looking to optimize operations, a fintech founder aiming to build innovative products, or an IT manager tasked with “doing more with less,” this article will show you how open-source AI can be a game-changer for your business – and how to adopt it effectively and safely. Let’s dive in!

Q1: FOUNDATIONS OF AI IN SME MANAGEMENT - CHAPTER 2 (DAYS 32–59): DATA & TECH READINESS

Gary Stoyanov PhD

2/16/2025 · 33 min read

1. Why Open-Source AI Makes Sense for Financial SMEs

1.1 Cost Savings

For any SME, controlling cost is vital. Proprietary AI software or platforms often come with hefty price tags – think expensive licenses, per-user fees, or revenue-sharing models. Open-source AI tools, on the other hand, are typically free to use. There are no licensing fees. You can download a library like TensorFlow or scikit-learn and start building, without writing a check. The cost savings can be substantial: not only do you save on software purchase, but you also avoid vendor maintenance contracts and costly upgrades. Many SMEs redirect these savings into hardware (like better servers or cloud services) or into hiring talent to actually build the models.

One community bank, for example, reported saving over $500,000 per year by replacing a proprietary fraud detection system with an open-source AI solution they developed in-house. Those savings went straight to their bottom line – or into funding other strategic projects.

1.2 No Vendor Lock-In

Vendor lock-in is a common concern in finance. If you adopt a proprietary system, you often become dependent on that vendor’s technology and roadmap. It can be hard to switch providers or adapt if the vendor’s product no longer meets your needs (and switching is usually extremely costly and disruptive). Open-source software mitigates this risk. Because the code is open and standards-based, you can move it, modify it, or even fork it if needed. You’re not tied to a single vendor’s ecosystem. For instance, you could start running an open-source AI tool on AWS cloud today and decide next year to shift to Azure or on-premises servers – and you could do so relatively easily, since you have full control over the software. This flexibility is like an insurance policy for your tech investments.

A study in the financial sector found that 77% of firms cited reducing vendor lock-in as a key benefit of open-source adoption.

For SMEs, avoiding lock-in not only preserves agility but also gives you negotiating power. If you do need enterprise support for an open-source tool, there are often multiple companies (sometimes including the tool’s original developer) that can compete for your business – keeping costs competitive.

1.3 Customization and Control

Finance isn’t a one-size-fits-all industry. A community bank has different needs than a large commercial bank; a fintech lending startup underwrites loans differently than FICO does. Open-source AI allows you to customize and tailor solutions to your exact needs. Since you have access to the source code, you can tweak algorithms, add features, or integrate deeply with your existing systems. Want your credit risk model to incorporate an unconventional data source? You can modify an open-source model to do that. Need to adhere to a new regulation that requires additional data logging? You can build that capability around your open-source pipeline.

This level of control is usually impossible with closed, proprietary AI systems where you can only adjust what the vendor lets you adjust. With open-source, you effectively own the “recipe” of your AI solution. Many SMEs find that this control not only helps them create a better fit for their business, but it also streamlines compliance (because they can ensure the system does exactly what regulations require).

1.4 Innovation & Community Support

Open-source projects are at the heart of AI innovation. Much of the latest research in machine learning is implemented and shared through open-source. This means by adopting open-source tools, you’re plugging into a global R&D effort. You get access to state-of-the-art techniques, often years before they might appear in commercial products. For example, advancements in natural language processing (like transformers) or new algorithms for anomaly detection are typically available open-source long before a vendor includes them in a paid offering.

Furthermore, for SMEs that can’t afford a dedicated R&D division, the open-source community acts as an extension of your team. Thousands of developers, including those at big tech companies and universities, contribute improvements, fix bugs, and answer questions online. Resources like Stack Overflow, community forums, and GitHub repositories are invaluable. If you run into an issue, chances are someone else has too – and the solution is already documented. This collaborative support can often substitute for formal vendor support. As one fintech founder put it, “Whenever we hit a roadblock, we found an answer on the internet within hours – it’s like having a 24/7 support line, powered by enthusiasts around the world.”

1.5 Scalability and Performance

Don’t let the word “open-source” give the impression of hobbyist or low-grade software. The reality is that many open-source AI tools are enterprise-grade in performance. They are built and used by the likes of Google, Facebook (Meta), NASA, and yes, big banks too. TensorFlow, for instance, was developed by Google Brain – it can distribute training across hundreds of machines or run on a smartphone, scaling up or down as you need. For an SME, this means headroom: you can start small, but the same tools can scale as your data or user base grows. You won’t need to re-platform when you go from 1,000 customers to 100,000 customers.

Open-source tools often exploit the latest hardware (GPUs, TPUs) and efficient algorithms. This matters in finance where speed can equal money – e.g., processing transactions in real-time for fraud, or running risk calculations overnight before markets open. Many SMEs have successfully used open-source AI to achieve real-time analytics that were previously only dreamt of, thanks to the performance optimizations contributed by the community.

In short, open-source AI brings to financial SMEs what open-source Linux brought to enterprise computing years ago: a cost-effective, flexible, scalable foundation that you can build world-class systems on. It shifts the advantage towards those who are willing to embrace it and invest in harnessing its power.

2. Strategic Considerations: Cost, Security, Compliance, and Integration

Adopting open-source AI is a strategic decision. It’s important to consider a few key factors to ensure success:

2.1 Cost-Effectiveness Analysis

While open-source software is free, it’s not “free” in the sense that it runs itself. SMEs should plan for indirect costs: hardware or cloud resources to run AI workloads, training for staff, possibly consulting support for implementation, and ongoing maintenance (updates, monitoring). The good news is even with these factored in, open-source solutions tend to be significantly cheaper over time than proprietary ones. Avoiding license fees can save tens or hundreds of thousands annually. Plus, you often avoid the dreaded yearly “price hike” letters from vendors because you own your solution. When building your business case, consider a 5-year total cost of ownership (TCO): include the cost of any outside help, additional hiring, and infrastructure. Compare this to the quote a vendor gives for their solution. In many cases, SMEs find the TCO of open-source can be 50-80% lower than proprietary options for equivalent functionality – a huge win for the bottom line.
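The 5-year TCO comparison described above can be sketched in a few lines of Python. This is a minimal illustration, not a costing method from the article: the `CostProfile` fields and every dollar figure are hypothetical placeholders to be replaced with your own vendor quotes and internal estimates.

```python
from dataclasses import dataclass


@dataclass
class CostProfile:
    """Costs for one option. All figures used below are hypothetical."""
    upfront: float                # one-time: implementation, consulting, migration
    annual_license: float         # yearly license or subscription fees
    annual_infra: float           # servers or cloud compute
    annual_staff: float           # share of salaries, training, maintenance
    annual_increase: float = 0.0  # yearly price escalation applied to license fees


def total_cost_of_ownership(profile: CostProfile, years: int = 5) -> float:
    """Sum upfront costs plus recurring costs over the given horizon."""
    total = profile.upfront
    for year in range(years):
        license_fee = profile.annual_license * (1 + profile.annual_increase) ** year
        total += license_fee + profile.annual_infra + profile.annual_staff
    return total


# Illustrative inputs only; replace with your own quotes and estimates.
proprietary = CostProfile(upfront=50_000, annual_license=200_000,
                          annual_infra=20_000, annual_staff=40_000,
                          annual_increase=0.05)
open_source = CostProfile(upfront=80_000, annual_license=0,
                          annual_infra=40_000, annual_staff=120_000)

tco_prop = total_cost_of_ownership(proprietary)
tco_oss = total_cost_of_ownership(open_source)
savings_pct = 100 * (tco_prop - tco_oss) / tco_prop

print(f"Proprietary 5-year TCO: ${tco_prop:,.0f}")
print(f"Open-source 5-year TCO: ${tco_oss:,.0f}")
print(f"Savings: {savings_pct:.0f}%")
```

Note that the open-source profile deliberately carries higher staff and infrastructure costs. The result depends heavily on those inputs, so it is worth running the comparison with pessimistic as well as optimistic estimates.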

Also consider the opportunity cost: by saving money with open-source, you free up budget that can be invested elsewhere, whether in other projects or in marketing. One SME lender allocated savings from not renewing a software license towards a data scientist’s salary – effectively trading a software expense for a new hire who not only implemented an open-source replacement but then kept improving models continuously. That’s a strategic reallocation of funds that yields ongoing returns.

2.2 Security & Risk Management

With great power (control of code) comes great responsibility. When you run open-source AI, you are effectively your own vendor in terms of security. It’s critical to implement strong cybersecurity practices. This includes:

Keeping Software Updated: Stay on top of updates for your open-source tools. Subscribe to mailing lists or RSS feeds for security advisories. Many open-source AI frameworks will post patches if vulnerabilities are discovered. Don’t ignore those updates.

Using Reputable Libraries: Stick to widely-used, well-supported libraries. For example, TensorFlow and PyTorch are backed by large organizations and have many contributors and users – they are likely to be more secure, and to be patched faster, than an obscure project with one maintainer.

Secure Configurations: Out of the box, some tools may have debug modes or settings that aren’t secure for production. Ensure you (or your IT team) configure them following industry guidelines (for instance, requiring authentication on any dashboards or APIs you enable).

Data Security: AI often involves a lot of data. Ensure that sensitive data (personally identifiable information, account numbers, etc.) is protected. Use encryption for data at rest and in transit. Open-source encryption libraries (like OpenSSL) are standard and reliable; use them. Also, consider techniques like data masking or synthetic data for training, so that even if training data is exposed, it doesn’t leak real customer info.

Testing: Before deploying, test your AI application for vulnerabilities. There are open-source security scanners that can be run (for web services, etc.). Engage your security team to do penetration testing. Basically, treat it with the same seriousness as you would a new online banking portal launch.

Governance: Set up internal policies for using open-source. For example, maintain an inventory of open-source components in use (there are tools for Software Bill of Materials – SBOM generation). That way, if a major vulnerability (like the famous Log4j incident) hits the news, you can quickly identify if you’re affected and where. Some financial regulators are starting to ask about open-source governance, so having this in place also demonstrates proactive risk management.
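As a rough starting point for the component inventory mentioned under Governance, the sketch below lists the Python packages installed in an environment using only the standard library. It is not a full SBOM: it covers Python packages only, and the license field is whatever each package declares in its metadata, which may be missing or imprecise. The function names are illustrative.

```python
from importlib import metadata


def list_installed_components() -> list[dict]:
    """Return name, version, and declared license for each installed package."""
    components = []
    for dist in metadata.distributions():
        meta = dist.metadata
        components.append({
            "name": meta.get("Name") or "unknown",
            "version": meta.get("Version") or "unknown",
            "license": meta.get("License") or "not declared",
        })
    return sorted(components, key=lambda c: c["name"].lower())


def find_component(components: list[dict], name: str) -> list[dict]:
    """Look up a package by name, e.g. when a vulnerability is announced."""
    wanted = name.lower()
    return [c for c in components if c["name"].lower() == wanted]


inventory = list_installed_components()
print(f"{len(inventory)} Python packages installed")
for component in inventory[:5]:
    print(component["name"], component["version"], component["license"])
```

Saving this output alongside each deployment gives you a dated record to search when an advisory is published. Dedicated SBOM tools go further by covering system libraries and transitive dependencies.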

The bottom line is that open-source can be as secure as any proprietary solution – many would argue more secure, since you have nothing hidden and a community watching for issues – but it requires you to actively manage it. Most SMEs find that with a bit of discipline and perhaps external guidance initially, they can confidently run open-source in a highly secure manner. (Many large banks do – they wouldn’t if it were inherently insecure).
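To make the data-masking idea from the list above concrete, here is a minimal sketch using Python's standard `hmac` and `hashlib` modules. It assumes a simple record layout and a placeholder secret key, both hypothetical. Keyed hashing is one pseudonymization approach among several, and on its own it does not make data anonymous in the regulatory sense.

```python
import hashlib
import hmac


def pseudonymize(value: str, secret_key: bytes) -> str:
    """Replace an identifier with a keyed hash so records can still be joined."""
    digest = hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()


def mask_account_number(account_number: str, visible: int = 4) -> str:
    """Hide all but the last few characters, e.g. for logs and dashboards."""
    if len(account_number) <= visible:
        return "*" * len(account_number)
    return "*" * (len(account_number) - visible) + account_number[-visible:]


# Hypothetical key: in practice load it from a secrets store, never from code.
SECRET_KEY = b"replace-with-a-key-from-your-secrets-store"

# Hypothetical customer record.
record = {"account": "1234567890", "name": "Jane Doe", "amount": 250.0}

# The training row keeps a stable token instead of the account number,
# and drops the customer name entirely.
training_row = {
    "account_token": pseudonymize(record["account"], SECRET_KEY),
    "amount": record["amount"],
}

masked = mask_account_number(record["account"])
print(masked)
print(training_row)
```

The same account number always maps to the same token, so transactions can still be grouped per customer during training without exposing the real identifier.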

2.3 Regulatory Compliance

Financial services is one of the most regulated industries, and rightly so. When deploying AI, you need to be mindful of laws and guidelines such as:

Privacy regulations (GDPR, CCPA): Ensure data usage complies with privacy laws. For instance, under GDPR, if you’re using personal data to train models, you need a lawful basis (such as consent or legitimate interest), and you must also consider the rules on automated decision-making, often described as a “right to explanation,” if that model makes automated decisions about individuals. If you adopt an open-source AI model for credit scoring in Europe, you’ll likely need to be able to explain to a rejected applicant why the model said no. This is doable – techniques for model interpretability (like SHAP values, LIME) are available as open-source add-ons. So include an explainability step in your AI pipeline.

Fair Lending and Bias (ECOA, etc.): AI models, if not carefully managed, can inadvertently discriminate. It’s crucial to test your models for bias. Open-source toolkits like AI Fairness 360 (originally from IBM) can help check bias metrics. You may also need to periodically review model factors with compliance officers to ensure no prohibited variables are effectively being used via proxies.

SEC/FINRA Guidelines: If you’re in securities or brokerage, regulators like FINRA have issued guidance on AI. A key point they emphasize is supervision – just because a machine is making decisions doesn’t mean you can set it and forget it. You need to supervise AI outputs like you would a human employee’s work. That means monitoring model decisions, keeping humans in the loop at critical junctures, and ensuring there’s an escalation path if the AI does something unexpected. Document your AI model development and validation as you would a traditional model under SR 11-7 (the Federal Reserve’s model risk management guidance, familiar to those in banking).

Auditability: Regulators or auditors might ask: “How was this model developed? Show me it was properly validated.” If you use open-source, you have the advantage of transparency – you can show the actual code and parameters. But make sure to keep good records: which data was used for training, what assumptions were made, how was the model tested, and what results came out. Many SMEs maintain a “model document” for each key AI model, akin to documentation a vendor might provide, but produced internally. This builds trust with regulators and management.

Licensing Compliance: Slightly different angle – open-source tools come with licenses (Apache 2.0, MIT, BSD, etc.). Most are very permissive (you can use them freely in commercial contexts), but you should still keep track. Ensure none of the licenses carry obligations you aren’t meeting (for example, GPL-licensed code can require you to release your modifications under the same license when you distribute the software). Generally, major AI libraries use permissive licenses that are business-friendly. This is usually a minor point, but your legal team might want to review licenses of key components.

One encouraging aspect: regulators are increasingly aware of AI and are educating themselves. They generally don’t ban AI or open-source; instead, they focus on outcomes (is it fair, is it secure, can you explain it?). If you implement open-source AI thoughtfully, you can meet these expectations. In fact, your ability to custom-fit and explain open-source models can give you an edge in compliance compared to a black-box vendor model where you have to say “we just trust the vendor”.
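The bias testing mentioned under Fair Lending can start with something as simple as comparing approval rates between groups. The sketch below computes an approval-rate ratio in plain Python on made-up decisions. The group labels, the data, and the 0.8 threshold (a commonly cited rule of thumb, not a legal test) are assumptions for illustration. Toolkits like AI Fairness 360 offer this kind of metric and many more.

```python
from collections import defaultdict


def approval_rates(decisions: list[tuple[str, bool]]) -> dict[str, float]:
    """Compute the share of approved applications for each group."""
    approved = defaultdict(int)
    total = defaultdict(int)
    for group, was_approved in decisions:
        total[group] += 1
        approved[group] += int(was_approved)
    return {group: approved[group] / total[group] for group in total}


def disparate_impact_ratio(rates: dict[str, float],
                           protected: str, reference: str) -> float:
    """Approval rate of the protected group divided by the reference group's."""
    return rates[protected] / rates[reference]


# Made-up model decisions: (group label, approved?)
decisions = (
    [("group_a", True)] * 8 + [("group_a", False)] * 2 +
    [("group_b", True)] * 5 + [("group_b", False)] * 5
)

rates = approval_rates(decisions)
ratio = disparate_impact_ratio(rates, protected="group_b", reference="group_a")

print(rates)
print(f"Approval-rate ratio: {ratio:.3f}")
if ratio < 0.8:  # rule-of-thumb threshold, used here only as a review trigger
    print("Ratio below 0.8: flag for compliance review")
```

A low ratio does not prove discrimination and a high one does not rule it out, so treat the number as a prompt for a closer look with your compliance officers.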

2.4 Integration and IT Infrastructure

Before jumping in, assess your current IT landscape. Where will an AI model live? How will it fetch data and how will results be consumed?

Open-source AI tools typically run on Linux-based systems (often in Docker containers). If you already use cloud services, integrating is relatively straightforward – you can use cloud servers or managed Kubernetes to deploy your AI services. If you run on-premises, ensure you have a server environment (or at least powerful desktops or a small server for initial development). For heavy model training, having a GPU (either in-house or renting one in the cloud) can dramatically speed up work.

Integration points to consider:

Data Sources: Identify where your data is coming from. Core banking database? CSV exports? Real-time message queues? You might need connectors – e.g., if data is in Oracle, you’ll use a Python Oracle client to pull it into a pandas DataFrame for modeling. These connectors are usually available (often provided by the database vendor or open-source communities). Plan the data pipeline for training (one-time or periodic data loads) and for production (e.g., a real-time feed vs. batch processing overnight).

Systems to Integrate With: Will the AI output feed into a decision engine, a dashboard, or a customer-facing app? For example, if an AI model approves loans, you’d integrate it with your loan origination system. Often, wrapping the model in a REST API is a clean solution. That way any system (regardless of language or platform) that can make an HTTP call can get a prediction. There are lightweight frameworks (Flask, FastAPI in Python) to create such APIs easily.

Core System Constraints: Some older core banking systems might not easily interface with modern tech. In such cases, you might use an intermediate step – e.g., export data from core system daily, run the AI model on that data on a separate server, then import results back. It’s not real-time but can still add value (like daily risk reports or weekly churn predictions). Over time, you can work on tighter integration.

IT Team Involvement: Involve your IT team early. They will likely need to provision environments, ensure network security for any new services, and possibly manage deployments. Frame the open-source AI introduction not as a rogue experiment, but as an enhancement to the infrastructure. Many IT departments are actually quite open to open-source, since they likely already use Linux, Apache, etc. Work with them on what’s needed for a development environment vs. a production environment.
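To illustrate the REST API pattern from the integration points above, here is a dependency-free sketch. In practice Flask or FastAPI would handle the routing. This version instead uses a bare WSGI callable from Python's standard library so that it runs anywhere. The `/predict` path, the field names, and the toy `score_application` rule are hypothetical stand-ins for a real trained model.

```python
import io
import json
from wsgiref.simple_server import make_server
from wsgiref.util import setup_testing_defaults


def score_application(features: dict) -> float:
    """Hypothetical stand-in for a trained model's predict call."""
    income = max(float(features.get("income", 0)), 0.0)
    loan_amount = max(float(features.get("loan_amount", 0)), 0.0)
    # Toy rule: a larger loan relative to income gives a higher risk score.
    return min(1.0, loan_amount / (income * 5 + 1))


def _json_response(start_response, status: str, payload: dict) -> list:
    """Serialize a dict as a JSON HTTP response."""
    body = json.dumps(payload).encode("utf-8")
    start_response(status, [("Content-Type", "application/json"),
                            ("Content-Length", str(len(body)))])
    return [body]


def app(environ, start_response):
    """Minimal WSGI app exposing POST /predict with a JSON body."""
    if (environ.get("REQUEST_METHOD") != "POST"
            or environ.get("PATH_INFO") != "/predict"):
        return _json_response(start_response, "404 Not Found",
                              {"error": "not found"})
    try:
        length = int(environ.get("CONTENT_LENGTH") or 0)
        features = json.loads(environ["wsgi.input"].read(length))
        score = score_application(features)
    except (ValueError, TypeError, AttributeError):
        return _json_response(start_response, "400 Bad Request",
                              {"error": "invalid payload"})
    return _json_response(start_response, "200 OK",
                          {"risk_score": round(score, 4)})


def call_locally(payload: dict) -> tuple:
    """Invoke the app in-process, without opening a network port."""
    body = json.dumps(payload).encode("utf-8")
    environ = {}
    setup_testing_defaults(environ)
    environ.update({"REQUEST_METHOD": "POST", "PATH_INFO": "/predict",
                    "CONTENT_LENGTH": str(len(body)),
                    "wsgi.input": io.BytesIO(body)})
    captured = {}

    def start_response(status, headers):
        captured["status"] = status

    response = b"".join(app(environ, start_response))
    return captured["status"], json.loads(response)


def serve(host: str = "127.0.0.1", port: int = 8000) -> None:
    """Run a local development server (not suitable for production)."""
    with make_server(host, port, app) as server:
        server.serve_forever()


status, result = call_locally({"income": 60000, "loan_amount": 15000})
print(status, result)
```

Calling `serve()` starts a local server for experimentation. A production deployment would sit behind a proper application server with TLS and authentication, in line with the secure-configuration advice earlier in this section.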

2.5 Talent and Training

A strategic plan should include the human element. Do you have people who can run these tools? If not, will you train existing staff or hire new talent or work with consultants? Often a mix is best: maybe send a couple of keen analysts or engineers for AI training (or online courses), and possibly bring in a contractor or consulting firm like HIGTM to accelerate initial development while mentoring the team.

Fortunately, open-source skills are widespread. Many young data scientists learn on open-source (because that’s what universities teach and what’s readily available). Hiring for Python/R, machine learning, etc., will tap into a large talent pool – certainly larger than hiring for a proprietary skill like “X Software Certified Professional,” which narrows candidates.

Having a plan to build an internal “AI champion” team – even if it’s a team of 2 or 3 – can go a long way. They can pilot projects and then cross-pollinate knowledge to others in the organization.

3. Open-Source AI vs Proprietary Solutions: Pros, Cons, and Finding the Right Fit

We touched on this in strategy, but let’s lay it out clearly.

3.1 Open-Source AI – Pros

Zero License Cost: Use your budget for other things (or simply save money).

Flexibility & Customization: Modify the code or models to your heart’s content.

Transparency: You can see how everything works. Critical for trust and debugging.

Community & Innovation: Access to a global knowledge network and rapid improvements.

No Lock-In: You decide where and how to run it; switch environments or providers freely.

3.2 Open-Source AI – Cons

Requires Expertise: You need people who know how to use and deploy these tools (or a partner to help you). It’s like getting a high-performance race car – without a trained driver, you can’t maximize it.

Support is DIY or Third-Party: There’s no default vendor to call at 2 AM. You have to rely on community support or pay someone (like a support vendor or consultancy). Though, to be fair, many SMEs running proprietary software also end up hiring consultants or waiting for vendor support windows.

Integration Effort: Not pre-integrated into your environment – you have to do the work. A proprietary solution might come pre-integrated or with promises of “plug and play” (though often reality falls short of marketing).

Perceived Risk: Some stakeholders might be wary of “free software” (“Is it secure? Who do we blame if something goes wrong?”). This is more of a mindset issue but must be managed with education and governance.

3.3 Proprietary AI – Pros

Ready-Made Solutions: In some cases, you get an end-to-end package. For instance, a vendor might offer a complete fraud monitoring system where you just input your data and it generates alerts, with a nice UI on top. This convenience can be valuable if you lack any in-house capability or need something very quickly.

Vendor Support & SLA: You usually have a contract that guarantees support. If the system goes down, the vendor is obligated to help get it back up. They may also handle updates, backups, etc. (Though in reality, you still need an internal admin to manage vendor systems too.)

Training & Documentation: Vendors often provide formal training sessions, user manuals, and perhaps advisory services on how to use their product for your use cases. This can flatten the learning curve for your team.

Accountability: There’s a sense of “one throat to choke.” If the software misbehaves, you can escalate to the vendor. With open-source, if something goes wrong, your team has to figure it out (or escalate within your own management). Some companies are more comfortable having that external accountability.

3.4 Proprietary AI – Cons

High Costs: License fees, support fees, consulting fees – it can become a large ongoing expense. Also, many vendor models are subscription-based now, meaning you pay perpetually.

Less Customizable: You might be stuck with whatever features are offered. If you need something custom, you request it and maybe it appears in next year’s release (or not). Workarounds might be clunky.

Lock-In: Once integrated, it’s hard to yank out a vendor system. You may also be pushed to buy more modules or services from the vendor (upselling the “ecosystem”). If you try to switch, migrating data and processes can be painful – vendors know this, which can embolden them to raise prices or be less responsive over time.

Slower Innovation: Vendors have development cycles, and they may not prioritize what you need. If a new breakthrough in AI comes out, you could be waiting a while to get it in their product, whereas in open-source you could implement or adopt it immediately.

One-Size-Fits-All: Vendors try to build solutions that cater to many clients, which can mean certain features or workflows aren’t exactly as you’d prefer. Some degree of your process might have to conform to the tool, rather than the tool fitting your process.

3.5 Finding the Right Fit

The decision isn’t always binary. Some SMEs use a hybrid approach:

Maybe use open-source core libraries to develop your proprietary algorithms, but use a paid platform to serve them (though that’s less common now with easy deployment tools).

Or use open-source tools internally, but a proprietary vendor for a customer-facing part (e.g., you build models in-house but use a vendor’s reporting dashboard to present results).

Some proprietary products actually incorporate open-source under the hood. (Be aware, you might be paying for things you could get for free. But sometimes the value-add is the glue and polish they provide).

The key is to evaluate: What are your organization’s strengths and weaknesses? If you have an IT team hungry to innovate and learn, open-source will energize them and they’ll likely excel. If your team is extremely lean and already stretched thin, and you have available budget, a proprietary solution could get you to baseline faster (though possibly at the cost of a higher long-term spend).

Another factor is control vs convenience. Open-source gives control; vendor gives convenience (at least in theory upfront). Many SMEs start with convenience (vendor) when they have no capability, but as they grow or feel the pinch of costs, they shift to more control (open-source). Some others jump straight to open-source from the start, especially newer fintech companies that are tech-savvy from day one.

One concrete strategy: If you’re unsure, pilot something open-source in parallel with evaluating vendors. For example, have your analyst build a simple model using Python over a few weeks with open-source data science libraries, while you also demo a vendor product. Compare the results and the experience. You may find the “homegrown” approach is more viable than you thought (or you identify gaps you need to fill). The pilot won’t cost much except some time, and it gives you a taste of what open-source adoption entails.

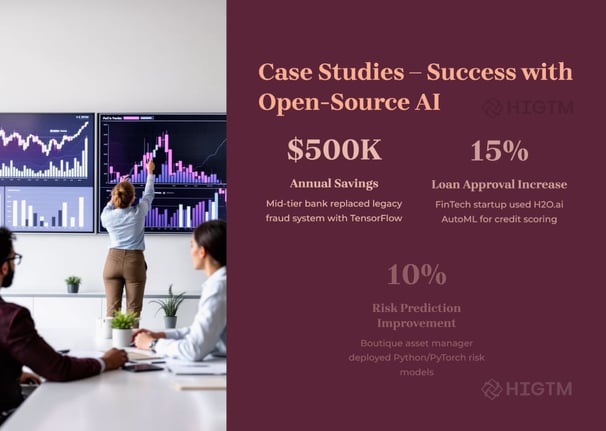

4. Real-World Success Stories

Let’s bring theory to life with a couple of case studies of financial SMEs that implemented open-source AI successfully:

4.1 Case Study 1: Fraud Detection in a Mid-Sized Bank

The Challenge: A mid-sized bank (let’s call it “SecureBank”) was experiencing losses due to fraud, especially in online payments and transfers. They had an older rule-based fraud management system which generated a lot of false alarms and was expensive to maintain. Every year, the vendor’s support fees went up. SecureBank’s operations team was overwhelmed manually reviewing flagged transactions (most of which turned out legitimate, angering customers with transaction delays).

The Open-Source AI Solution: SecureBank’s analytics team proposed an in-house fraud detection model using open-source AI. They used Python as the language and libraries like scikit-learn and XGBoost (an open-source gradient boosting library) to develop a model on historical transaction data. With guidance from a consultant, they implemented a machine learning model that could learn patterns of fraudulent vs. legitimate transactions. They also used TensorFlow to experiment with a neural network for fraud detection, particularly to catch new types of fraud by learning complex patterns.

For integration, they didn’t rip out the old system immediately. They ran the new model in parallel (“shadow mode”) where it would flag transactions but not actually block them, comparing its performance to the legacy system. In a couple of months, it was clear the open-source model was more accurate – it caught several fraud attempts missed by the old rules, and it significantly reduced false positives (it was more precise). The bank then integrated the model into production: basically, their transaction processing platform would call a Python API service running the model (in real-time). This was achieved using a lightweight Flask API. The model decision and a score would come back, and if high risk, the transaction was flagged for review or declined.
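A shadow-mode comparison like the one described above boils down to scoring both systems against the same confirmed outcomes. The sketch below does this with precision (how many flags were real fraud) and recall (how much fraud was caught). The ten transactions and both sets of flags are invented for illustration and are not SecureBank's data.

```python
def precision_recall(flags: list[bool], is_fraud: list[bool]) -> tuple[float, float]:
    """Compare a system's flags with confirmed fraud labels."""
    true_pos = sum(f and y for f, y in zip(flags, is_fraud))
    false_pos = sum(f and not y for f, y in zip(flags, is_fraud))
    false_neg = sum(not f and y for f, y in zip(flags, is_fraud))
    precision = true_pos / (true_pos + false_pos) if (true_pos + false_pos) else 0.0
    recall = true_pos / (true_pos + false_neg) if (true_pos + false_neg) else 0.0
    return precision, recall


# Invented example: confirmed outcomes for ten transactions.
is_fraud = [True, False, False, True, False, False, False, True, False, False]

# What each system flagged for the same ten transactions.
legacy_flags = [True, True, True, False, True, False, False, True, False, True]
shadow_flags = [True, False, False, True, False, True, False, True, False, False]

legacy_precision, legacy_recall = precision_recall(legacy_flags, is_fraud)
shadow_precision, shadow_recall = precision_recall(shadow_flags, is_fraud)

print(f"Legacy rules: precision={legacy_precision:.2f} recall={legacy_recall:.2f}")
print(f"Shadow model: precision={shadow_precision:.2f} recall={shadow_recall:.2f}")
```

Because the shadow model only records its flags and never blocks anything, this comparison can run for weeks on live traffic without affecting customers.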

Results: Fraud losses dropped by an estimated 30% in the first year due to better detection. False alerts dropped by 20%, meaning fewer customers impacted by unnecessary blocks. The bank saved on vendor fees – roughly $300k annually – by not renewing the old system’s license. They did incur some costs: they paid a consultant for a few weeks and invested in a couple of GPU servers (and eventually used cloud instances for scalability). But the ROI was positive within months. Perhaps as valuable, the bank now owned their fraud model. They could update it quickly as fraud patterns evolved (no waiting for vendor updates). Their internal analytics team felt a sense of pride and empowerment, which led them to tackle more projects (next they focused on anti-money laundering alerts with similar techniques).

Takeaway: Even a mission-critical area like fraud can be handled by open-source AI, with results often superior to legacy vendor systems. The keys were a careful pilot, ensuring regulatory compliance (they involved their compliance team to ensure the model decisions could be explained and that no legitimate customers were unfairly treated), and a phased integration that mitigated risk.

4.2 Case Study 2: Credit Scoring by a Fintech Lender

The Challenge: A fintech startup (we’ll call it “LendFast”) wanted to issue consumer loans online. Their target market included younger borrowers or those with thin credit files – people often overlooked by traditional credit scoring. They needed an underwriting model to predict default risk, but traditional scores (like FICO) weren’t sufficient or sometimes not available for their users. As a startup, budget was tight; they couldn’t afford a fancy proprietary AI platform or a team of 20 data scientists. They had a small team of 3 analysts with decent programming skills.

The Open-Source AI Solution: LendFast went all-in on open-source. They used the H2O.ai open-source platform for its AutoML functionality. AutoML (Automated Machine Learning) trained multiple models (random forests, gradient boosting, neural nets, etc.) on their data and gave them the best one. This helped the small team quickly get a high-performing model without manually tuning dozens of algorithms. They combined traditional credit data with alternative data (like utility bill payments, rent history, even some social data with user consent) to feed the model. H2O’s system ranked variable importance, allowing them to ensure no variable was being used that could introduce bias or regulatory issues.

They also prioritized interpretability – for compliance, they needed to provide reasons for denial. So even though a complex ensemble might be more accurate, they chose a more explainable setup (a gradient boosting machine with SHAP values for interpretability, or a two-stage approach that segments applicants and uses a simpler model in each segment). All of this was possible with open-source tools. They used Python for data processing (the pandas library to clean and join data) and fed it into H2O (which has a Python API).

For deployment, since they were a modern tech startup, they containerized the model using Docker and deployed it on a cloud service. Every time a loan application came in via their website, their system would call this containerized model (through an API endpoint) to get a score and decision.

Results: LendFast was able to underwrite thousands of loans in their first year with a solid handle on risk. They achieved loan approval rates ~15% higher in their target segment (young, thin-credit customers) than a traditional score cut-off would have produced, without increasing default rates beyond acceptable levels.

In other words, they grew their customer base safely using AI to better assess risk. Because their models were built on open-source tools and were interpretable, they navigated regulatory exams successfully – they could show how the model worked, demonstrate that it did not incorporate prohibited factors (such as race or gender), and explain decisions to customers in plain terms, like “Your loan was denied because your reported income was too low relative to the loan amount and you have a short credit history.”
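Denial reasons of this kind can be derived from per-feature contributions to a model's score. The sketch below is a simplified stand-in for SHAP rather than LendFast's actual method. It assumes a linear (logistic) model, where each feature's contribution is its weight times the applicant's distance from a baseline value. All weights, baselines, feature names, and reason texts are hypothetical.

```python
import math

# Hypothetical logistic-regression weights for predicting approval.
WEIGHTS = {
    "income_to_loan_ratio": 1.2,
    "credit_history_years": 0.4,
    "on_time_rent_share": 2.0,
}
# Hypothetical baseline: feature values of a typical applicant.
BASELINE = {
    "income_to_loan_ratio": 3.0,
    "credit_history_years": 5.0,
    "on_time_rent_share": 0.9,
}
INTERCEPT = 1.0  # log-odds of approval for an applicant at the baseline

REASON_TEXT = {
    "income_to_loan_ratio": "Reported income is low relative to the loan amount",
    "credit_history_years": "Short credit history",
    "on_time_rent_share": "Too few on-time rent payments",
}


def contributions(applicant: dict) -> dict:
    """Per-feature shift in log-odds relative to the baseline applicant."""
    return {name: WEIGHTS[name] * (applicant[name] - BASELINE[name])
            for name in WEIGHTS}


def approval_probability(applicant: dict) -> float:
    """Logistic model: baseline log-odds plus the sum of contributions."""
    logit = INTERCEPT + sum(contributions(applicant).values())
    return 1 / (1 + math.exp(-logit))


def denial_reasons(applicant: dict, top_n: int = 2) -> list:
    """Return the features that pushed the score down the most."""
    negative = sorted((value, name)
                      for name, value in contributions(applicant).items()
                      if value < 0)
    return [REASON_TEXT[name] for _, name in negative[:top_n]]


applicant = {"income_to_loan_ratio": 1.0, "credit_history_years": 1.0,
             "on_time_rent_share": 0.95}
probability = approval_probability(applicant)
reasons = denial_reasons(applicant)

print(f"Approval probability: {probability:.2f}")
print("Reasons:", reasons)
```

For non-linear models such as gradient boosting, a library like SHAP plays the role of the `contributions` function, while the step of mapping the largest negative contributions to customer-facing reason texts stays the same.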

Cost-wise, their expenditure was minimal: they paid for cloud compute and storage and used free software. A traditional lender might have paid a vendor or a team of PhDs millions to develop a comparable risk model. Instead, this 3-person team delivered it using open-source in a few months.

As the company grew, they continued this approach, even open-sourcing some of their own code improvements (contributing back to the community). They eventually hired more data scientists, who were attracted to the company precisely because they were using cutting-edge open tools rather than legacy systems. This helped fuel an innovative culture.

Takeaway: For startups and smaller financial players, open-source AI is often the only viable way to build advanced analytics without huge capital. It levels the playing field, allowing them to punch above their weight in sophistication. With AutoML and other user-friendly open tools, even a small team can create complex models. And because they own the solution end-to-end, they can adapt quickly as business needs change.

These case studies highlight a common theme: open-source AI empowers financial SMEs to solve problems in-house that they used to rely on vendors for. By doing so, they not only save money but often achieve better outcomes (because the solution is tailored to their data and context). It does require investment in people and learning, but the return on that investment is high.

5. Top Open-Source AI Tools for Finance (and How to Use Them)

The open-source ecosystem can feel overwhelming – there are hundreds of projects. But a handful have emerged as go-to tools for AI, and are particularly relevant for finance use cases. Here we’ll introduce some of the most popular ones and give examples of how financial SMEs can use them:

5.1 TensorFlow

Developed by Google, TensorFlow is a powerful framework for building and training neural networks and other machine learning models.

Use Cases in Finance: TensorFlow is often used for deep learning tasks. In finance, a common use is fraud detection – e.g., building a neural network that detects fraudulent transaction patterns (credit card fraud, identity theft attempts). It’s also used for financial forecasting: time series prediction of stock prices, option pricing (some quants use TensorFlow to approximate complex pricing models), or even macroeconomic indicators. Another emerging use is in Natural Language Processing (NLP) for finance – TensorFlow can train NLP models to, say, parse news articles or earnings call transcripts to gauge sentiment and predict stock moves.

Why It’s Great: TensorFlow can scale from a single laptop to a distributed cluster with GPUs or TPUs (Tensor Processing Units). It has a high-level API (Keras) that makes it relatively user-friendly for beginners, and low-level control if needed. Google and others have open-sourced many pre-trained models in TensorFlow (check TensorFlow Hub), which can be fine-tuned for specific tasks – for example, a pre-trained language model that you can adapt to analyze financial text (like sentiment on social media regarding your bank).

Example: A credit card company could use TensorFlow to implement an autoencoder model – a type of neural network – that learns the “normal” patterns of customer transactions. If a new transaction looks very different from the reconstruction the autoencoder expects, it could indicate fraud. This unsupervised approach can catch new types of fraud. TensorFlow’s strong support for such architectures and anomaly detection use cases makes it a solid choice.
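A minimal sketch of the autoencoder idea, using the Keras API bundled with TensorFlow, might look like the following. The transaction data is synthetic, and the layer sizes, epoch count, and 99th-percentile threshold are assumptions chosen only to keep the example small.

```python
import numpy as np
import tensorflow as tf

tf.keras.utils.set_random_seed(42)
rng = np.random.default_rng(42)

# Synthetic "normal" transactions: 8 features driven by 2 hidden factors.
latent = rng.normal(size=(5000, 2))
mixing = rng.normal(size=(2, 8))
normal_tx = (latent @ mixing + 0.05 * rng.normal(size=(5000, 8))).astype("float32")

# Synthetic anomalies: feature values that ignore the usual structure.
odd_tx = rng.uniform(-6, 6, size=(50, 8)).astype("float32")

autoencoder = tf.keras.Sequential([
    tf.keras.Input(shape=(8,)),
    tf.keras.layers.Dense(16, activation="relu"),
    tf.keras.layers.Dense(2),                      # bottleneck
    tf.keras.layers.Dense(16, activation="relu"),
    tf.keras.layers.Dense(8),
])
autoencoder.compile(optimizer="adam", loss="mse")
# Train the network to reproduce its own input, using normal transactions only.
autoencoder.fit(normal_tx, normal_tx, epochs=30, batch_size=64, verbose=0)

def reconstruction_error(x):
    """Mean squared difference between each row and its reconstruction."""
    recon = autoencoder.predict(x, verbose=0)
    return np.mean((x - recon) ** 2, axis=1)

# Flag anything reconstructed worse than 99% of the normal transactions.
threshold = np.percentile(reconstruction_error(normal_tx), 99)
flagged = reconstruction_error(odd_tx) > threshold
print(f"Flagged {flagged.mean():.0%} of the synthetic anomalies")
```

In practice the threshold is a business decision: a lower percentile catches more fraud but sends more legitimate transactions to manual review.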

5.2 PyTorch

Developed by Facebook’s AI Research lab, PyTorch is another leading deep learning framework, loved for its flexibility and dynamic computation graph (which makes debugging easier).

Use Cases in Finance: PyTorch is widely used in research and increasingly in production. Finance use cases are similar to TensorFlow: fraud detection, market prediction, NLP tasks (like analyzing customer chats for compliance or service quality), and more. PyTorch is especially popular in quantitative finance research for prototyping deep models because of its pythonic and flexible nature. For instance, some hedge funds use PyTorch to develop reinforcement learning models for trading (learning strategies by trial and error in simulation).

Why It’s Great: PyTorch feels more intuitive to many Python developers (no static computation graph to compile, you just write normal Python code that does the math). This makes it easier to test and iterate. It also has a strong community, and many cutting-edge AI research papers release PyTorch code, so you get early access to new model architectures. Tools like PyTorch Lightning provide high-level structure to make PyTorch training easier for production.

Example: A risk management team might use PyTorch to build a stress-testing model. Imagine modeling how a portfolio of loans might behave under various economic scenarios (economic stress testing). PyTorch could be used to create a model that takes in scenario parameters (like unemployment rate spike, interest rate changes) and outputs expected portfolio losses. Because of PyTorch’s flexibility, the team can easily incorporate custom loss functions and constraints (perhaps to ensure the model aligns with known business rules). Once satisfied, the model can be optimized and even converted to run in C++ for deployment via TorchScript if needed, making it viable for production.
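The stress-testing idea can be sketched roughly as follows. The scenario data and the loss-rate formula are invented for illustration, and the custom loss (mean squared error plus a penalty on negative predicted loss rates) is just one simple example of encoding a business rule, not a recommended stress-testing methodology.

```python
import torch
from torch import nn

torch.manual_seed(0)

# Synthetic scenarios: [unemployment rise (pp), interest-rate rise (pp)].
scenarios = torch.rand(2000, 2) * torch.tensor([8.0, 4.0])
# Invented relationship: portfolio loss rate in percent.
true_loss = 1.0 + 0.6 * scenarios[:, 0] + 0.3 * scenarios[:, 1]
observed = (true_loss + 0.2 * torch.randn(2000)).unsqueeze(1)

model = nn.Sequential(nn.Linear(2, 16), nn.ReLU(), nn.Linear(16, 1))

def stress_loss(pred, target, penalty_weight=10.0):
    """MSE plus a penalty for predicting negative portfolio loss rates."""
    mse = torch.mean((pred - target) ** 2)
    negative_penalty = torch.mean(torch.relu(-pred))
    return mse + penalty_weight * negative_penalty

optimizer = torch.optim.Adam(model.parameters(), lr=0.01)
for _ in range(800):
    optimizer.zero_grad()
    loss = stress_loss(model(scenarios), observed)
    loss.backward()
    optimizer.step()

# Compile to TorchScript so the model can be loaded outside Python.
scripted = torch.jit.script(model)

baseline = torch.tensor([[0.0, 0.0]])
severe = torch.tensor([[6.0, 3.0]])
with torch.no_grad():
    base_rate = scripted(baseline).item()
    severe_rate = scripted(severe).item()
print(f"baseline loss rate: {base_rate:.2f}%, severe scenario: {severe_rate:.2f}%")
```

The scripted module can be saved with `scripted.save(...)` and loaded from a C++ service, which is the deployment path the example above refers to.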

5.3 scikit-learn

This is the classic machine learning library in Python, containing efficient implementations of basically all the standard machine learning algorithms (except deep learning). It’s great for regression, classification, clustering, etc.

Use Cases in Finance: Scikit-learn is a workhorse for structured data. Credit scoring models (logistic regression, decision trees, random forests) can be done in scikit-learn. Customer segmentation using clustering (k-means, DBSCAN) is straightforward. Churn prediction (which customers are likely to leave) can be modeled with its classification tools. It’s also used in regulatory compliance for things like predicting which transactions might be flagged by regulators as unusual (using anomaly detection algorithms). Because it’s easy to use and well-documented, many analysts without a hardcore CS background can pick it up to start adding AI to Excel-like work.

Why It’s Great: Scikit-learn is simple but powerful. It’s extremely well-documented and consistent. Training a model often comes down to a few lines of code (model = RandomForestClassifier().fit(X, y)). It also integrates perfectly with other parts of Python’s ecosystem (NumPy for numeric computation, pandas for data frames). For many problems, you don’t need deep learning – a well-tuned random forest or gradient boosting model from scikit-learn does the job with less complexity and more interpretability.
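To make the "few lines of code" claim concrete, here is a small sketch of a credit-default classifier with a hold-out evaluation. The three features and the rule that generates defaults are invented, so the printed numbers say nothing about real portfolios.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(1)
n = 3000
# Hypothetical applicant features; the data-generating rule is made up.
debt_to_income = rng.uniform(0, 1, n)
late_payments = rng.poisson(1.0, n)
years_employed = rng.uniform(0, 20, n)
X = np.column_stack([debt_to_income, late_payments, years_employed])
risk = 2.5 * debt_to_income + 0.8 * late_payments - 0.1 * years_employed
y = (risk + rng.normal(scale=0.7, size=n) > 1.5).astype(int)  # 1 = default

# Hold back a quarter of the data to measure out-of-sample performance.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=1, stratify=y
)
model = RandomForestClassifier(n_estimators=200, random_state=1).fit(X_train, y_train)

auc = roc_auc_score(y_test, model.predict_proba(X_test)[:, 1])
print(f"Hold-out AUC: {auc:.3f}")
feature_names = ["debt_to_income", "late_payments", "years_employed"]
for name, importance in zip(feature_names, model.feature_importances_):
    print(f"{name}: {importance:.2f}")
```

The feature importances give a first, rough view of what drives the model, which is part of why tree ensembles are often easier to explain than deep networks.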

Example: A regional bank’s marketing team wants to identify segments in their customer base for targeted outreach. They have transaction behaviors, product holdings, demographics, etc. They can use scikit-learn’s KMeans clustering to group customers into, say, 5 segments with distinct profiles (perhaps “Young digital-savvy savers”, “High-wealth investors”, “Credit-focused borrowers”, etc.). Scikit-learn makes it easy to try different numbers of clusters, evaluate cluster quality, and extract the defining features of each cluster. This informs marketing strategy, and it took just a few hours for an analyst to do with open-source tools – versus possibly paying a marketing consultancy quite a bit for similar insights.
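A rough sketch of that segmentation workflow is shown below: it tries several cluster counts and compares them with the silhouette score, one of the cluster-quality measures scikit-learn provides. The three customer groups and their column meanings are invented for the example.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.metrics import silhouette_score
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(7)
# Three invented customer groups: [monthly card spend, savings balance, loan balance].
groups = [
    rng.normal([300, 2_000, 500], [80, 500, 200], size=(200, 3)),
    rng.normal([1_500, 60_000, 1_000], [300, 8_000, 400], size=(200, 3)),
    rng.normal([800, 5_000, 25_000], [150, 1_500, 4_000], size=(200, 3)),
]
# Standardize so that no single column dominates the distance calculation.
X = StandardScaler().fit_transform(np.vstack(groups))

scores = {}
for k in range(2, 7):
    labels = KMeans(n_clusters=k, n_init=10, random_state=7).fit_predict(X)
    scores[k] = silhouette_score(X, labels)  # closer to 1 means cleaner clusters

best_k = max(scores, key=scores.get)
print({k: round(s, 2) for k, s in scores.items()}, "best k:", best_k)

# Cluster centers (in standardized units) describe each segment's profile.
final = KMeans(n_clusters=best_k, n_init=10, random_state=7).fit(X)
print(final.cluster_centers_.round(2))
```

Real customer data is rarely this cleanly separated, so the silhouette score is best treated as one input alongside whether the segments make business sense.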

5.4 H2O.ai (open-source H2O platform)

H2O.ai provides an open-source machine learning platform (sometimes called H2O-3) that is very popular in business settings, plus a paid Driverless AI product. Here we focus on the open-source part.

Use Cases in Finance: H2O’s AutoML capability is a standout – it’s great for quickly building models for things like credit risk, underwriting, fraud, marketing response prediction, etc. Many banks have used H2O for credit scoring and stress testing because it can efficiently train models on large datasets (it’s written in Java and can run distributed). It also has modules for GLM (generalized linear models) that replicate things like logistic regression with regularization, which is useful for regulatory models that need to be simple and interpretable.

Why It’s Great: H2O is designed to be enterprise-friendly. It can read directly from data sources like Hadoop clusters or CSV files and distribute the modeling task across multiple servers. It supports many algorithms (random forests, GBM, deep learning, GLM, etc.) and offers an easy-to-use web interface as well as R/Python APIs. For a team that might not be coding experts, H2O’s Flow interface (a web GUI) lets them click through data exploration and model training, which can be less intimidating. H2O AutoML will rank models and even do some hyperparameter tuning for you. The fact that 18,000+ companies use open-source H2O, including many in finance, adds to its credibility.

Example: An insurance company needs to build a model to predict which policyholders are likely to claim (to adjust premiums or target retention efforts). They have a lot of data and not a lot of data science staff. Using H2O, they upload their data to an H2O cluster and let AutoML rip. It might produce a gradient boosted trees model that has high accuracy. H2O then provides explanations for the model (variable importances, partial dependence plots to see how each factor affects outcome). This process can compress what might be weeks of manual model building into a few hours of computation and analysis. The team can then validate and tweak the top model, perhaps simplifying it slightly for deployment, and use it in their policy management system. The heavy lifting was done by open-source AI in an automated way.

Other Notable Tools:

XGBoost & LightGBM: These are specialized libraries for gradient boosting, extremely popular in Kaggle competitions and structured data problems. Finance heavily uses these for things like credit scoring (they often win in terms of accuracy vs. logistic regression or single decision trees). They are open-source (XGBoost originally came out of academia/community; LightGBM from Microsoft). SMEs can easily plug these into Python/R workflows. On large datasets they tend to be faster and more memory-efficient than scikit-learn’s original GradientBoostingClassifier.

Apache Spark (MLlib): If you have Big Data (like terabytes of records) and need to distribute computing across a cluster, Spark’s machine learning library can run algorithms in parallel. For example, a big retail bank might use Spark’s ALS algorithm for recommendation (to recommend products to customers based on transaction history) or use Spark ML to train a fraud model on billions of rows. For an SME, Spark might be overkill unless you are data-heavy, but if you already use a Hadoop/Spark environment, it’s a great way to bring AI to the data rather than moving data around.

NLTK / spaCy / Hugging Face Transformers: For text-based AI, these are key. An SME could use spaCy to do entity recognition in documents (e.g., automatically pulling out names, dates, amounts from legal contracts) to speed up compliance checks. Hugging Face provides access to advanced NLP models (like BERT, GPT variants) which can be fine-tuned for financial language tasks (there are even pre-trained models tuned on financial text). Open-source NLP has matured a lot – one can, for example, classify customer emails or social media posts for sentiment or urgency using these tools effectively.

Dash/Streamlit (for AI dashboards): Once you have models, you might want to expose them or their results to business users. Open-source tools like Dash (by Plotly) or Streamlit allow you to build interactive dashboards or mini web apps in Python easily. For instance, a risk officer could have a dashboard (powered by Dash) that lets them adjust assumptions and see the model’s output (maybe a “what-if” loan risk scenario tool). It’s not an AI tool per se, but it’s part of delivering AI results in a user-friendly way.

Jupyter Notebooks: Worth a mention – Jupyter is the de facto environment for developing and sharing AI experiments. It’s open-source and incredibly useful for documenting the modeling process, doing EDA (exploratory data analysis), and even building simple reports. Many SMEs adopt Jupyter for their data science workflow.

In summary, the open-source toolbox for AI is rich. Financial SMEs don’t need all of it – the key is to pick the right tool for the job:

For straightforward predictive modeling on tabular data: scikit-learn, XGBoost, LightGBM, or H2O AutoML are great.

For complex patterns, large data, or unstructured data (images, text): TensorFlow or PyTorch, optionally with higher-level libraries such as Keras for ease of use or Hugging Face Transformers for NLP.

For large-scale distributed processing: Apache Spark (MLlib).

For integration and sharing: Flask/FastAPI for model APIs, Dash/Streamlit for UI.

All these tools can work together since they often use common data structures (pandas DataFrames, numpy arrays) or provide conversion utilities. It’s common to use scikit-learn for one part of a project and TensorFlow for another.

And remember: using these tools is free, but mastering them takes practice. Thankfully, there are abundant online tutorials, forums, and example projects to learn from – itself an advantage of open-source (the collective learning resources are vast). An SME can bootstrap an AI capability by leveraging not just the code but the knowledge shared by the community.

6. Overcoming Implementation Challenges

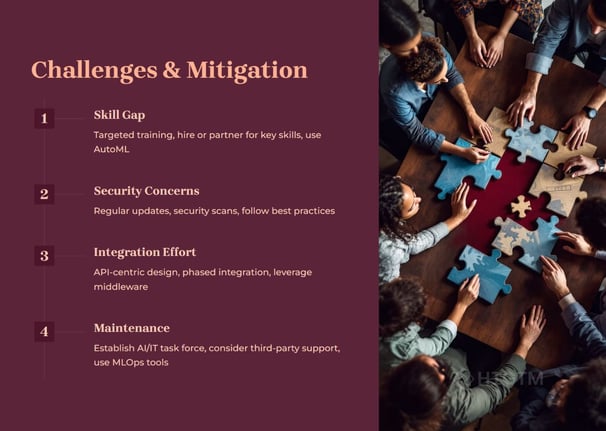

Adopting open-source AI is a journey. As we saw, there are challenges around skills, security, integration, and maintenance. Let’s discuss how an SME can address these effectively:

6.1 Challenge: Limited AI Expertise in-house.

Solution: Start with a pilot project and training. Identify employees who are tech-savvy or enthusiastic about analytics – maybe someone in IT who scripts a lot, or a business analyst who’s taught themselves some Python. Give them a small project (like, “predict which customers might close their account”) and the mandate to explore open-source solutions. While they work on it, provide time and possibly budget for learning – perhaps enroll them in an online course like Coursera’s Machine Learning or fast.ai’s deep learning course (both of which use open-source tools). You could also bring in a consultant or hire a part-time data scientist to mentor this team during the pilot. The goal is a low-stakes environment to build confidence. When the pilot yields something (even a simple model or insight), showcase it internally – this builds buy-in and demonstrates that “yes, our team can do AI.”

Additionally, consider collaborations: maybe a local university has a data science program – you could sponsor a student capstone project to work on one of your problems using open-source AI. It’s low-cost and can pipeline talent to you.

6.2 Challenge: Uncertainty about Security & Compliance.

Solution: Develop a simple governance framework for AI projects. This might sound fancy, but it could be as straightforward as a checklist that every AI project must go through: data privacy check (has legal approved using this data? do we anonymize it?), bias check (did we test the model for bias or fairness issues?), security check (did IT review the deployment plan for vulnerabilities?), and performance check (does it meet business accuracy requirements?). Engage your risk management or compliance officer early – explain what you’re doing, get their input. Many compliance folks appreciate being involved at design stage rather than being handed a black box later.

Also, lean on best practices: use well-vetted libraries (don’t put some random GitHub code that no one has reviewed into production), and keep an eye on official guidance. For example, the EU AI Act entered into force in August 2024 and its obligations phase in over the following years. If your AI is likely to fall under its requirements (for instance, models used for credit decisions about individuals are in the high-risk category), design with its principles in mind (transparency, human oversight). It’s easier to bake compliance into the solution than to retrofit it afterward.

Document everything. Make documentation a habit – data sources, model assumptions, evaluation results, who approved what. This not only helps with compliance, but also with internal knowledge transfer.
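As an illustration of what the bias check in such a checklist could look like in code, the sketch below compares approval rates across two groups on a hold-out set. The data is invented, and the 0.8 review threshold is an assumption borrowed from a common screening heuristic; the right test and threshold for your institution are a compliance decision.

```python
import pandas as pd

def approval_rate_ratio(decisions: pd.DataFrame, group_col: str, approved_col: str) -> float:
    """Ratio of the lowest group approval rate to the highest (1.0 = identical rates)."""
    rates = decisions.groupby(group_col)[approved_col].mean()
    return float(rates.min() / rates.max())

# Invented hold-out decisions. The group attribute is used only for testing,
# not as a model input.
decisions = pd.DataFrame({
    "group": ["A"] * 100 + ["B"] * 100,
    "approved": [1] * 60 + [0] * 40 + [1] * 45 + [0] * 55,
})

ratio = approval_rate_ratio(decisions, "group", "approved")
REVIEW_THRESHOLD = 0.8  # assumption: agree this value with your compliance officer
needs_review = ratio < REVIEW_THRESHOLD
print(f"approval-rate ratio: {ratio:.2f}, needs review: {needs_review}")
```

A result below the threshold does not prove the model is unfair, but it is a clear signal to investigate and document the findings before going further.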

6.3 Challenge: Integration with Existing Systems.

Solution: Plan integration as a phased approach:

Phase 1: Standalone Pilot – run the AI model offline or in parallel, not affecting existing systems. Use this to prove the concept and measure results.

Phase 2: Partial Integration – e.g., integrate the model into a non-critical part of the workflow or as a decision-support tool (humans see the model’s output and use it, but it’s not fully automated). For example, a model that predicts cross-sell opportunities might just produce a report that sales reps see.

Phase 3: Full Integration – once confident, embed the model into the automated workflow (e.g., automatically approve low-risk loans as identified by the model).
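The three phases above can be expressed as a small routing rule that controls how much authority the model has. This is only a sketch: the `Decision` class, the action names, and the 2% auto-approval cut-off are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Decision:
    action: str   # "log_only", "human_review", or "auto_approve"
    score: float

def route_application(default_probability: float, phase: int,
                      auto_approve_below: float = 0.02) -> Decision:
    """Decide what the model is allowed to do in each rollout phase."""
    if phase == 1:
        # Standalone pilot: record the score, leave the existing process untouched.
        return Decision("log_only", default_probability)
    if phase == 2:
        # Partial integration: a person sees the score and makes the call.
        return Decision("human_review", default_probability)
    if phase == 3:
        # Full integration: automate only the clearly low-risk cases.
        if default_probability < auto_approve_below:
            return Decision("auto_approve", default_probability)
        return Decision("human_review", default_probability)
    raise ValueError(f"unknown phase: {phase}")

print(route_application(0.01, phase=3).action)
```

Keeping the phase as an explicit parameter makes it easy to roll back to decision support if monitoring shows a problem.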

Using APIs is generally the cleanest way. If your core system doesn’t support API calls, consider if it can at least import/export files and whether a batch process is acceptable. Many older systems can drop a file with data – you can have a separate process pick that up, run the model, and then return a file of results to be ingested. It’s not real-time but could be fine for overnight processes.
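For the file-based route, a batch scoring job can be quite small. The sketch below assumes the core system exports a CSV with a `customer_id` column and two feature columns (all column names and file names are hypothetical), and it trains a stand-in model on invented data so that it runs end to end.

```python
import tempfile
from pathlib import Path

import numpy as np
import pandas as pd
from sklearn.linear_model import LogisticRegression

FEATURES = ["balance", "txn_count"]  # hypothetical column names

def score_batch(model, in_path: Path, out_path: Path) -> int:
    """Read the core system's export, add a score column, write a results file."""
    batch = pd.read_csv(in_path)
    batch["score"] = model.predict_proba(batch[FEATURES])[:, 1]
    batch[["customer_id", "score"]].to_csv(out_path, index=False)
    return len(batch)

# Stand-in model trained on invented data: low balances mean high risk.
rng = np.random.default_rng(3)
train = pd.DataFrame({"balance": rng.normal(5, 2, 500), "txn_count": rng.poisson(20, 500)})
target = (train["balance"] + rng.normal(0, 1, 500) < 4).astype(int)
model = LogisticRegression(max_iter=1000).fit(train[FEATURES], target)

with tempfile.TemporaryDirectory() as tmp:
    export_file = Path(tmp) / "core_export.csv"
    results_file = Path(tmp) / "scores.csv"
    # Simulate the file that the legacy system would drop overnight.
    pd.DataFrame({
        "customer_id": [101, 102, 103],
        "balance": [1.5, 5.0, 9.0],
        "txn_count": [12, 20, 31],
    }).to_csv(export_file, index=False)

    n_scored = score_batch(model, export_file, results_file)
    results = pd.read_csv(results_file)

print(n_scored, list(results.columns))
```

In a real deployment the trained model would be loaded from disk and the job triggered by a scheduler rather than run inline.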

Also, explore if your existing software has integration hooks. Some core banking solutions allow “extensions” or calling external decision engines. If so, you can likely plug your model in there, possibly even directly in the language of the core (some banks export models as Java code to embed into Java-based core systems).

6.4 Challenge: Maintaining Models and Software.

Solution: Embrace MLOps (Machine Learning Operations) practices, akin to DevOps. For an SME, this doesn’t have to be heavy: even using Git for version control of your code and models is a good start (so you have a history of changes). Use scheduling tools (cron jobs, or something more advanced like Airflow) to automate model runs or retraining if needed. Monitor model performance over time. If accuracy is degrading, the patterns in your data may have changed since training (known as concept drift), and it might be time to retrain with fresh data.
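One lightweight way to monitor for drift, even before true outcomes are known, is to compare the distribution of recent model scores against the scores seen at training time. The sketch below uses the Population Stability Index (PSI), a metric widely used in credit risk; it is offered as one option, and the score distributions here are simulated.

```python
import numpy as np

def population_stability_index(reference, recent, n_bins=10):
    """PSI between reference (training-time) scores and recent scores."""
    reference = np.asarray(reference, dtype=float)
    recent = np.asarray(recent, dtype=float)
    # Interior bin edges are quantiles of the reference scores.
    inner_edges = np.quantile(reference, np.linspace(0, 1, n_bins + 1))[1:-1]
    ref_counts = np.bincount(np.searchsorted(inner_edges, reference), minlength=n_bins)
    new_counts = np.bincount(np.searchsorted(inner_edges, recent), minlength=n_bins)
    # Floor the shares so an empty bin does not produce log(0).
    ref_share = np.clip(ref_counts / len(reference), 1e-6, None)
    new_share = np.clip(new_counts / len(recent), 1e-6, None)
    return float(np.sum((new_share - ref_share) * np.log(new_share / ref_share)))

rng = np.random.default_rng(11)
training_scores = rng.beta(2, 5, 10_000)
same_population = rng.beta(2, 5, 10_000)
shifted_population = rng.beta(3, 4, 10_000)  # simulated change in the customer mix

psi_stable = population_stability_index(training_scores, same_population)
psi_shifted = population_stability_index(training_scores, shifted_population)
print(f"stable: {psi_stable:.3f}, shifted: {psi_shifted:.3f}")
```

A frequently quoted rule of thumb treats PSI below 0.1 as stable and above 0.25 as a significant shift, but these cut-offs are conventions rather than guarantees, so pair the metric with accuracy tracking once outcomes arrive.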

Many open-source tools exist to help with MLOps: for example, MLflow can track experiments and store models with a version number, and even deploy them. Docker containers can ensure your runtime environment is consistent (so “it works on my machine” is less of an issue). Kubernetes might be overkill for many SMEs, but if you’re deploying multiple services, a lightweight orchestration or just good use of cloud managed services can keep things manageable.

If you lack capacity to maintain, consider a support contract for critical pieces – e.g., some companies offer support for running TensorFlow in production or will manage your models on their cloud. This still is often cheaper than a full proprietary solution, but eases the ops burden. It’s essentially paying for help on open-source.

6.5 Challenge: Change Management (People and Culture).

This is often overlooked. Introducing AI can be sensitive – employees might fear job replacement or not trust the model’s decisions, etc. It’s crucial to position AI as augmenting human expertise, not replacing it. Involve the end-users (like loan officers, fraud analysts, etc.) in designing how AI will assist them. Get their feedback on the model outputs and iterate. Small successes and showing that AI makes their job easier (not irrelevant) will create advocates who then champion the new tools.

For example, if a model prioritizes which alerts an analyst should look at first (rather than doing random checks), highlight how it saves them time and helps catch important issues faster, rather than framing it as “the AI will now do the checking.” In time, if some roles shift (maybe fewer people needed for manual review), you can retrain those people to higher-value analytical or oversight roles – ensuring everyone grows with the technology adoption.

7. The Future of AI in Finance and HIGTM’s Role

The financial industry is on the cusp of an AI-powered transformation. Open-source tools are accelerating this by democratizing access to sophisticated algorithms. We foresee a future where:

AI is ubiquitous in financial decision-making – from personalized customer service chatbots to real-time fraud defense that adapts instantly to new tactics, to dynamic credit pricing that adjusts risk-based interest rates on the fly.

Regulators increase focus on AI transparency – meaning the ability to explain and audit AI is as important as the accuracy. Open-source will play a key role because it inherently allows for inspection and understanding.

SMEs punching above their weight – smaller firms can collaborate (perhaps share anonymized data or models via federated learning) and collectively improve their AI capabilities, blurring the line between what a big bank can do and what a tech-savvy community bank can do.

Continuous innovation – new open-source projects will emerge (for instance, privacy-preserving ML is a growing field – imagine sharing insights without sharing raw data, through open algorithms).

In this evolving landscape, HIGTM positions itself at the forefront for clients. We aim to be not just consultants but partners in innovation:

We stay updated on the latest open-source advancements and distill which ones actually deliver value (so our clients don’t have to chase every GitHub repo – we separate signal from noise).

We engage in thought leadership – publishing insights (like this article), case studies, and hosting webinars to educate the financial SME community about best practices in AI.

We may even contribute to open-source (for example, building a plugin that makes a certain open-source tool more suitable for finance, and releasing it for the community). This keeps us hands-on and credible in the space.

For an SME looking at the future, aligning with open-source means aligning with continuous improvement. Unlike a static product that might update yearly, open-source projects update continuously. By adopting them, your organization becomes more agile and tech-forward. With HIGTM’s guidance, SMEs can create an AI adoption roadmap that is sustainable: start with foundational capabilities (like getting your data infrastructure right, maybe setting up a small cloud environment for analytics), then gradually layer on more sophisticated AI solutions.

As AI potentially disrupts business models (think of how robo-advisors emerged or how peer-to-peer lending uses AI to match borrowers and lenders), SMEs need to be ready to pivot and leverage AI in new offerings. Open-source gives the flexibility to experiment with such ideas without huge sunk costs. It’s no exaggeration to say that those who harness open-source AI effectively will have a competitive edge in customer experience, operational efficiency, and risk management.

HIGTM’s commitment is to ensure our clients are those frontrunners – not the ones scrambling to catch up. We provide the strategic vision, the technical know-how, and the change management expertise to infuse AI into your organization’s DNA.

Open-source AI tools present a golden opportunity for financial SMEs to innovate smarter, faster, and more affordably. By carefully considering strategic factors (cost, security, compliance, scalability) and leveraging the rich ecosystem of tools and community, even a small firm can build AI capabilities that rival those of large institutions. The journey has challenges – from skill gaps to integration hurdles – but none are insurmountable. With a clear vision, the right partners, and a willingness to learn and adapt, financial SMEs can ride the wave of AI to new heights of efficiency, customer satisfaction, and competitive advantage.

At HIGTM, we’re excited about this future. We’ve seen the spark in a COO’s eye when they realize an open-source solution can solve a problem in weeks that they assumed would cost millions. We’ve watched teams transform from Excel-bound analysts to proud data scientists automating decisions with AI. These success stories can be yours as well. The tools are ready and waiting – and so are we, to guide you in using them. The question now is: Are you ready to seize the open-source AI advantage?

(For more information or to discuss how your organization can implement open-source AI, feel free to reach out to HIGTM for a consultation. We’re here to help you navigate the journey from idea to impact.)

© 2025 HIGTM. All rights reserved.