44. Cybersecurity Fundamentals: Protecting AI Assets in the Age of Intelligent Threats

This comprehensive guide explores the major security risks AI systems face – from data breaches to adversarial manipulation – and provides a step-by-step strategy to safeguard your AI initiatives. Executives and business leaders will learn practical, actionable insights to protect their investment in AI while navigating an evolving regulatory landscape.

Q1: FOUNDATIONS OF AI IN SME MANAGEMENT - CHAPTER 2 (DAYS 32–59): DATA & TECH READINESS

Gary Stoyanov PhD

2/13/2025, 20 min read

1. Data Breaches and Privacy Violations in AI

AI thrives on data – often large, diverse datasets that include personal, sensitive, or proprietary information. This makes data breaches involving AI particularly damaging. A breach could mean exposing not just one database, but potentially everything the AI knows or has learned.

One unique concern is that AI models can inadvertently memorize aspects of their training data. For instance, researchers found that image-generating AI models (like Stable Diffusion) sometimes reproduce near-exact copies of images from their training set. If any of those images contained private or copyrighted content, the model itself has become a new channel for leaking it. Similarly, large language models trained on sensitive text might regurgitate a user’s personal information if prompted just the right way.

Real-World Example – Chatbot Data Leak: In March 2023, users of a popular AI chatbot (ChatGPT) were shocked to find conversation titles in their history that weren’t theirs. OpenAI later admitted a bug allowed some users to see parts of other users’ chat sessions. While the content exposed was limited (titles, not full transcripts), it was a stark reminder: AI services can leak data if something goes wrong. Now imagine if those chats contained confidential business info – a well-intentioned employee could have unknowingly put trade secrets into the chatbot, only for a stranger online to glimpse them. In fact, a similar scenario happened at Samsung: engineers pasted proprietary code into ChatGPT to help debug it, which left that data on external servers outside Samsung’s control.

Privacy Violations and Regulations: Data breaches in AI also raise flags with privacy laws. The EU’s GDPR doesn’t care whether a leak came from a traditional database or an AI model – if personal data of people in the EU is exposed, your company could face fines of up to €20 million or 4% of global annual turnover (whichever is higher).

Clearview AI learned this the hard way: in 2024, the Dutch data protection authority fined the company €30.5 million for scraping and using people’s photos in an AI facial recognition system without consent.

Even OpenAI was fined €15 million by Italy for how ChatGPT processed personal data.

Privacy violations can also erode customer trust permanently; clients want to know their data is safe, even when used in AI.

2. Mitigation – Protecting Data and Privacy:

To prevent AI-related data breaches and privacy mishaps:

Data Encryption & Access Control: Treat your AI training data and outputs as sensitive data stores. Encrypt data at rest and in transit. Limit access to the datasets – only team members with a need should handle raw data, and use secure data warehouses with strict permissions. This way, even if someone breaches a server, they can’t easily read the data.

Anonymization & Privacy Techniques: Whenever possible, anonymize or pseudonymize personal data before using it in AI training. Techniques like differential privacy add statistical noise to data or model results, making it hard to reverse-engineer any individual’s information.

For example, Apple and Google use differential privacy in their AI so they can collect usage data without learning exactly what one specific user did. If your AI can learn from data without memorizing exact personal details, you’ve reduced the privacy risk.
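To make the idea of "adding statistical noise" concrete, here is a minimal sketch of the Laplace mechanism applied to a simple counting query. The function names, the sample ages, and the epsilon value are illustrative assumptions, not anything Apple or Google has published. A production system would use a vetted differential privacy library rather than hand-rolled noise.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) noise as the difference of two exponentials."""
    rate = 1.0 / scale
    return rng.expovariate(rate) - rng.expovariate(rate)


def private_count(values, predicate, epsilon: float, rng: random.Random) -> float:
    """Return a noisy count of the values that satisfy `predicate`.

    A counting query changes by at most 1 when one person is added or
    removed (sensitivity 1), so the noise scale is 1 / epsilon.
    """
    true_count = sum(1 for v in values if predicate(v))
    return true_count + laplace_noise(1.0 / epsilon, rng)


# Hypothetical data: ages of eight customers.
ages = [23, 35, 41, 29, 52, 47, 38, 61]
rng = random.Random(42)  # seeded only to make the demo repeatable
noisy = private_count(ages, lambda age: age > 40, epsilon=0.5, rng=rng)
print(f"Noisy count of customers over 40: {noisy:.2f} (true count is 4)")
```

A smaller epsilon means more noise and stronger privacy, while a larger epsilon gives more accurate answers with weaker protection. Choosing that trade-off is a policy decision as much as a technical one.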

Secure AI Lifecycle: Build security checkpoints into the AI development process. This includes vetting training data sources (to ensure you’re allowed to use that data and it’s not tainted) and scanning models after training to see if they inadvertently retained any sensitive strings (researchers have tools to test language models for memorized secrets). If you find any, retrain with more regularization or privacy constraints.

Monitor and Respond: Much like you’d have a SIEM (Security Information and Event Management) for your databases, monitor AI systems for unusual data access or output patterns. If an AI system suddenly starts outputting things it shouldn’t (like chunks of raw data or someone’s SSN), that’s an incident. Investigate immediately, because it could be a sign of an attack or a bug. Have an incident response plan that covers AI-specific scenarios (for example, how to pull a model offline if it’s leaking data, and how to patch or retrain it).

By safeguarding the data pipeline around AI and adding privacy-minded controls, you drastically lower the chance that your shiny new AI will turn into a data geyser of private information.
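The post-training scan for memorized strings mentioned above can be approximated with a simple verbatim-overlap check. This is a rough sketch, not one of the research tools the text alludes to. The function name, the five-word window, and the sample record are all assumptions made for illustration.

```python
def find_memorized_spans(output, sensitive_records, min_words=5):
    """Return word windows from sensitive records that appear verbatim in a model output."""
    # Normalize whitespace and case, and pad with spaces so that only
    # whole-word matches count.
    padded_output = " " + " ".join(output.lower().split()) + " "
    hits = []
    for record in sensitive_records:
        words = record.lower().split()
        for start in range(len(words) - min_words + 1):
            window = " ".join(words[start:start + min_words])
            if f" {window} " in padded_output and window not in hits:
                hits.append(window)
    return hits


# Hypothetical sensitive training record and two hypothetical model outputs.
records = ["Patient Jane Roe was diagnosed with condition X on 3 May"]
leaky = "Sure. The file says patient Jane Roe was diagnosed with condition X."
clean = "I cannot share details about individual patients."

print(find_memorized_spans(leaky, records))
print(find_memorized_spans(clean, records))
```

An exact-match check like this only catches verbatim regurgitation. Paraphrased leaks need fuzzier matching, so treat a clean result as one signal rather than proof that nothing was memorized.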

3. Adversarial Attacks: AI Manipulation and Evasion Techniques

Unlike traditional software, AI can fail in strange ways when given inputs specifically designed to mislead it. These adversarial attacks don’t break into your system; instead, they feed your AI model data that causes it to make a wrong prediction or decision. It’s like an optical illusion crafted for machines.

There are a few common types of adversarial attacks on AI:

Evasion Attacks: Small, often imperceptible perturbations to input data that cause the model to misclassify it. In images, this could be tiny changes to pixels; in text, it might be a cleverly phrased prompt or special tokens that confuse a language model. The classic example is adding “noise” to an image of a panda such that a human still sees a panda, but an AI is 99% confident it’s looking at a gibbon.

Physical Attacks: Taking evasion into the real world – e.g., printing adversarial patterns on a sticker, t-shirt, or glasses. If done right, the AI model processing camera images gets fooled by the pattern.

Poisoning Attacks: Manipulating the training data or environment so the AI learns the wrong lesson or embeds a backdoor. (This one is more of a supply chain issue, which we’ll cover later, but it’s worth noting here as a way to set up an AI to be easily exploitable during operation.)
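The evasion idea can be shown on a toy model. The sketch below applies a fast-gradient-sign style perturbation to a tiny logistic classifier. The weights, bias, input, and epsilon are invented for illustration. For a linear model the gradient of the score with respect to the input is simply the weight vector, which is why no ML framework is needed here.

```python
import math

# Hypothetical "trained" weights for a three-feature logistic classifier.
WEIGHTS = [2.0, -3.0, 1.5]
BIAS = 0.1


def predict_proba(x):
    """Probability that input x belongs to class 1."""
    z = sum(w * xi for w, xi in zip(WEIGHTS, x)) + BIAS
    return 1.0 / (1.0 + math.exp(-z))


def sign(v):
    return (v > 0) - (v < 0)


def evasion_perturb(x, epsilon):
    """Move each feature by at most epsilon in the direction that lowers the class-1 score."""
    return [xi - epsilon * sign(w) for xi, w in zip(x, WEIGHTS)]


original = [0.5, 0.2, 0.3]
adversarial = evasion_perturb(original, epsilon=0.2)

print(f"original:    p(class 1) = {predict_proba(original):.3f}")
print(f"adversarial: p(class 1) = {predict_proba(adversarial):.3f}")
```

Each feature moves by no more than 0.2, yet the prediction crosses the 0.5 decision threshold. Attacks on deep networks follow the same principle, with the gradient computed by backpropagation instead of read off the weights.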

3.1 Real-World Example – Tricking Vision with Stickers

Researchers have repeatedly shown how easy it can be to fool machine vision systems. One team placed a few small stickers on a STOP sign, and a popular image recognition AI then saw it as a 45 mph speed limit sign.

In a similar vein, McAfee researchers demonstrated they could trick Tesla’s Autopilot by altering a road sign: a tiny piece of black tape on a “35 MPH” sign made the car interpret it as “85 MPH,” causing it to dangerously accelerate.

These examples highlight that adversarial attacks can have physical-world consequences – not just classification errors in a lab. For businesses, an adversarial attack might mean an AI security camera system can be bypassed by intruders wearing a certain patterned shirt, or a competitor feeding subtly crafted data into your public-facing AI API to make it malfunction.

3.2 Real-World Example – Prompt Manipulation

Adversarial attacks aren’t limited to vision. With the rise of language models, we’ve seen “prompt injection” attacks – where a user cleverly crafts input that causes an AI to ignore its instructions or reveal secrets. For instance, users found that by appending certain phrases or exploits in a prompt, they could get chatbots to output disallowed content or confidential information (essentially social engineering for AI). Microsoft’s AI chatbot Tay (launched in 2016) is a cautionary tale: internet trolls bombarded it with toxic language, causing the AI to begin outputting racist and misogynistic tweets within hours of launch. Tay’s machine learning system was manipulated via its input (tweets from users) – a crude but effective adversarial attack that forced Microsoft to shut it down.

3.3 Impact of Adversarial Attacks

The immediate risk is misleading outputs – an AI system gives the wrong answer or classification. In cybersecurity terms, this can be just as harmful as a data breach in certain contexts. For example, if an AI system that approves loan applications is tricked into misclassifying an obviously fraudulent application as safe, the bank loses money and unknowingly takes on risk. Or imagine a medical diagnostic AI misidentifying a tumor because the input scan had adversarial noise – a patient could miss early treatment. Beyond direct harm, adversarial attacks erode trust in AI systems. Users or executives might question the reliability of AI if it can be so easily duped.

4. Compliance and Regulatory Challenges (GDPR, CCPA, AI Act, etc.)

The regulatory environment around AI and data privacy is intensifying. Authorities across the globe have noticed the rapid adoption of AI and are crafting rules to ensure it’s done safely and ethically. For businesses, this means that AI security isn’t just a nice-to-have – it’s increasingly a legal requirement. Failing to secure AI systems and the data they use can lead to penalties, lawsuits, and bans.

Let’s break down a few major regulations and what they imply for AI security:

GDPR (General Data Protection Regulation) – Europe: GDPR is fundamentally about data protection and privacy for individuals. If your AI processes personal data of EU residents (which it likely does if you have any consumer data or even employee data), GDPR provisions apply. Two key concepts: privacy by design and data security. GDPR Article 5 demands that personal data be processed securely and with appropriate protection against breaches. If an AI system leaks personal data or is breached, you generally must notify the supervisory authority within 72 hours of becoming aware of the breach, and you could face fines of up to €20M or 4% of global annual turnover, whichever is higher.

Additionally, GDPR’s Article 22 gives individuals rights regarding automated decisions: they can request human intervention or an explanation. If an AI is compromised (say, by an attack that biases decisions against certain people), you could end up unknowingly violating non-discrimination principles too. Bottom line: securing AI under GDPR isn’t just about preventing breaches, but also ensuring the integrity and fairness of automated decisions.

CCPA/CPRA (California Consumer Privacy Act / California Privacy Rights Act): California’s laws give consumers rights similar to GDPR (access, deletion, opting out of sale of data). From a security standpoint, CCPA mandates businesses to implement reasonable security measures for personal data. If you suffer a data breach due to inadequate security, CCPA allows consumers to sue for damages (up to $750 per consumer per incident) even if they can’t prove harm.

That can add up fast if thousands of consumers’ data is leaked via an AI mishap. So if your AI is handling personal data of Californians, ensuring its security (and that of its data) is part of compliance.
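To see how quickly these ceilings scale, the arithmetic behind the GDPR and CCPA figures quoted above can be written out. This is only a sketch of the upper bounds as stated in this article. The function names and the example turnover and consumer counts are hypothetical, and actual penalties are set case by case by regulators and courts.

```python
def gdpr_max_fine_eur(global_annual_turnover_eur: float) -> float:
    """Upper bound: the greater of EUR 20 million or 4% of global annual turnover."""
    return max(20_000_000.0, 0.04 * global_annual_turnover_eur)


def ccpa_max_statutory_damages_usd(affected_consumers: int, incidents: int = 1) -> int:
    """Upper bound: USD 750 per consumer per incident."""
    return 750 * affected_consumers * incidents


# Hypothetical companies and breach sizes.
print(gdpr_max_fine_eur(100_000_000))          # small firm: the EUR 20M floor applies
print(gdpr_max_fine_eur(1_000_000_000))        # larger firm: 4% of turnover applies
print(ccpa_max_statutory_damages_usd(10_000))  # 10,000 Californians, one incident
```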

EU AI Act (EU): The AI Act is a groundbreaking regulation specific to AI systems. It entered into force in August 2024, and its obligations phase in over the following years. It classifies AI uses into risk categories: unacceptable (banned outright, like social scoring systems), high-risk (allowed but with strict controls, e.g. AI in medical devices or credit scoring), and limited or minimal risk. For high-risk AI, the Act requires things like risk assessments, documentation on training data and algorithms, transparency to users, and robust risk management systems throughout the AI lifecycle.

While the Act is not primarily a cybersecurity law, it explicitly requires high-risk AI systems to achieve appropriate levels of accuracy, robustness, and cybersecurity (Article 15), because a compromised system could cause serious harm. For example, an AI that controls critical infrastructure must be secured against sabotage. The Act also implies you should be able to explain and document your AI’s function – an AI wildly off course due to an attack or data poisoning could put you in non-compliance since it no longer behaves as documented.

Sectoral Regulations: Various industries have their own rules. In healthcare, HIPAA in the U.S. requires safeguarding patient data (if you use AI on patient info, it applies). In finance, regulations like NYDFS cybersecurity regulations, FFIEC guidelines, or even SOX for internal controls could be interpreted to include AI models that make significant decisions. Governments are issuing AI-specific guidelines too (e.g., NIST in the U.S. published an AI Risk Management Framework, and many standards bodies like ISO are working on AI security standards).

Challenges for Businesses: Compliance is challenging because laws lag technology, and AI can be a moving target. For instance:

How do you document an AI model’s decision process for regulators when even the engineers don’t fully understand the black box? (This is the explainability challenge – relevant to both compliance and security, as lack of understanding can hide vulnerabilities).

Privacy laws might give a person the right to have their data deleted. If that data was used to train an AI, how do you scrub the influence of that data from the model? It’s a hard technical problem; solutions like retraining from scratch or specialized deletion algorithms are being explored.

Regulators may expect robust AI governance: clear roles, procedures for when something goes wrong, and proof that you tested the AI for safety. Security testing will likely become a de facto requirement. For example, if an AI caused harm and you can’t show you ever tested it against adversarial inputs or that you lacked basic access controls, it will look very bad in court or regulatory hearings.

Mitigation – Building Compliance into AI Strategy:

To handle compliance, organizations should treat it hand-in-hand with security:

Map Your AI Use Cases to Regulations: Identify which laws apply to each AI system. Is it processing personal data (GDPR/CCPA)? Is it used in a regulated decision (credit, healthcare)? Is it deployed in Europe (AI Act)? For each applicable law, list the requirements (e.g., GDPR requires ability to respond to data subject rights, so you need to know what data an AI has on someone). This mapping ensures no AI project launches without considering legal obligations.

Privacy by Design: Incorporate privacy considerations from the get-go. Minimize personal data usage in AI if possible (use synthetic or aggregated data where you can). If personal data is necessary, ensure you have consent or another legal basis, and have a plan for data retention and deletion. Implement features to comply with user requests – e.g., the ability to delete a user’s data from the training set and possibly retrain or adjust the model (tools for machine unlearning are emerging).

Documentation and Transparency: Maintain documentation for each AI system: what data was it trained on, what algorithms and techniques were used, what potential biases or risks were identified, and what safeguards are in place. Not only does this help with compliance reporting, it also overlaps with good security practice (inventory and understanding of your assets). For high-risk AI, the EU AI Act will likely require submitting such documentation to authorities or notified bodies for approval. Even outside the EU, being able to explain your AI builds trust – think of it as an ingredient label for your AI product.

Robust Security Controls as Compliance Proof: Many compliance requirements boil down to “secure your system appropriately.” By implementing the security measures discussed throughout this article – encryption, access control, monitoring, testing – you can largely fulfill these requirements. For instance, if you are audited after a breach, showing that you had state-of-the-art encryption and were still breached (perhaps through a zero-day vulnerability) can help you defend against negligence claims. Conversely, if you left an S3 bucket of training data open, expect heavy fines and lawsuits. Regulators don’t expect perfection, but they do expect due diligence and reasonable best practices to be in place.

Stay Updated and Engage with Policy: The AI regulatory environment is evolving. Assign someone (or a team) to stay abreast of new laws and industry standards. This might be your Chief Privacy Officer or a specialized AI governance committee. Consider participating in industry groups or standards development – it can give early insight into what requirements might be coming. Some forward-leaning organizations are even creating an “AI ethics board” that overlaps with compliance and looks at not just what we can do with AI, but what we should do, aligning with emerging ethical norms that often precede regulation.

In summary, compliance is not separate from security – a lot of compliance in AI is about doing security and governance right. By treating user data with care, ensuring your AI’s integrity, and being prepared to show regulators that you run a tight ship, you not only avoid fines but also build trust with users and partners. It’s part of the cost (and reward) of doing business with AI in 2025 and beyond.

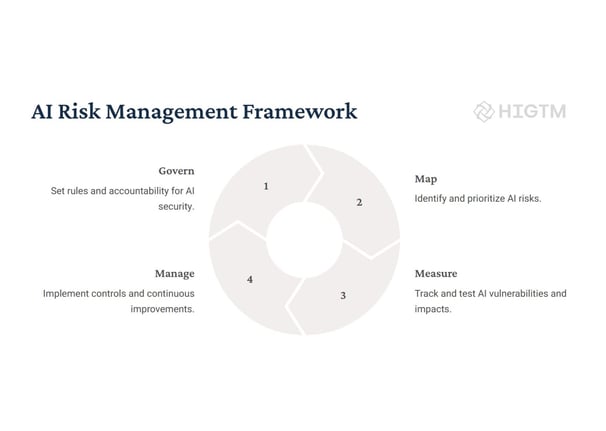

5. AI Security Best Practices & Risk Management Strategies

Having covered the landscape of threats and challenges, let’s turn toward solutions. Protecting AI assets requires a mix of traditional cybersecurity savvy and AI-specific practices. It also requires executive-level risk management – processes to continually assess and mitigate risks as your AI initiatives grow. Below is a step-by-step strategy that businesses of all sizes can adopt to systematically secure their AI systems.

Step 1: Identify and Inventory Your AI Assets

You can’t protect what you don’t know you have. Start by creating an inventory of all AI-related assets in your organization. This includes:

Data: Training datasets (e.g., customer data, transaction records, images, etc.), validation datasets, and data pipelines feeding into AI models. Note which contain personal or sensitive information.

Models: Each machine learning model in development, testing, or production. Document what it’s used for, who the owner is, and what algorithms or platforms it uses.

Infrastructure: The servers, cloud services, and devices where training and inference occur. Also any ML Ops tools, APIs exposing model functionality, and third-party AI services integrated into your workflows.

Personnel: Teams and key individuals working on AI (data scientists, ML engineers) and those with access to AI systems or data.

Once inventoried, classify the assets by criticality. For example, an AI model that recommends medical treatments or moves millions of dollars is critical. A prototype model analyzing public data for internal research might be less so.

This classification helps you prioritize where to focus first. Alongside it, perform a risk assessment for each asset by asking what could go wrong, e.g., “Model X could be manipulated to give wrong outputs,” “A leak of Dataset Y would violate GDPR,” or “If service Z goes down or is hacked, business stops.” This risk mapping will inform all subsequent steps.
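An inventory like this does not need special tooling to get started. The sketch below shows one possible shape for it: a small record per asset, a criticality level, and a list of "what could go wrong" notes. All field names, asset names, and owners are hypothetical.

```python
from dataclasses import dataclass, field
from enum import IntEnum


class Criticality(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass
class AIAsset:
    name: str
    kind: str  # "data", "model", "infrastructure", or "personnel"
    owner: str
    criticality: Criticality
    has_personal_data: bool = False
    risks: list = field(default_factory=list)


def prioritize(assets):
    """Order assets so the most critical ones, then those holding personal data, come first."""
    return sorted(assets, key=lambda a: (a.criticality, a.has_personal_data), reverse=True)


# Hypothetical inventory entries.
inventory = [
    AIAsset("research-prototype", "model", "analytics", Criticality.LOW,
            risks=["Stale public data skews internal reports"]),
    AIAsset("customer-transactions", "data", "data-eng", Criticality.HIGH,
            has_personal_data=True, risks=["Leak would violate GDPR"]),
    AIAsset("payment-routing-model", "model", "ml-platform", Criticality.HIGH,
            risks=["Could be manipulated to give wrong outputs"]),
]

for asset in prioritize(inventory):
    print(asset.criticality.name, asset.name, asset.risks)
```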

Step 2: Protect Data at Every Stage

Data is the lifeblood of AI – securing it is paramount. Implement strong controls around data:

Access Control & Least Privilege: Only allow the minimum necessary access to AI datasets. If a data scientist only needs aggregated data, don’t give them raw identifiable data. Use role-based access control for databases and storage buckets containing AI data. Regularly review who has permissions and revoke those no longer needed.
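A deny-by-default permission check captures the least-privilege idea in a few lines. The roles, permission strings, user names, and the 90-day review window below are assumptions chosen for the example. In practice these rules would live in your cloud provider's or database's access control system rather than in application code.

```python
# Hypothetical role-to-permission mapping for AI datasets.
ROLE_PERMISSIONS = {
    "data_scientist": {"read:aggregated"},
    "data_engineer": {"read:aggregated", "read:raw", "write:raw"},
    "auditor": {"read:access_logs"},
}


def is_allowed(role: str, action: str) -> bool:
    """Deny by default: unknown roles and unlisted actions are refused."""
    return action in ROLE_PERMISSIONS.get(role, set())


def stale_grants(days_since_last_use: dict, max_idle_days: int = 90) -> list:
    """Return users whose access has sat unused long enough to review for revocation."""
    return sorted(user for user, days in days_since_last_use.items() if days > max_idle_days)


print(is_allowed("data_scientist", "read:aggregated"))  # permitted
print(is_allowed("data_scientist", "read:raw"))         # refused
print(stale_grants({"ana": 12, "raj": 200, "kim": 95}))
```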

Encryption: Always encrypt sensitive data, both at rest (on disk) and in transit (network). Modern cloud services make this straightforward – ensure it’s enabled everywhere. Manage your encryption keys carefully (e.g., using a cloud key management service or HSM). For ultra-sensitive projects, consider using memory encryption or enclave technologies so even data in use is protected from other processes on the machine.

Data Masking & Anonymization: Before using data in development or testing, anonymize it. Replace personal identifiers with hashes or synthetic data. Use masking for any dashboards or outputs – e.g., if showing example model inputs, obscure names or ID numbers. This way, even if some data leaks, it’s less likely to expose real personal information.
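As a minimal sketch of replacing identifiers with hashes, the example below uses a keyed hash (HMAC-SHA256) rather than a plain one, because plain hashes of guessable values such as email addresses can be reversed by hashing candidate values. The function names, the 16-character truncation, and the demo key are assumptions. A real key would come from a secrets manager.

```python
import hashlib
import hmac


def pseudonymize(identifier: str, secret_key: bytes) -> str:
    """Map an identifier to a stable pseudonym using a keyed hash."""
    normalized = identifier.strip().lower().encode("utf-8")
    return hmac.new(secret_key, normalized, hashlib.sha256).hexdigest()[:16]


def mask_id(value: str, visible: int = 4) -> str:
    """Hide all but the last `visible` characters, e.g. for dashboards."""
    if len(value) <= visible:
        return "*" * len(value)
    return "*" * (len(value) - visible) + value[-visible:]


demo_key = b"demo-only-key"  # assumption: load the real key from a secrets manager
print(pseudonymize("Alice@Example.com", demo_key))
print(mask_id("123456789"))
```

The same input always yields the same pseudonym under a given key, so records can still be joined across tables. Anyone holding the key can re-link pseudonyms to known identifiers, which is why pseudonymized data still needs protection.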

Secure Data Sharing: If you need to share data with partners (for model training collaboration, etc.), do it securely. Use secure file transfer, and consider sharing through federated learning (where raw data stays on their site, only model updates are exchanged) to avoid large data dumps. Always have a data processing agreement in place outlining security expectations.

Monitor Data Access: Enable logging on all data repositories. Use automated tools to flag unusual data access patterns (e.g., large export of records, or access at odd hours by a user who never did that before). This helps catch both insiders and external intruders early.
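The two access patterns named above (large exports and access at odd hours) can be expressed as simple rules over access logs. The sketch below is illustrative only: the event fields, the 10,000-row threshold, and the per-user working hours are assumptions, and commercial tools typically learn such baselines automatically.

```python
from dataclasses import dataclass


@dataclass
class AccessEvent:
    user: str
    hour: int  # 0-23, local hour of the access
    rows_exported: int


def flag_unusual(events, usual_hours, max_rows=10_000):
    """Return (event, reason) pairs for large exports or access outside a user's usual hours."""
    flagged = []
    for event in events:
        if event.rows_exported > max_rows:
            flagged.append((event, "large export"))
        elif event.hour not in usual_hours.get(event.user, range(24)):
            # Users without a known baseline are never flagged on hour alone.
            flagged.append((event, "unusual hour"))
    return flagged


# Hypothetical log entries and baselines.
events = [
    AccessEvent("dana", 14, 200),
    AccessEvent("dana", 3, 150),
    AccessEvent("lee", 10, 50_000),
]
usual_hours = {"dana": range(8, 19), "lee": range(8, 19)}

for event, reason in flag_unusual(events, usual_hours):
    print(event.user, reason)
```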

By locking down the data, you not only reduce breach risk, you also maintain higher data quality (since fewer hands touch it, fewer chances to corrupt or mislabel it, whether accidentally or maliciously).

Step 3: Harden and Secure AI Models and Infrastructure

With data under guard, turn to the models and the systems they run on:

Secure Development Practices: Instruct AI developers to follow secure coding and configuration practices. For example, never hardcode credentials in notebooks or scripts, sanitize all inputs (even internal ones, to avoid unexpected formats causing issues), and validate outputs where possible. Incorporate security checks in code reviews for model code.

Environment Isolation: Isolate environments for development, testing, and production. Development environments are more open (for flexibility) but shouldn’t connect to production data. Production AI environments (where the live models run) should be treated like production servers: minimal software installed, locked-down configurations, and network restrictions (e.g., your production ML server probably doesn’t need to reach out to the internet except to specific services). Containerization can help maintain consistency and isolate dependencies.

Adversarial Robustness: For each model, consider performing adversarial testing. Use frameworks like IBM’s Adversarial Robustness Toolbox or Microsoft’s Counterfit to simulate attacks on your models. For instance, see if adding noise to an input drastically changes the output. Any model going into a critical application should go through this gauntlet. If weaknesses are found, retrain with adversarial examples or adjust the model architecture.
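The "add noise and see if the output changes" check can be sketched without any particular toolkit. The function below measures how often a model's predicted label flips under small random perturbations. The `predict` callable, the toy threshold model, and the noise scales are assumptions for illustration. Random noise is a weak baseline compared with the targeted attacks that dedicated frameworks run, so a low flip rate here is necessary rather than sufficient.

```python
import random


def flip_rate(predict, inputs, noise_scale, trials=20, seed=0):
    """Fraction of noisy copies whose predicted label differs from the clean prediction."""
    rng = random.Random(seed)
    flips = 0
    total = 0
    for x in inputs:
        clean_label = predict(x)
        for _ in range(trials):
            noisy = [xi + rng.uniform(-noise_scale, noise_scale) for xi in x]
            flips += predict(noisy) != clean_label
            total += 1
    return flips / total if total else 0.0


def toy_model(x):
    """Hypothetical stand-in for a real model: label 1 when the features sum above 1.0."""
    return int(sum(x) > 1.0)


confident_inputs = [[0.1, 0.1], [0.9, 0.9]]  # far from the decision boundary
borderline_inputs = [[0.5, 0.51]]            # right next to it

print(flip_rate(toy_model, confident_inputs, noise_scale=0.05))
print(flip_rate(toy_model, borderline_inputs, noise_scale=0.5))
```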

Regular Patching: Keep the ML frameworks and libraries on your servers up to date, since outdated libraries can have known exploits. The challenge is that updating frameworks can sometimes affect model performance (due to version changes), so work to a schedule: for example, every quarter, update a non-critical environment first, test that your models still work, then roll the update out to production. Treat it like Patch Tuesday in IT.

Authentication and Authorization for Models: If a model is provided as a service (say via REST API or embedded in an app), ensure only authenticated calls can reach it. Don’t allow anonymous access to a sensitive model. Use API keys or OAuth tokens, and implement scopes/roles if certain users should only get certain functionality. Internally, if multiple services call the model, use service accounts and not generic credentials. This prevents someone from just pinging your model URL and using it endlessly (or abusing it).
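A minimal sketch of key-plus-scope checking for a model endpoint might look like the following. The key values, scope names, and in-memory store are hypothetical and exist only to make the example runnable. In a real service the keys would be issued and stored by an identity provider or secrets manager, never written into source code.

```python
import hashlib
import hmac


def _digest(key: str) -> str:
    """Store and compare only hashes of keys, not the keys themselves."""
    return hashlib.sha256(key.encode("utf-8")).hexdigest()


# Demo values only: maps the hash of each issued key to its granted scopes.
API_KEYS = {
    _digest("demo-key-analytics"): {"predict"},
    _digest("demo-key-admin"): {"predict", "explain", "reload"},
}


def authorize(presented_key, required_scope: str) -> bool:
    """Allow a call only if the key is known and carries the required scope."""
    if not presented_key:
        return False  # anonymous calls are refused
    presented = _digest(presented_key)
    for stored, scopes in API_KEYS.items():
        # Constant-time comparison avoids leaking match progress through timing.
        if hmac.compare_digest(presented, stored):
            return required_scope in scopes
    return False


print(authorize("demo-key-analytics", "predict"))  # known key, granted scope
print(authorize("demo-key-analytics", "reload"))   # known key, missing scope
print(authorize(None, "predict"))                  # anonymous
```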

Output Filters: For generative AI or any AI that produces content (text, images), build an output filtering layer for safety. This might involve checking the output for sensitive data (to prevent leaks) or policy violations (to prevent disallowed content). Many companies have separate AI models or regex rules to scan outputs before they’re shown to users. While not foolproof, it adds a safety net.
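The regex-rule variant of such a filter can be sketched as follows. The two patterns (US Social Security numbers and email addresses) and the redaction format are illustrative assumptions. A real deployment would cover more data types and would likely pair rules like these with a classifier.

```python
import re

# Illustrative patterns only; real filters need broader coverage.
PATTERNS = {
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "email": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
}


def filter_output(text: str):
    """Redact sensitive matches and report which pattern types were found."""
    findings = []
    for label, pattern in PATTERNS.items():
        text, count = pattern.subn(f"[REDACTED {label.upper()}]", text)
        if count:
            findings.append(label)
    return text, findings


safe_text, findings = filter_output("Contact jane.doe@example.com, SSN 123-45-6789.")
print(safe_text)
print(findings)
```

Returning the list of findings alongside the redacted text lets the calling service log the event and raise an alert, which ties the filter into incident monitoring instead of silently hiding the problem.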

Resource and Budget Controls: Another aspect of security is preventing abuse of your AI resources (which could be costly). For instance, limit how long training jobs can run or how many resources they consume to mitigate crypto-mining malware or runaway jobs. In cloud environments, set budget alarms so an anomaly in usage (which could indicate misuse) gets noticed quickly.

Step 4: Monitor, Detect, and Respond

No defense is 100% impenetrable. Assume that at some point, something will go wrong – an attack will slip through or an insider will make a mistake. The difference between a minor incident and a full-blown disaster often lies in detection and response.

Continuous Monitoring: Implement monitoring on all layers of the AI stack. This includes traditional security monitoring (intrusion detection systems, SIEM aggregating logs from servers, etc.) but also AI-specific monitoring. For example, monitor model prediction distributions – if suddenly a model that usually outputs a variety of results starts outputting a single category overwhelmingly, that could indicate tampering or a problematic drift. Monitor data pipelines for changes in data patterns (if someone poisoned your training data, the data profile might shift). User behavior monitoring, as mentioned, is key for catching insider issues.
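One simple way to watch a model's prediction distribution is to compare recent label frequencies against a baseline using total variation distance. The sketch below assumes a classifier with discrete labels. The label names, sample counts, and the 0.2 alert threshold are hypothetical and would need tuning per model.

```python
from collections import Counter


def class_frequencies(labels):
    """Convert a list of predicted labels into relative frequencies."""
    counts = Counter(labels)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {label: n / total for label, n in counts.items()}


def total_variation_distance(baseline, recent):
    """Half the L1 distance between two label distributions: 0 is identical, 1 is disjoint."""
    p = class_frequencies(baseline)
    q = class_frequencies(recent)
    return 0.5 * sum(abs(p.get(k, 0.0) - q.get(k, 0.0)) for k in set(p) | set(q))


# Hypothetical prediction logs from a loan-screening model.
baseline = ["approve"] * 50 + ["review"] * 30 + ["reject"] * 20
recent = ["approve"] * 98 + ["review"] * 1 + ["reject"] * 1

distance = total_variation_distance(baseline, recent)
ALERT_THRESHOLD = 0.2  # assumption: tune per model
print(f"distance={distance:.2f}, alert={distance > ALERT_THRESHOLD}")
```

A large shift does not say why the distribution moved. It may be tampering, poisoned data, or a legitimate change in incoming traffic, so the alert should trigger investigation rather than an automatic verdict.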

Anomaly Detection for AI behavior: It’s fitting to use AI to secure AI – deploy anomaly detection on your system metrics. There are tools that learn normal CPU, memory, and network usage for your AI servers and can alert on deviations (could indicate crypto-jacking malware or unauthorized processes). Similarly, monitor query patterns to your AI APIs – if normally you get 100 requests per hour and suddenly it’s 10,000, either you got popular (good) or you’re under attack or being scraped (bad).
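The request-rate example above can be turned into a basic statistical check. This sketch flags an hourly request count that sits far above the historical mean, measured in standard deviations. The history values and the threshold of 4 are assumptions, and real traffic with daily or weekly cycles would need a seasonal baseline instead of a single mean.

```python
import statistics


def is_rate_anomalous(history, current, z_threshold=4.0):
    """Flag `current` if it lies more than z_threshold standard deviations above the mean."""
    mean = statistics.fmean(history)
    stdev = statistics.pstdev(history)
    if stdev == 0:
        return current > mean  # perfectly flat history: any increase stands out
    return (current - mean) / stdev > z_threshold


# Hypothetical hourly request counts for a model API that usually sees about 100 calls.
history = [95, 102, 110, 98, 105, 100, 97, 103]

print(is_rate_anomalous(history, 108))     # ordinary fluctuation
print(is_rate_anomalous(history, 10_000))  # sudden spike worth investigating
```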

Incident Response Plan (IRP) for AI Incidents: Update your organization’s IRP to include scenarios involving AI. This means defining playbooks for things like “AI model outputting incorrect/reckless results due to suspected attack” or “data leak via AI service.” Who gets alerted? Likely not just IT: bring in the data science lead, legal (if customer data is involved), and PR if the system is public-facing. Have a strategy for pulling a model from production if needed (rollback to a previous model version, or switch to a dumb but safe rule-based system as a stopgap). If a breach occurs, know your regulatory reporting obligations, for example which authorities to notify of a personal data breach under GDPR and whether you need to inform customers. Time is of the essence, so pre-draft some communication templates.

Tabletop Exercises: Run drills for AI-specific incidents. Tabletop exercises (simulating an incident with the key stakeholders in a room) are great for normal cyber incidents and equally useful for AI. For instance, simulate a scenario: “Our customer service AI starts revealing other customers’ data in its responses – go!” Walk through how teams investigate the root cause (bug or attack?), what steps they take (shut off the AI, revert to human support, etc.), and how they’d communicate. This exposes gaps in your processes in a safe way and prepares everyone for the real thing.

Logging and Forensics: Ensure that systems are logging enough information to investigate incidents after the fact. If an AI made a bad decision, can you trace why? (Which data was used, which version of the model, who deployed that version, etc.) This is crucial not only for fixing the issue but also for demonstrating due diligence to regulators or in court if needed. Maintain archives of model versions and training data snapshots – if a model was compromised, you may need to do a forensic analysis on the version used at that time.
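To answer "which data, which model version, who deployed it" after the fact, each prediction needs a log entry that ties those pieces together. The sketch below shows one possible record format. The field names and example values are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(model_name, model_version, deployed_by, features: dict, prediction) -> str:
    """Build one JSON log line linking a prediction to its model version and input hash."""
    # Canonical JSON (sorted keys, fixed separators) makes the hash independent of key order.
    canonical_input = json.dumps(features, sort_keys=True, separators=(",", ":"))
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_name": model_name,
        "model_version": model_version,
        "deployed_by": deployed_by,
        "input_sha256": hashlib.sha256(canonical_input.encode("utf-8")).hexdigest(),
        "prediction": prediction,
    }
    return json.dumps(record, sort_keys=True)


# Hypothetical call from a credit-scoring service.
line = audit_record("credit_scorer", "1.4.2", "alice",
                    {"income": 52000, "age": 41}, "approve")
print(line)
```

Logging a hash rather than the raw input keeps personal data out of the log stream, at the cost of needing the original input stored elsewhere (under its own access controls) if investigators must reconstruct the decision.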

Step 5: Govern and Continuously Improve

AI security isn’t a one-off project – it’s an ongoing part of governance and risk management. To sustain and improve your posture:

Establish an AI Governance Team or Committee: This group (which could be part of an existing risk committee) should include stakeholders from IT security, data science, legal/compliance, and business units using AI. They meet regularly to review AI projects, assess risks, and decide on controls. They also keep policies up to date and ensure new projects undergo security review. Basically, they are the cross-functional brains that keep AI use aligned with company risk appetite and regulatory obligations.

Security Awareness for AI Teams (and Vice Versa): Train your data scientists and ML engineers in cybersecurity basics and how attacks can manifest in AI systems. Conversely, educate your security teams about AI systems so they know what to watch for. This cross-pollination is important – you might even designate certain security engineers as “AI security champions” embedded with AI teams. Developers who understand security will build inherently safer systems; security folks who understand AI will deploy more relevant protections.

Stay Informed on AI Threat Landscape: The AI threat landscape is evolving. New research papers on attacks and defenses come out constantly. Assign someone (like those AI security champions) to keep tabs on developments. Participate in industry forums, subscribe to AI security newsletters, attend conferences (there are now niche conferences on adversarial ML and AI safety). Knowing the latest attack techniques allows you to proactively adapt. For example, if a new way to extract text from language models is published, you can test your models against it immediately.

Metrics and Reporting: Develop metrics to track your AI security posture. These could include the number of AI systems reviewed for security before deployment, the percentage of models with adversarial training, and the mean time to detect and respond to an AI incident. Report these to senior management or the board as part of overall cybersecurity reporting. When executives see these metrics regularly, it reinforces that AI security is part of business as usual. It also helps justify budgets for improvements.

Review and Audit: Periodically audit the security of AI systems. This can be internal audits or even third-party assessments for an objective review. They might find, for instance, that a dev environment has lingering sensitive data or that a deprecated model still has an open API endpoint. Use findings to fix issues and feed back into policy updates. Additionally, if you fall under regulations like the AI Act, you might be required to undergo conformity assessments – preparing via internal audits gets you ready for that.

By following these steps, organizations can construct a defense in depth for AI – protecting the data, the models, and the surrounding infrastructure while embedding security thinking into the AI development lifecycle. Crucially, this strategy is actionable: it breaks down the nebulous problem of “AI security” into concrete practices that align with traditional cybersecurity and governance frameworks.

6. Conclusion: Secure Your AI, Reap the Rewards

AI offers transformative benefits – efficiency, insights, personalization, automation at scale – but it also introduces novel risks that can’t be ignored. As we’ve explored, threats like data breaches, adversarial attacks, model theft, and insider mishaps can undermine an AI initiative or even an entire business. The evolving patchwork of regulations globally further raises the stakes for getting AI security and ethics right.

For executives, the mandate is clear: make AI security and risk management a core part of your AI strategy. It’s not merely an IT problem; it’s enterprise risk management in the age of AI. This means investing appropriately – whether that’s new tools (for monitoring or testing), hiring or training talent in AI security, or bringing in experts to audit and bolster your defenses.

The good news is that by addressing these challenges head-on, you’re not stifling innovation – you’re enabling it. Teams that know there’s a safety net and guidelines are actually freer to innovate (much like how clear financial controls give finance teams confidence to operate). When your AI is secure and well-governed, you can scale it up without as much fear of the unknown. You can proudly explain to customers and regulators how your AI works and how you protect their interests, turning security and compliance into a competitive advantage rather than a roadblock.

In practice, organizations that excel in AI security follow a few key takeaways:

Adopt a holistic approach: Technical fixes alone aren’t enough; organizational processes and people must be part of the solution. Make it a company-wide effort.

Stay proactive: Don’t wait for an incident to implement protections. Use threat modeling and scenario planning to anticipate what could happen, and put measures in place in advance.

Learn and adapt: The AI field evolves quickly. What’s secure today might not be tomorrow. Embrace a continuous improvement mindset – regularly update models, defenses, and policies as new knowledge comes in.

Foster a culture of security and ethics in AI teams: Encourage your AI developers to think about the “dark side” of their creations. Perhaps even implement an internal “red team vs blue team” exercise where one group tries to break the other’s model. Make it a positive challenge, not a punitive exercise.

By securing AI systems against threats and aligning with regulatory expectations, you unlock the true value of AI with minimized downside. It builds trust – users trust your AI won’t spill their data or make a crazy, harmful mistake; investors and partners trust that you manage emerging risks responsibly; and regulators see you as a mature player in the AI space.

In essence, safeguarding your AI is safeguarding your future. Companies that get this right will ride the AI wave to new heights of success. Those that don’t might find their AI dreams dashed by an avoidable nightmare. With the fundamentals and strategies outlined in this guide, any organization can start building an AI security shield around their hard-won innovations. Now is the time to act – assess your AI risks, implement the best practices, and turn AI security into one of your firm’s core strengths. Your AI is powerful; make it secure as well, and it will serve you for years to come.

© 2025 HIGTM. All rights reserved.