36. Data Warehousing vs. Data Lakes – Key distinctions for SMEs

Modern SMEs are collecting more data than ever – from sales transactions and customer interactions to social media feedback and IoT sensor readings. But simply having data isn’t enough; how you store and manage that data determines whether you get actionable insights or just information overload. This is where the concepts of data warehousing and data lakes come in. Both are popular data management solutions, but they serve different purposes. For a small or mid-sized enterprise aiming to adopt AI and improve decision-making, understanding these concepts and their key distinctions is critical. In this comprehensive guide, we’ll explore Data Warehousing vs. Data Lakes – Key Distinctions for SMEs, covering definitions, use-cases, integration with AI, cost considerations, and best practices. By the end, you’ll have a clearer idea of which approach (or combination) suits your business, backed by real examples and expert tips, so you can make an informed decision on your data strategy. Let’s dive in!

Q1: FOUNDATIONS OF AI IN SME MANAGEMENT - CHAPTER 2 (DAYS 32–59): DATA & TECH READINESS

Gary Stoyanov PhD

2/5/202543 min read

1. Definition & Core Differences

1.1 What is a Data Warehouse?

A data warehouse is a centralized repository that stores structured, processed data from various sources, designed for efficient querying and analysis. In a data warehouse, data is cleaned, transformed, and organized into a consistent schema before storage. This schema-on-write approach means the data warehouse has a predefined structure (tables, columns, data types) optimized for SQL queries and business intelligence uses.

Think of it as a highly organized library of data: information is indexed in known categories so that anyone (with permission) can quickly retrieve insights. Typically, data warehouses contain historical data that represents a “single source of truth” for the business – for example, consolidated sales figures, financial records, or inventory levels over time. The primary goal is to support reporting, dashboards, and other business intelligence (BI) activities. Because the data is structured and often aggregated, users can run complex queries and get results fast. In short, a data warehouse turns raw data into a form where “little or no data prep is needed” for analysis, making it easier for analysts and managers to use data directly.

1.2 What is a Data Lake?

A data lake, in contrast, is a massive storage repository that holds raw data in its native format, encompassing structured, semi-structured, and unstructured data. There is no predefined schema imposed on data when it enters the lake – this is known as schema-on-read, since structure is applied only when the data is retrieved for analysis. You can imagine a data lake as a huge digital lake fed by many data streams: transactional databases, CRM systems, images, logs, PDFs, social media feeds – all these can flow into the lake and be stored side by side. The purpose of data in a lake is not immediately defined.

This means data lakes are ideal for situations where you want to collect everything and decide later how to use it. They are highly scalable (especially with cloud object storage, you can virtually store unlimited data) and flexible. A data lake accepts raw data “as-is,” which has two big implications: (a) Storage is inexpensive (you’re often using low-cost storage and not spending effort on transformation upfront), and (b) Extracting value requires analysis tools or expertise – since the data is not pre-structured, data scientists or specialized tools are needed to process and analyze it. Data lakes shine for advanced analytics, machine learning, and big data projects because they retain all the original data fidelity. As one source puts it, retaining raw data allows analysts to “look for nuances that might not have appeared... in the past” and enables true predictive analytics. However, without proper management, a data lake can become disorganized (often termed a “data swamp”).

1.3 Key Architectural Distinctions

From the definitions, it’s clear that the fundamental difference between a warehouse and a lake lies in how they handle data structure and purpose. Let’s summarize the core distinctions:

Schema and Data Processing: A data warehouse uses schema-on-write, meaning data is transformed and normalized before entry. For example, when pulling sales data from different store databases into a warehouse, an SME will clean it, remove errors, and fit it into a common sales table schema. In a data lake, using schema-on-read, the same data could be dumped in raw (including, say, CSV files or JSON logs) and only cleaned or structured when a query is made or when it’s loaded into an analysis tool. This makes warehouses slower to set up but faster to query, and lakes faster to set up (just load everything) but potentially slower or more complex to use later.

Data Types: Data warehouses deal primarily with structured data (and some semi-structured data if they have capabilities for JSON, etc., but it’s organized upon entry). They are not meant to store things like images, free-form text, or binary logs in their raw form. Data lakes can store structured, semi-structured, and unstructured data all together. For instance, an SME’s data lake might contain a mix of relational data tables, text files with customer reviews, multimedia files, sensor readings, etc. This means warehouses are optimal for relational data (think tables you can put in Excel), while lakes accommodate everything else in addition to relational data.

Storage & Cost: Because of their different approaches, warehouses and lakes have different cost profiles. A warehouse often uses storage that is part of a database system (on SSDs or other high-performance media, especially in cloud warehouses) optimized for speed. This can cost more per gigabyte. Additionally, the processing (ETL – Extract, Transform, Load) that populates a warehouse adds to cost (in infrastructure and labor). A data lake typically uses cheap storage (like Amazon S3 or Hadoop distributed file system) where you pay very little for storing each GB, and you can store huge volumes cost-effectively. One trade-off is that to query data in a lake, you often spin up processing engines (Spark, EMR, data warehouse engines reading from the lake, etc.), incurring cost at query time. For SMEs, this often means a warehouse might have a higher ongoing cost if you’re paying for an active data warehouse service 24/7, whereas a lake might cost very little until you actually run a big analysis job. Sources note that data lakes are “a much less expensive alternative” to warehouses for storage, though warehouses provide performance benefits in return. We’ll delve more into cost in Section 4.

Users & Accessibility: Who uses the data also differs. In many organizations, business analysts and managers are the main consumers of data warehouse information – via BI tools, reports, or direct queries. The data is structured to answer known questions (e.g., “What were last quarter’s sales by region?”). Data lakes are often the domain of data scientists, data engineers, or savvy analysts who are comfortable working with raw data to discover new insights. That said, there are self-service tools emerging to make lake data more accessible. For an SME, if you have no in-house data science capability and just need routine metrics, a warehouse is more immediately useful. If you have (or plan to hire) data scientists or use AI services that need large raw datasets, a lake becomes more attractive.

In summary, a data warehouse is optimized for structure, speed, and known analytics, whereas a data lake is optimized for flexibility, scale, and unknown future uses. Many businesses find that both have a place in a modern data architecture. In fact, it’s common to see an architecture where a data lake is the first landing zone for all data, and then important structured subsets are fed into a data warehouse for easy access – ensuring nothing is lost, but day-to-day business users have the curated data they need. In the following sections, we’ll explore how SMEs can choose one or the other (or both), and what factors to consider.

2. Choosing the Right Solution for SMEs

Not long ago, data warehouses were seen as the domain of larger enterprises, while data lakes were often experimental projects at tech giants or research firms. Today, thanks to cloud technology, SMEs can leverage both data warehouses and data lakes without massive investments. The challenge is determining which solution aligns best with your business goals, data characteristics, and resources. Here, we discuss when an SME should use a data warehouse vs. a data lake, provide common business use cases for each, and touch on industry-specific applications.

2.1 When an SME Should Use a Data Warehouse

For many small and mid-sized businesses, a data warehouse is the entry point into advanced data analytics. You should consider a data warehouse if:

You have predominantly structured data from operational systems. If most of your crucial data comes from structured sources – e.g., a relational database from your ERP or CRM, Excel sheets, online store transactions – a warehouse can integrate these into one structured whole. SMEs often use multiple SaaS tools (accounting software, CRM, inventory management), and a warehouse can pull together data from all these sources into a unified schema.

You need reliable reporting and dashboards. When the goal is to produce consistent reports (sales trends, financial summaries, performance KPIs) on a regular basis, warehouses shine. They enforce data quality and consistency. For example, an SME retailer using a warehouse can ensure that all regional sales reports use the same definition of “net sales” and reference the same up-to-date data. The warehouse becomes the “single source of truth” for the company. This is especially valuable if you’ve had issues with teams having conflicting numbers.

Your queries are known and repetitive. If you know the kinds of questions you need answered every day or quarter (e.g., “What’s our customer lifetime value by cohort?” or “What is the monthly supply chain cost?”), you can design the warehouse schema to answer those efficiently. Data warehouses are optimal for structured query patterns. Business analysts can write SQL or use a BI tool to get results in seconds, because the data is pre-aggregated or indexed for those queries.

You have limited data science capabilities (or none). If you don’t have a data science team and aren’t planning complex machine learning in the immediate future, a warehouse might cover all your needs. It’s generally easier for non-technical users to interact with a warehouse (through BI tools or even direct Excel connections) than a data lake.

Examples of use-cases:

Historical trend analysis: SMEs can load years of sales data into a warehouse and easily query year-over-year trends, product performance, etc.

Financial reporting and auditing: Warehouses provide the accuracy and completeness required for financial statements. Once data is in the warehouse, it’s often considered auditable because it’s been through checks and balances.

Operational dashboards: For instance, a supply chain dashboard that draws from inventory, procurement, and shipping data – a warehouse can merge these streams and present up-to-date metrics.

In essence, an SME should use a data warehouse when the primary goal is to support business decision-making with trustworthy, quick analytics on structured data. As one article notes, SMEs can improve strategic decision-making in every function by leveraging data warehousing – from sales to supply chain to HR. It brings the big data benefits to the small business in a manageable way.

2.2 When an SME Should Use a Data Lake

A data lake might seem like a heavyweight solution more fitting for tech giants, but there are scenarios where it makes sense for an SME to invest in one:

You deal with diverse data types or big data volumes. If your business naturally collects a lot of unstructured or semi-structured data, a data lake is a suitable repository. For example, a manufacturing SME with IoT sensors might gather thousands of readings per second from equipment. Storing all that raw sensor data in a structured warehouse could be impractical or expensive. A data lake on cheap storage can handle it. Likewise, if you collect things like customer support chat logs, social media comments, or high-volume web analytics, a lake will store this data without forcing it into a predefined schema.

You plan to implement AI or advanced analytics that go beyond standard reporting. Data lakes are the launchpad for AI and machine learning. Suppose an SME in e-commerce wants to build a recommendation engine or a customer churn predictor – they will benefit from having a rich data lake that includes raw web clickstreams, user reviews, customer profiles, and more. Machine learning algorithms often improve with access to raw, granular data (including historical data), which the lake provides. In fact, having raw data accessible enables analytics that were not even envisioned when the data was first stored.

Flexibility and “future-proofing.” Maybe today you don’t have a use for unstructured data, but you want to archive it because tomorrow you might. Data lakes allow SMEs to future-proof their data strategy. For instance, a healthcare clinic (SME) might start storing anonymized diagnostic images or patient device data in a lake even if they’re not analyzing it yet, with the idea that down the line they could apply image recognition AI or health analytics. If you suspect some data could be valuable later, putting it in a lake now ensures it’s available when you’re ready.

You want a unified data repository to complement a warehouse. Some SMEs purposefully choose both: the warehouse for daily needs and a lake for “overflow” or raw data. The lake can feed the warehouse after refining, but it also keeps a copy of everything. This way, if a new question arises that the warehouse isn’t designed for, analysts can go to the lake and explore. This hybrid approach is increasingly common, even for mid-market companies, as it balances immediate usability with long-term exploration.

Examples of use-cases:

Data science and innovation projects: A marketing company (SME) could store a vast collection of ad impression data, clicks, and social media engagement in a lake. Data scientists can then train predictive models for ad performance or analyze sentiment – tasks not feasible on a summarized data set.

Detailed logging and audit trails: An SME software firm might keep detailed application logs, user event data, and error reports in a lake. If an issue comes up or for compliance, they can query these logs (possibly with tools like AWS Athena or Azure Data Lake Analytics) on demand.

Combining external data: SMEs might use open datasets or third-party data (market data, weather data, etc.) to augment their analysis. Dumping these into a lake without worrying about format conflicts is easier. The raw external data sits in the lake until needed, when it can be combined with internal data to discover insights (for example, seeing if weather patterns affect retail foot traffic, by combining weather data files with sales data).

In summary, an SME should lean toward a data lake if they prioritize flexibility, handle lots of unstructured data, or have strategic plans for advanced analytics/AI that require detailed data. Even smaller businesses can benefit – as one business analytics firm notes, even small businesses will be amazed at the benefits of going with a data lake because of its unstructured nature. These include real-time decision making, more analytics options, and easier machine learning use, all of which can apply to SMEs looking to innovate.

2.3 Common Business Use Cases and Industry Applications

To make these ideas more concrete, let’s look at how different industries or business scenarios might favor a warehouse or a lake (or a combo).

Retail SME: A boutique retail chain might use a data warehouse to consolidate sales data from point-of-sale systems and e-commerce, producing daily sales dashboards and inventory reports. This structured approach helps optimize stock levels and analyze sales by product category easily. At the same time, they could maintain a data lake for customer behavior data – website clickstreams, social media mentions, loyalty program interactions – aiming to later analyze it for personalization or to train an AI model for product recommendations. Retailers benefit from warehouses for immediate operational insights, and lakes for deeper customer analytics (like combining CRM, point-of-sale, and online behavior data to understand the full customer journey).

Healthcare SME (Clinic or Small Hospital): Such an organization might use a data warehouse to store structured electronic health record (EHR) data, billing information, and appointment schedules, enabling standard reporting on patient counts, revenue, etc. Concurrently, a data lake could hold unstructured data like medical images, doctor’s notes, sensor readings from medical devices, and genomic data (if applicable). The lake can facilitate research and advanced diagnostics (for example, applying machine learning to MRI images or analyzing patterns in patient device data), while the warehouse ensures day-to-day operational metrics (like readmission rates, bed occupancy) are easily accessible.

Financial Services SME: A small finance company or credit union could leverage a data warehouse for regulatory reporting and financial analysis – aggregating transactions, loans, customer profiles into a schema that ensures consistency and accuracy (important for compliance and audits). Meanwhile, a data lake can store detailed transactional logs, market data feeds, and even text from customer inquiries. This lake could be used to detect fraud patterns using AI (fraud detection models often require analyzing large volumes of transactions for anomalies), or to do risk modeling by combining many data sources.

Manufacturing SME: In manufacturing, a data warehouse might integrate data from ERP (production volumes, supply chain data) and quality control systems to track KPIs like defect rates, output, and costs per unit. This helps in operational decision-making and reporting to management. A data lake can absorb IoT sensor data from machines on the factory floor (temperatures, vibration readings, etc.) and maintenance logs. Using that data, the company can implement predictive maintenance – predicting machine failures or optimizing maintenance schedules via machine learning. The unstructured IoT data (which can be huge in volume) lives in the lake, and insights from it (like an alert or a summarized dataset of predicted failures) could even feed back into the warehouse for broader visibility.

Consulting/Service SME: A professional services or consulting SME might primarily need a warehouse to consolidate data from project management tools, timesheets, CRM, and finance software to analyze project profitability and consultant utilization rates. If they start capturing more varied data – say, client feedback forms (text data) or market research data – they might add a data lake to store that. If this consulting firm wants to build an AI tool (for instance, a model to predict project success factors from past data), having a data lake with all historical project documents and communications could be invaluable for training such a model.

These examples illustrate a pattern: warehouses often address immediate, structured data needs across industries, while lakes handle the innovative, exploratory, or large-scale data opportunities unique to each field. Importantly, many SMEs find value in using them together. In fact, one source emphasizes that the choice isn’t about picking one over the other anymore – “Both are essential” for mid-sized organizations looking to get the most from their data. The key is understanding your current needs and future ambitions. An SME deeply focused on BI might start with a warehouse; one focused on data exploration might start with a lake; and many will eventually use both as they mature their data capabilities.

3. Integration with AI & Analytics

One of the primary drivers for SMEs to invest in modern data solutions is the promise of better analytics and AI capabilities. But data warehouses and data lakes play different roles in the analytics ecosystem. In this section, we discuss how each supports business intelligence (BI) and reporting, how they enable advanced AI and machine learning, and highlight some SME-friendly cloud solutions for implementing these with minimal friction.

3.1 Data Warehouses for Business Intelligence and Reporting

Data warehouses have been around for decades, largely because they excel at supporting traditional analytics – namely, business intelligence (BI), reporting, and OLAP (online analytical processing). Here’s how warehouses integrate with analytics in an SME context:

Straightforward BI Integration: Most BI tools (Tableau, Power BI, Looker, etc.) are optimized to query structured data. Connecting a BI dashboard to a SQL data warehouse is usually plug-and-play. SMEs using a warehouse can easily set up dashboards that refresh daily or even in real-time if the warehouse is updated continuously. The performance is typically high, meaning even non-technical users can drag-and-drop fields to create reports without writing complex code.

Support for Descriptive & Diagnostic Analytics: A warehouse is ideal for descriptive analytics (summarizing what happened, e.g., monthly sales, website traffic over time) and diagnostic analytics (why something happened, e.g., sales dropped because foot traffic decreased in certain stores). Because data warehouses often store historical data, you can do year-over-year comparisons, trend analysis, etc., quite easily. And since the data is cleaned and unified, you can join data across subjects (e.g., linking marketing spend data with sales outcomes) to find correlations and causes.

Data Marts for Departments: In some cases, SMEs create smaller “data marts” from the main warehouse for specific departments (finance, marketing, etc.). This is an approach where you give each team a simplified view of the data relevant to them, often for performance reasons or to simplify their analysis. Data warehouses support this concept (some call it the “enterprise warehouse” with departmental data marts). For instance, a marketing data mart might aggregate campaign results, while a finance data mart focuses on revenue and expenses – all sourced from the same master data warehouse but tailored to the audience.

Example – Traditional BI in Action: Consider a chain of cafes (SME). They’ve implemented a cloud data warehouse that pulls data from point-of-sale systems, a loyalty app, and supply chain spreadsheets. Using a BI tool connected to this warehouse, the management can see daily dashboards of total sales, compare which locations are performing best, and drill down into what menu items are popular. If something looks off (say one cafe’s sales dipped last week), they can investigate by querying the warehouse for that location’s data specifically, perhaps noticing that a local event impacted foot traffic. This kind of analysis is intuitive with a warehouse because the data is structured around the business’s key entities (stores, products, time, etc.).

Overall, data warehouses are the backbone of BI. They ensure that when you’re looking at a chart or report, it’s based on consistent, well-defined data. For SMEs, this means less time reconciling numbers and more time making decisions. Many SMEs find that after implementing a data warehouse, their reporting efficiency skyrockets and the trust in data improves, since everyone is using the same numbers rather than siloed spreadsheets.

3.2 Data Lakes for Advanced AI and Machine Learning

If data warehouses are for traditional BI, data lakes are the launching pad for advanced analytics, especially AI and machine learning. Here’s how data lakes facilitate these in an SME environment:

Holistic Data for AI: AI models (like machine learning algorithms) often perform better with more data – both in volume and variety. Data lakes, by capturing every detail in its original form, provide a rich training ground for AI models. For example, a small e-commerce player might want to build an AI model to recommend products. To do this well, they’d like to use browsing history, purchase history, customer reviews, product images, and perhaps customer service chat transcripts. All these different data types can be housed in a data lake. An analyst or data scientist can then pull them together to train a model (perhaps using Python or R on a subset of data loaded from the lake). Without a lake, gathering and prepping this data from disparate sources would be a huge hurdle.

Enabling Predictive & Prescriptive Analytics: Beyond descriptive (what happened) and diagnostic (why), the next stages of analytics are predictive (what is likely to happen) and prescriptive (what we should do about it). Data lakes are particularly aligned with these because they have the historical raw data needed to find patterns and the breadth of data to consider many factors. For instance, predictive maintenance on equipment requires analyzing patterns in sensor data to foresee failures – a lake can store years of sensor readings and error logs that a machine learning model can crunch to predict an outage. According to one source, storing raw data in a central lake is “much more helpful in machine learning” than only having pre-aggregated data, precisely because it preserves nuance.

Experimental Analysis and Data Discovery: An SME might not know immediately what questions to ask of their data. Data lakes allow data discovery – you can run algorithms to find clusters in customer behavior, do text mining on customer feedback to see themes, or try out new metrics – without the constraint of having pre-modeled the data. For example, a data scientist might explore an SME’s log data to see if there are usage patterns that correlate with churn, something that wasn’t a predefined metric. Lakes give that freedom to experiment.

Integration with Big Data Tools: A rich ecosystem of big data and AI tools plug into data lakes. Frameworks like Apache Spark can be used to process lake data in large scale; Python notebooks can connect to lakes for iterative analysis; machine learning services (like Amazon Sagemaker, Azure ML, or Google Cloud AI) can directly consume data from lakes. Even if an SME doesn’t have all these in-house, many cloud providers offer managed versions. For example, AWS has Glue and Athena to prepare and query data in S3 (data lake storage), and then you can feed that into an ML service. These tools abstract a lot of technical complexity, meaning a reasonably skilled analyst (or a consulting partner) can perform big data analytics without building everything from scratch.

Example – Data Lake fueling ML: Imagine a small online education company that collects data from its learning platform: clickstreams of how students navigate courses, time spent on each lesson, quiz results, and even written feedback. They store all this in a data lake on the cloud. They decide to build a machine learning model to predict which students are likely to drop out of a course. Because they kept all the data, they can engineer a variety of features for the model – e.g., frequency of logins, variability in quiz scores, sentiment of feedback comments. By training the model on this rich dataset (accessible thanks to the lake), they achieve a high accuracy. The model might reveal that students who don’t watch videos fully and submit negative feedback early are at high risk of dropping – insight that could help the company intervene earlier. If the data had been pruned or summarized too early (say they only kept course completion rates in a warehouse), these insights might never surface.

For SMEs, setting up a data lake specifically for AI can be a strategic move if they anticipate value from AI projects. However, it’s worth noting that a data lake by itself doesn’t deliver AI – it enables AI by providing data. Companies also need either in-house talent or external help to actually build models and interpret them. The good news is that cloud and SaaS AI offerings are lowering the barrier. In short, if an SME’s goal is to leverage AI and innovative analytics, a data lake is often a prerequisite infrastructure that will pay off when those projects kick off.

3.3 SME-Friendly Cloud Solutions for Warehouses and Lakes

One of the reasons SMEs can realistically consider data warehouses and data lakes today is the abundance of cloud-based solutions. These solutions remove much of the heavy lifting of setting up hardware, managing databases, and scaling, allowing smaller companies to focus on using the data rather than managing it. Here we outline some popular options and how they benefit SMEs:

Cloud Data Warehouses (DWaas – Data Warehouse as a Service): Leading cloud data warehouse platforms include Amazon Redshift, Google BigQuery, Snowflake, Microsoft Azure Synapse (formerly SQL Data Warehouse), and Oracle Autonomous Data Warehouse, among others. These are all managed services – you load your data and run queries, while the provider handles optimization, backups, and scaling. For example, Snowflake has gained popularity with SMEs because of its ease of use (“stupid easy to set up” as one user noted) and a pay-as-you-go model where you can suspend or resize compute to control costs. Google BigQuery offers a serverless model where you’re charged by data processed per query, making it attractive for spiky workloads. Amazon Redshift now has an on-demand scaling and even a serverless option in preview, similarly removing the need to manage clusters. The key benefit for SMEs: low upfront cost and quick deployment. You can typically start a warehouse in minutes on these platforms. Additionally, these services often have free tiers or low-cost tiers that are sufficient for smaller data volumes, which lowers the risk of trying.

Cloud Data Lakes: A data lake is more of an architecture than a single product, but cloud providers offer the building blocks. Amazon S3 (Simple Storage Service) is practically synonymous with cloud data lakes – it’s cheap, durable storage where you can dump any amount of data. AWS then provides Glue (for cataloging and ETL) and Athena (for querying data in S3 with SQL) to help use that data. Azure Data Lake Storage similarly provides massive storage, and Azure Synapse or HDInsight can query or process it. Google Cloud Storage plus BigQuery (which can query external data) or Dataproc covers the Google side. For a more integrated solution, some SMEs look at platforms like Databricks, which, on top of cloud storage, provides a managed Spark environment geared towards data engineering and machine learning on lake data (Databricks has a “lakehouse” concept merging both paradigms). Another emerging concept is Microsoft Fabric (mentioned in Azure context) which aims to unify data engineering, warehousing, and lake analysis in one SaaS offering – something potentially very SME-friendly in the future. The bottom line is, an SME can set up a data lake by simply creating a bucket in S3 or Azure and start loading files. The challenge is then how to catalog and analyze it, but services like Athena (SQL query on S3) mean you can treat the lake a bit like a warehouse when needed, without moving data around.

Hybrid and Cost-Effective Approaches: SMEs might also consider a hybrid approach to leverage both warehouses and lakes without duplicating too much data or cost. For instance, tools now allow a “query federation” where a data warehouse can query data in a data lake. BigQuery can do this with external tables, Snowflake has an External Table feature for data in S3, and Redshift Spectrum similarly can query S3 data. This means you could keep less-frequently-used data in cheap lake storage and only pull it into the warehouse when necessary for a query – best of both worlds. Another approach is using open data formats (like Parquet, ORC) in your lake which can then be loaded to any warehouse if needed. This avoids lock-in and keeps things flexible.

Ease of Setup and Maintenance: Cloud solutions often come with automation that SMEs appreciate. Automated backups, encryption by default, auto-scaling, and one-click integrations (like connecting a warehouse to popular data sources through built-in connectors or marketplaces) reduce the need for dedicated IT staff. For example, integrating your Salesforce CRM data into BigQuery can be done through Google’s BigQuery Data Transfer Service with a few configuration steps, no custom code. Many SaaS applications now directly offer connectors to popular warehouses (it’s common to see “export to Snowflake or Redshift” in SaaS analytics tools). This ecosystem makes adopting these platforms feasible for a small team.

To illustrate, here’s an SME-friendly scenario: A mid-sized online retailer uses Snowflake as its warehouse and S3 as a data lake. They have an ETL service (say, Fivetran) that nightly pulls data from their production PostgreSQL database, Google Analytics, and Shopify store into Snowflake – giving them a near-real-time warehouse for core metrics. Meanwhile, all the raw CSV exports, JSON logs, and even some images (perhaps product photos with metadata) are stored in S3. When their data scientist wants to experiment with an AI model, she can access the S3 data via a Spark notebook or even create an external table in Snowflake to join it with structured data. This setup leverages cloud strengths: Snowflake handles performance for BI, S3 handles cheap storage, and the two can work in tandem when needed. And importantly, the retailer’s small data team didn’t have to manage servers or worry about scaling; they rely on the cloud services to handle that.

In conclusion, the integration of warehouses and lakes with AI and analytics is not an either/or proposition. Data warehouses empower SMEs to extract value from data quickly for known needs, supporting the crucial BI that runs the business. Data lakes empower SMEs to explore and innovate, unlocking the potential of AI and big data when they are ready for it. Cloud solutions have made both approaches accessible, cost-effective, and scalable on-demand. An SME’s data strategy can thus start with one and evolve to include the other as their needs grow – with the cloud ensuring that this evolution is smooth and doesn’t require a huge overhaul. Next, we’ll consider the financial and operational implications (cost, scalability, maintenance) in more detail, which is often the deciding factor for smaller enterprises.

4. Cost, Scalability & Maintenance

For SMEs, adopting any new technology comes with concerns about cost, scalability, and maintenance overhead. In this section, we analyze these factors for data warehouses vs. data lakes, specifically from an SME perspective. We’ll compare cost structures, discuss scaling up (or down) as your business evolves, and look at what it takes to maintain each solution, including security considerations.

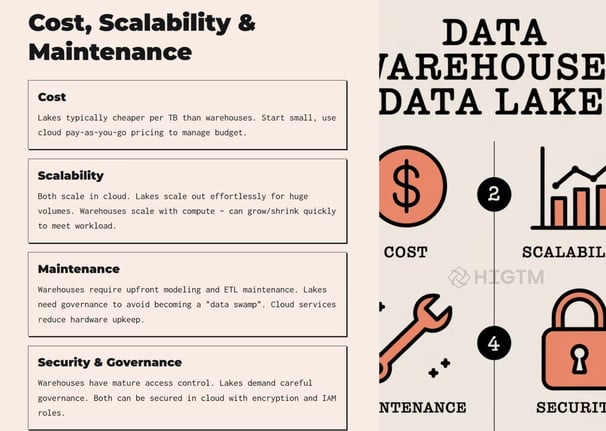

4.1 Cost-Effectiveness Considerations

Cost is often the make-or-break issue for SMEs. Let’s break down the cost factors for warehouses and lakes:

Upfront vs. Ongoing Costs: Traditional on-premises data warehouses historically required large upfront investment in hardware, database licenses, and technical expertise – often too high for SMEs. Cloud data warehouses have changed this model to a subscription or usage-based cost. Now, an SME can start a warehouse with virtually no upfront cost, paying monthly or per-query. Data lakes using cloud storage similarly have negligible upfront cost (you pay for storage as you use it). The ongoing cost for a warehouse will typically include compute and storage. For example, Snowflake or Redshift might charge by the hour for compute clusters and by the amount of data stored. Data lake costs are mostly storage and data transfer (processing is optional and usually separate, like if using Athena it’s per TB scanned).

Storage Costs: As noted earlier, storing data in a data lake is cheaper per unit than in a data warehouse. Cloud object storage can be as low as fractions of a cent per GB per month. Warehouses storage (especially if using high-performance SSDs or redundant storage for reliability) can cost more. Some warehouse services (BigQuery, Snowflake) separate storage costs (often at a slightly higher rate than raw cloud storage) and compute costs. An example ballpark: storing 1 TB of data in S3 might cost ~$25/month, whereas storing 1 TB in a warehouse could be 2-3x that (though exact prices vary). One source clearly states: “data lakes are a much less expensive alternative because data warehouses… require more expensive storage options”.

Compute/Query Costs: For warehouses, you’re paying for the ability to query quickly. This could be a dedicated cluster running 24/7 (you pay whether you use it or not, unless you turn it off) or a serverless on-demand model where each query has a cost. Data lake querying (via tools like Athena, etc.) usually charges by data scanned or by the compute time in tools like Spark. If an SME only runs occasional big analysis, a data lake query model might be cheaper (no running warehouse all the time). If they run frequent reports, a warehouse might be more cost-effective because of optimized performance.

Human Resource Costs: It’s easy to overlook the cost of people and development. A warehouse typically requires effort to set up ETL pipelines and design schemas (though this can be outsourced or mitigated with modern ELT tools). A data lake might require hiring a data engineer or training someone to manage data quality later or to set up query engines. SMEs should factor in whether they have/need an IT team or if a solution can be mostly automated. Often, the turnkey warehouse services pitch that they “save costs and time on setting up an in-house team”, which is crucial for SMEs with lean teams. If an SME tries to build a complex data lake infrastructure themselves without sufficient expertise, the costs (and delays) can mount. Sometimes using a managed platform or consulting service can be more cost-effective in the long run.

Scaling Costs: SMEs need to ensure the solution is cost-effective not just at the start but as data grows. Cloud warehouses generally allow scaling up or down easily – but scaling up means higher costs. The “pay only for what you use” model is a double-edged sword: it prevents overpaying when you’re small, but if you suddenly run a lot of queries or your data doubles, your bill can increase accordingly. Data lakes scale storage very cheaply, but if your analysis needs grow, you might end up incurring compute costs to process that mountain of data, or costs to move data around. A best practice is to set budgets/alerts and use cost monitoring tools (cloud providers have these) to avoid surprises.

For example, an SME might budget that a warehouse will cost $1000/month for their current workload. If they foresee growth, they should project what costs would be at 2x or 5x data volume and ensure that’s still affordable or plan for archiving older data to manage costs. With a lake, they might spend only $200/month on storage now, but a heavy analysis might incur $50 in Athena costs one day. Being aware and managing usage is key.

In general, data lakes are very cost-effective for storage and initial ingestion, whereas data warehouses justify their higher cost by providing faster value extraction. Many SMEs find a combination works: keep the bulk of data in a cheap lake, and only store high-value, frequently-used data in the warehouse (smaller size means lower warehouse cost). This tiered approach can be quite cost-efficient.

4.2 Scalability and Performance

Scalability is about handling growth (in data volume or users), and performance is about how fast queries return or how well the system handles load. Both warehouses and lakes can scale, but differently:

Data Warehouse Scalability: Modern data warehouses (especially cloud-based) are designed to scale out (by adding more nodes) or scale up (using more powerful processors) as data and user counts grow. For instance, Amazon Redshift allows adding nodes to a cluster to improve performance on larger data sets; Snowflake separates storage and compute, so you can increase the compute size for faster queries on more data without touching storage. There’s usually a linear relationship – more data or more users means you pay for more compute to maintain speed. One advantage for SMEs is the ability to start small (maybe a few hundred GB of data, a small virtual warehouse) and gradually scale to multiple TB and more powerful clusters without changing architecture – you just turn the dial on the service. Cloud warehouses often tout seamless scaling; however, there can still be performance tuning needed (very large data or very complex queries might need partitioning, clustering keys, etc., which are technical details to optimize performance). The key point is capacity can be increased on-demand.

Data Lake Scalability: Data lakes, built on distributed storage, can scale almost infinitely in terms of storage. The question is processing power when you need to use the data. If using something like Hadoop or Spark on a data lake, you might need to scale the cluster size to process more data – which cloud can also handle by adding more nodes (either manually or via services that auto scale). Many companies have implemented data lakes precisely for scalability: you don’t worry about outgrowing it, because you can always just add more files. One potential performance issue is that if a lake lacks structure, queries can be slower (scanning a ton of raw data). Techniques like partitioning data (storing in folders by date, for example) or using columnar file formats (Parquet, ORC) help maintain performance even as data grows. Essentially, a data lake’s storage scales independently of compute – which is great for collecting data, but when analyzing, you need to provision enough compute to crunch it in a timely manner.

Concurrency and Users: Data warehouses are generally better at handling many concurrent users or queries. They are databases built for analytics, so if 10 people run reports at the same time, the warehouse can handle it (to a limit, depending on its size). Data lake processing, especially if using batch frameworks, might not be as interactive. However, newer query engines and the concept of lakehouse are improving concurrent query handling on lakes. For an SME, this might not be a big issue (you may have a small set of analysts), but it’s worth noting if you foresee lots of people or automated processes hitting the data at once (like many BI dashboards refreshing simultaneously).

Real-time needs: If you need near real-time data updates and queries (like streaming data), warehouses are adding features for that (e.g., real-time ingestion in BigQuery or Redshift streaming). Data lakes historically were more for batch (dump data, then process in batches), though technologies like Kafka + lake or Spark streaming allow real-time pipelines too. SMEs that need live dashboards (say monitoring IoT device status minute by minute) might lean on a warehouse or specialized time-series databases, but could also use a lake with a streaming layer. It adds complexity, so often a warehouse with real-time ingestion is simpler for an SME.

To put it simply: both solutions are scalable, but you pay for performance. A well-designed data warehouse can handle very large data sets quickly, but costs will rise as you scale. A data lake can handle huge data sets cheaply, but extracting insights might slow down if you don’t scale your processing power accordingly (which then costs more).

For an SME, cloud scalability means you won’t outgrow the solution for a long time. A small company today could become a medium one in a few years, and their Redshift or Snowflake can grow with them (or they can transition to a bigger setup without starting from scratch). Likewise, their S3 data lake could accumulate 100x data, and it’s just a matter of paying for the storage. One caution: always design with data growth in mind. If you expect rapid growth, plan your partitioning, indexing, and pipeline designs early to avoid performance bottlenecks later. This is an area where consulting with experts (like HIGTM) can ensure your foundation is strong enough to scale.

4.3 Maintenance and Ease of Setup

Maintenance refers to the ongoing tasks to keep the system running efficiently – e.g., updating software, fixing data issues, managing users and permissions, optimizing performance, etc. Ease of setup is about the initial effort to get the solution up and running. These are significant factors for SMEs that may not have a dedicated IT department.

Setting up a Data Warehouse: Thanks to cloud, setting up a basic data warehouse is faster than ever. You can, for example, create a Snowflake account and within hours have databases ready. The bigger effort is in ETL/ELT – getting your data into the warehouse and modeling it. SMEs should plan the data pipeline: What data sources? How to extract? Many modern solutions (like Stitch, Fivetran, or AWS Data Pipeline) can do this with minimal coding, using connectors to common sources. Designing the schema is another step – ideally with knowledge of your reporting needs. This can be iterative: you start with a simple schema and refine as you go. The great part is many warehouses come with sample templates or have active communities with best practices. For maintenance, cloud warehouses reduce a lot of traditional chores: no hardware to upgrade, no DB patching, automated vacuuming or indexing in some cases. However, you still need to maintain data quality and update pipelines when source systems change (e.g., if your CRM adds a new field you want to capture, you must adjust the pipeline). Also, as data volume grows, you might need to optimize (like adding sorting keys, partitioning large fact tables, etc.) which may require some expertise.

Setting up a Data Lake: Setting up the storage is trivial (create a bucket). The challenge is setting up a useful data lake environment – ingesting data and enabling analysis. Without a data warehouse’s structure, you need to decide how to organize the raw data (by source? by date? etc.). Many SME data lake projects start as just “dump everything in S3.” While that technically works, to make data usable you might need to create catalogues (e.g., AWS Glue Data Catalog or a Hive metastore) so you know what data you have and how to query it. If using Hadoop/Spark, setting up those clusters (or using a managed service like AWS EMR, Databricks, or Azure HDInsight) is needed. It’s fair to say a purely DIY data lake has a higher technical barrier than a warehouse. However, if you use a managed platform (Databricks, for example, or Azure Synapse which integrates lake storage with SQL and Spark interfaces), it can be much more approachable. Maintenance for a lake involves monitoring storage (though rarely an issue beyond cost), managing metadata (keeping track of what datasets exist and ensuring they’re documented), and potentially managing the processing engines that use the data. Data lakes also require data lifecycle management: you might accumulate outdated data that needs archiving or deletion (especially if there are privacy regulations, e.g., remove personal data after X years). Implementing those policies is part of maintenance.

Security Maintenance: Both warehouses and lakes require setting up user roles, permissions, and maybe network security (like VPC, firewall rules). Cloud services simplify a lot but not entirely. A warehouse might need user accounts for each analyst with appropriate table permissions. A data lake might need bucket policies or IAM roles configured so that only the right people or processes can access certain folders. Regular audits are wise – e.g., reviewing who has access to sensitive data. Cloud providers offer encryption at rest and in transit; enabling and managing encryption keys (if using customer-managed keys) is another task. Many SMEs stick with provider-managed keys for simplicity.

Updates and Upgrades: In cloud, the providers update the underlying software. With Snowflake or BigQuery, you just get new features automatically. With a more DIY approach (like running your own Hadoop cluster on AWS EC2), you’d have to patch/upgrade – which SMEs usually avoid by choosing managed services.

Monitoring and Troubleshooting: It’s important to have some monitoring – e.g., if a data pipeline fails, you need alerts. Warehouse services often have monitoring dashboards for query performance, etc. Setting up alerts on cloud spend is also a good maintenance step (to catch any runaway process). If using multiple tools, a bit of glue like CloudWatch (on AWS) might be used to keep an eye on things.

In terms of ease of use day-to-day, once setup:

A well-designed data warehouse is generally easy for end-users. They connect their BI tool or run SQL queries; not much maintenance on their part. The data engineering team (even if that’s a single person) needs to ensure data is updated and correct, but they are not firefighting infrastructure issues typically.

A data lake requires more technical skill to exploit. Without a clear interface, some SMEs might find their data lake underutilized (“we put all our data there, but who knows how to get insights out?”). To counter this, organizations often add user-friendly layers on top of lakes – for example, using Amazon Athena to let analysts run SQL on the lake, or loading some lake data into a temporary warehouse when needed. Maintenance here might include managing those intermediary steps.

Security Factors: We should highlight security as the user specifically mentioned it. Warehouses, being structured, allow fine-grained security (you can mask a salary column or restrict a table). Lakes often require securing at file or directory level (coarser) unless you implement something like a data lake governance tool that provides fine-grained controls. On cloud, both can be locked down to meet high security standards (with proper configuration). SMEs dealing with sensitive data (PII, financial info, healthcare data) should consider compliance requirements. Sometimes this influences choice: a warehouse from a reputable vendor might have out-of-the-box compliance (HIPAA compliance, etc.), whereas a DIY lake might put more onus on the company to enforce compliance.

5. Best Practices & Case Studies

Implementing a data warehouse or data lake (or both) is not just about technology – it’s about doing it in a way that delivers business value. In this section, we highlight best practices for SMEs embarking on this journey, share real-world examples of successful implementations, and note common mistakes (and how to avoid them). We’ll also give some practical recommendations on integrating these data solutions with your existing business tools and processes, ensuring a smooth adoption.

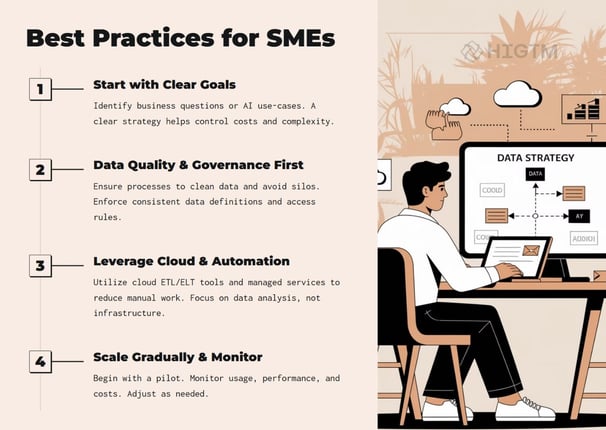

5.1 Best Practices for Implementation

Start with a Clear Strategy and Goals: Before writing a single line of code or signing up for a cloud service, define what you want to achieve. This could be as simple as “consolidate all sales and marketing data to improve reporting” or “enable data science on our customer data to improve retention”. Clear goals will drive your design and prevent scope creep. One mid-market strategy guide suggests SMEs should “identify your goals” and understand your current data as a first step. This includes talking to stakeholders: What reports or insights do different teams need? Are there pain points (like manual data merging or unanswered questions) that the new system should solve? Having business buy-in on goals ensures the project has direction and support.

Phased Approach – Think Big, Start Small: It’s wise to envision a scalable system for the future (think big) but implement in increments (start small). For example, you might initially integrate just a few key data sources into a warehouse and get some wins (like a working dashboard for management). Or spin up a minimal data lake with one data domain to test out tools. This phased approach allows learning and adjustment. It also provides intermediate deliverables that prove value. Avoid the “big bang” where you spend a year building and have nothing to show until the end – that’s risky, especially for SME budgets.

Ensure Data Quality and Consistency: Garbage in, garbage out. Whether you build a lake or warehouse, invest time in data cleansing and defining common data definitions. In a warehouse, this means your ETL processes should handle things like removing duplicates, standardizing date formats, and reconciling codes (e.g., “NY” vs “New York” in different systems). In a data lake, you might not clean upfront, but you should still record metadata and sources to later trace and clean when needed. Consider using data validation tools or having a data steward to review the data. Many warehouse projects fail not technically, but because users don’t trust the data – usually due to quality issues. Setting up a robust process to maintain accuracy (and communicating that to users) is key to adoption.

Data Governance from Day One: We’ve touched on this, but to reiterate as a best practice: define who can access what data, and how new data gets added. Even in a small company, it helps to have someone responsible for data governance. They should set policies such as: how to request a new data source to be added, security protocols, backup and retention policies, and so on. Governance also covers documentation – maintain a data catalog or at least a simple wiki that lists what tables or files are available, definitions of fields, update frequency, etc. This will immensely help users discover and correctly use the data. If you’re using a data lake, a governance framework prevents the chaos of a “data swamp.” In data lakes, you might implement tools like AWS Glue Data Catalog or Azure Data Catalog to organize datasets. For warehouses, ensure each major table has a description and owners.

Leverage Automation and Cloud Services: As a small or mid-sized business, you likely can’t afford to do everything manually. Use modern ETL/ELT tools (many offer free tiers or affordable plans for SMEs). Automate your data pipelines so that updates happen on schedule without someone having to push a button. Use Infrastructure-as-Code (IaC) if possible for reproducibility (though for simpler setups this might be overkill, a bit of scripting to set up resources can help). Also, use cloud monitoring to automatically alert you of failures in data loads, or if a query is running too long, etc. The more you can rely on cloud-managed services, the less you have to worry about maintenance. For example, a Data Warehouse as a Service offering can spare you the tasks of indexing and query tuning. Some best practices guides suggest SMEs look into DWaaS for convenience and utility – basically outsourcing the heavy lifting.

Integrate with Existing Tools & Workflow: Ensure that the new data repository connects with how your team already works. If your sales team lives in Excel, provide them with an ODBC connection or a tool like Power BI which they can use to pull data from the warehouse (instead of forcing them to learn a new interface from scratch). If you adopt a new BI tool as part of this project, conduct training sessions. For a data lake, maybe integrate with tools like Jupyter Notebooks for data science or a simple query interface. The idea is to make data access part of daily operations. It might mean embedding reports into something like SharePoint or Slack channels. Also, consider connecting the warehouse or lake to other business systems if needed (for example, feeding processed data back into a CRM to enrich customer profiles). The more the data platform becomes intertwined with business processes, the more valuable and harder to abandon it becomes.

Performance Tuning and Optimization: After initial setup, monitor usage and performance, and fine-tune. Best practices include adding proper indexes or clustering in warehouses once you see query patterns, partitioning large tables by date (common for fact tables), and perhaps summarizing very large data sets into aggregate tables for super-fast queries (while keeping detail for deeper analysis). In a data lake, if certain large queries are common, you might materialize the result into a smaller table or even consider moving that subset into the warehouse. The cloud provides many metrics – use them. If queries are slow, investigate why: maybe you need to redistribute data differently or provide more compute. A little tuning can save cost too (faster queries = less compute time).

Backup and Recovery Planning: Although cloud services are reliable, have a backup strategy. For warehouses, that might mean doing periodic snapshots or exports (some services do this automatically). For lakes, perhaps a lifecycle rule to copy critical data to a backup bucket or to Glacier storage. Also test (if possible) that you can restore in case of an accidental deletion or a corruption (rare but possible via user error). Knowing that your data is safe provides peace of mind and is part of governance.

Keep Security and Compliance in Focus: Implement least-privilege access (give people access to only the data they need). Mask sensitive data in analysis environments if possible. If you have customer personal data and need to comply with GDPR or similar, set up deletion pipelines for when a user invokes their right to be forgotten (meaning you might need to delete or anonymize their data in your lake/warehouse too). Regularly update passwords/keys and use multi-factor authentication for accessing the management of these systems. Many SMEs rely on cloud security defaults which are good, but you must still use them correctly (e.g., don’t accidentally make your data lake storage public).

5.2 Common Mistakes and How to Avoid Them

Implementing data solutions can come with pitfalls. Here are some common mistakes SMEs should watch out for:

Boiling the Ocean: Trying to do too much at once – integrating every possible data source and attempting to solve all data problems in one project. This often leads to missed deadlines, budget overruns, and a system that’s too complex. Avoidance: Prioritize high-value data and use cases first. It’s okay if not all data is in the warehouse on day one. Expand gradually.

Lack of User Involvement: Building a warehouse/lake in a vacuum without involving the end-users (analysts, managers, etc.). This can result in a solution that doesn’t actually meet their needs or is too hard to use. Avoidance: Engage key users early, get feedback on data schema, tools, and even ask them to test-run the new system. This also fosters ownership and adoption.

Neglecting Data Governance: Not setting rules or standards, leading to disorganized data or security issues. A notorious issue for lakes is the “data swamp” scenario where data is dumped with no order and no documentation – eventually no one uses it because it’s too confusing. Avoidance: As discussed, implement governance from the start. Even if it’s simple (like a spreadsheet tracking data sources and owners), have something. Enforce some structure in how data is stored (directory structures, naming conventions).

Performance Blind Spot: Perhaps focusing only on getting data in, and not thinking about query performance until users complain. Avoidance: After the initial load, test some representative queries or reporting tasks. Does the result come back in a reasonable time? If not, tune or adjust. Many small issues (like missing indexes or not partitioning a huge table) can degrade performance but are fixable if caught early.

Overestimating In-House Skills: Sometimes an SME might be ambitious in using complex big data tech (like setting up a Hadoop cluster) without the needed expertise, resulting in a half-working system or one that can’t be maintained. Avoidance: Be realistic about what your team can manage. It might be better to use a simpler managed service or get a consultant for the tricky parts than to struggle with a DIY setup that fails. As an example, some companies tried building data lakes on Hadoop and later realized they didn’t have the skills to optimize it, causing the project to stall (this led to the rise of simpler “lakehouse” solutions).

Ignoring Scalability Until It’s a Problem: Maybe initially things run fine, but as data grows 10x, the nightly ETL jobs start missing their window or queries slow down, and you find the design doesn’t scale. Avoidance: Design with some headroom. If you expect growth, test with larger data volumes (maybe simulate by copying data). Use scalable cloud services that can adjust, and set up monitoring to catch when you’re nearing capacity.

Not Training Users: Rolling out a new data tool but not training the team can lead to low adoption. People stick to old habits (Excel files, etc.) because they aren’t comfortable with the new system. Avoidance: Provide training sessions, how-to guides, and maybe appoint data champions in each team who can help others. Demonstrate the benefits by showing cool things they can do now (like “look, with our new warehouse you can get your sales report in seconds and drill down by product – no more manual merges!”). Sometimes quick wins and showcasing them helps a lot.

Security Lapses: This could be leaving data unsecured (maybe an S3 bucket open by mistake or not restricting who can see sensitive data in the warehouse). Avoidance: Conduct a basic security review. Use provided security features. It might be worth having an external party do a quick check if you’re handling very sensitive info. Many mistakes are avoidable (for instance, AWS has options to warn or prevent public buckets).

Being mindful of these pitfalls and actively countering them will increase the chances of a successful project that stakeholders love.

5.3 Real-World SME Case Studies

Let’s highlight a couple of real or realistic examples to illustrate successful SME implementations (names are altered for privacy, but lessons are real):

Case Study 1: “FinServe Co” – Data Warehouse for a Financial SME

FinServe Co is a mid-sized financial services provider (about 150 employees) offering small loans and financial products. They had data siloed in an accounting system, a CRM, and some marketing databases. Reporting was tedious – Excel exports and manual merges leading to frequent errors. FinServe decided to implement a cloud data warehouse (they chose Google BigQuery). Using an ETL service, they scheduled nightly imports of data from all key systems into BigQuery. They designed a schema that linked customer records across sources (so marketing, sales, and finance data could be seen together). Within 3 months, they built a suite of dashboards in Looker (BI tool) for management, showing live metrics like loan approvals, portfolio risk levels, and marketing campaign ROI. The result? Reporting time shrank (what took days of prep is now instantly available), and the company identified trends that were previously hard to see (e.g., linking marketing spend to loan origination growth by region). They credit the success to strong executive sponsorship (the CFO championed the project), a clear goal of unifying data for decision-making, and choosing a fully managed warehouse so their small IT team could handle it. They also took it slow – first focusing on financial and sales data (most critical), then later adding more datasets. Today, FinServe continuously expands the warehouse as they launch new products, knowing the infrastructure will scale.Case Study 2: “HealthPlus Clinic” – Data Lake Enables AI

HealthPlus is a network of local clinics (SMB in healthcare) looking to improve patient care using data. They had an EHR system (for structured data like visits, diagnoses) and also lots of unstructured data – physician notes, patient feedback forms, even some device data from health trackers they offer to patients. They implemented a data lake on Azure. They stored all EHR exports, plus document files and IoT data, in Azure Data Lake Storage. To make use of it, they used Azure Databricks (a Spark-based analytics platform) to clean and integrate these sources. One of their first projects: a predictive model to identify patients at risk of missing appointments (to improve follow-up care). By analyzing both structured data (e.g., past appointment history, demographics) and unstructured physician notes (searching for phrases indicating potential barriers like “transportation issue”), their data science team built a model that flags high-risk patients. They then feed a list of these patients into the warehouse that powers their clinic management dashboard, so front-desk staff get alerts to double-confirm appointments with them. This initiative led to a measurable reduction in no-show rates. The key takeaways from HealthPlus: the data lake allowed them to combine data they could never put in a traditional database easily, and using cloud-based AI tools meant they didn’t need an army of engineers – a couple of savvy analysts could leverage pre-built libraries to parse text and train models. They did invest in data governance by anonymizing patient data in the lake (to comply with privacy laws) and by controlling access tightly (only the data science team can access raw identified data; outputs to dashboards are aggregated or masked). This case highlights that even SMEs can directly use data lakes for AI use-cases that have real operational impact.Case Study 3: “EcoTrade” – Hybrid Approach for an E-commerce SME

EcoTrade is a growing e-commerce SME specializing in eco-friendly products. They initially set up a data warehouse on Snowflake to handle typical e-commerce reporting: sales, inventory, web analytics, etc., bringing together Shopify, Google Analytics, and their small ERP data. This worked well for a while. As they grew, they wanted to dive deeper into customer behavior and marketing attribution, which meant analyzing raw web clickstreams, email campaign data, and social media engagement – large and messy datasets. Instead of overloading the warehouse with this, they established a data lake in AWS S3 for all raw event data and marketing data. They then used Amazon Athena (an on-demand SQL query service for S3) to analyze this data when necessary, and fed summarized results (like customer segments, or attribution scores) back into the Snowflake warehouse. This way, their core warehouse stays clean and performant for day-to-day needs, but the lake is there to offload heavy crunching and to store data they might need later. One outcome: they discovered through data lake analysis that customers who engage with their Instagram and also visit the blog section of their site have a 20% higher conversion rate. This insight prompted them to integrate Instagram campaigns with blog content more tightly. For EcoTrade, the combination of warehouse and lake proved powerful. They caution that initially they lacked a data catalog and sometimes struggled to find what they needed in the lake – a lesson that led them to implement a cataloging tool and better naming conventions. They also leveraged the fact that Snowflake can query data in S3 via external tables, simplifying how their analysts access some lake data. This case underscores a pragmatic approach: use each technology for what it’s best at, and ensure they work in concert.

5.4 Practical Recommendations for Tool Integration

Finally, integrating a new data repository with existing business tools is crucial for maximizing value. Here are practical tips:

BI and Visualization: Connect your data warehouse to a visualization tool that your team is comfortable with. Many SMEs use tools like Power BI (especially if already using Microsoft 365), Tableau, Qlik, or Looker. Even Google Data Studio (free) can connect to many databases and might be enough for simpler needs. Do some demos with real data to ensure the tool meets your requirements. Build a few key dashboards as exemplars. This not only proves the data is working but creates excitement (people love seeing their data in pretty charts!). Make these dashboards accessible – big screen in the office, or a share link, to encourage a data-driven culture.

Excel Integration: Like it or not, Excel remains a go-to for many business users. The good news is modern warehouses often provide connectors (ODBC, etc.) so Excel can directly query them. You could set up an Excel report that fetches data from the warehouse at refresh – giving users their familiar interface but powered by live data. Just be mindful of not pulling extremely large data sets into Excel (that’s what the warehouse is for – aggregate in SQL first).

CRM/ERP Integration: Consider pushing enriched data back to operational systems if it helps. For example, if your warehouse comes up with a customer risk score, and your salespeople live in a CRM like Salesforce, you might push that score into Salesforce so they see it on the customer profile. Some SMEs integrate warehouses with CRM using built-in connectors or middleware (so the data flow is two-way, not just one-way into the warehouse). This closes the loop by making insights actionable in daily workflows.

Collaboration and Reporting: Use collaboration tools for data insights. For instance, if you use Slack or Teams, there are plugins and bots that can fetch data from warehouses (or at least from BI tools). This way, someone can type a command or click a shortcut to get the latest sales figure or ticket count, etc., right in the chat. It reduces friction to access data. Alternatively, schedule regular email reports or alerts from the warehouse (many BI tools can send out a PDF or snapshot on a schedule, or even alert if a metric goes beyond a threshold). These integrations ensure that the data solution actively drives actions, instead of sitting isolated.

Training on Tools: We mentioned training generally, but specifically train users on the integrated tools. If they are used to static Excel and now have an interactive BI dashboard, show them how to use filters, drill-down, etc. If data scientists are new to using a cloud data lake, ensure they know how to access it (maybe via a notebook environment) and how to find documentation. Sometimes a lunch-and-learn session can kickstart usage.

Iterate and Solicit Feedback: After initial integration, ask teams how the solutions are working for them. Maybe the finance team wants a slight tweak in how a metric is calculated, or marketing wishes for a new data source to be added (like pulling in social media analytics). Keep a backlog of such requests and iterate. SMEs that treat their data warehouse/lake as a product that evolves tend to derive continuously increasing value from it. Also, internal success stories help – if one team solved a problem using data, share that story across the company (it might spark ideas in other teams).

Data warehouses and data lakes are powerful tools that, when used correctly, can transform how SMEs leverage data and AI. The core differences between them – structured curated data vs. raw big data – mean they serve different needs, but are often complementary. SMEs should evaluate their immediate requirements (fast, reliable reporting or flexible, in-depth analysis or both) and choose a solution accordingly. Remember, the goal isn’t to adopt trendy tech for its own sake, but to enable better business outcomes: faster decisions, uncovering growth opportunities, cutting costs through efficiencies, and delighting customers with insights. With cloud options leveling the playing field, smaller companies can implement data architectures once only available to large enterprises. The key is a clear strategy, good governance, and a focus on business value.

If your organization is looking to adopt AI and become more data-driven, taking the step to build a solid data foundation is absolutely crucial. This could mean a robust data warehouse, a versatile data lake, or a hybrid of both. And you don’t have to do it alone – leveraging experts or consultants can accelerate this journey, ensuring you avoid pitfalls and follow best practices from day one.

HIGTM, for instance, has expertise in guiding SMEs through these decisions and implementations, aligning the technology to your business strategy. As we’ve discussed, done right, a data warehouse or data lake can unlock AI opportunities and insights that give your SME a competitive edge in today’s data-driven world. The sooner you start treating your data as a strategic asset, the sooner you’ll reap the rewards. Here’s to your data success! 🚀

Turn AI into ROI — Win Faster with HIGTM.

Consult with us to discuss how to manage and grow your business operations with AI.

© 2025 HIGTM. All rights reserved.